- •Table of Contents

- •What’s New in EViews 5.0

- •What’s New in 5.0

- •Compatibility Notes

- •EViews 5.1 Update Overview

- •Overview of EViews 5.1 New Features

- •Preface

- •Part I. EViews Fundamentals

- •Chapter 1. Introduction

- •What is EViews?

- •Installing and Running EViews

- •Windows Basics

- •The EViews Window

- •Closing EViews

- •Where to Go For Help

- •Chapter 2. A Demonstration

- •Getting Data into EViews

- •Examining the Data

- •Estimating a Regression Model

- •Specification and Hypothesis Tests

- •Modifying the Equation

- •Forecasting from an Estimated Equation

- •Additional Testing

- •Chapter 3. Workfile Basics

- •What is a Workfile?

- •Creating a Workfile

- •The Workfile Window

- •Saving a Workfile

- •Loading a Workfile

- •Multi-page Workfiles

- •Addendum: File Dialog Features

- •Chapter 4. Object Basics

- •What is an Object?

- •Basic Object Operations

- •The Object Window

- •Working with Objects

- •Chapter 5. Basic Data Handling

- •Data Objects

- •Samples

- •Sample Objects

- •Importing Data

- •Exporting Data

- •Frequency Conversion

- •Importing ASCII Text Files

- •Chapter 6. Working with Data

- •Numeric Expressions

- •Series

- •Auto-series

- •Groups

- •Scalars

- •Chapter 7. Working with Data (Advanced)

- •Auto-Updating Series

- •Alpha Series

- •Date Series

- •Value Maps

- •Chapter 8. Series Links

- •Basic Link Concepts

- •Creating a Link

- •Working with Links

- •Chapter 9. Advanced Workfiles

- •Structuring a Workfile

- •Resizing a Workfile

- •Appending to a Workfile

- •Contracting a Workfile

- •Copying from a Workfile

- •Reshaping a Workfile

- •Sorting a Workfile

- •Exporting from a Workfile

- •Chapter 10. EViews Databases

- •Database Overview

- •Database Basics

- •Working with Objects in Databases

- •Database Auto-Series

- •The Database Registry

- •Querying the Database

- •Object Aliases and Illegal Names

- •Maintaining the Database

- •Foreign Format Databases

- •Working with DRIPro Links

- •Part II. Basic Data Analysis

- •Chapter 11. Series

- •Series Views Overview

- •Spreadsheet and Graph Views

- •Descriptive Statistics

- •Tests for Descriptive Stats

- •Distribution Graphs

- •One-Way Tabulation

- •Correlogram

- •Unit Root Test

- •BDS Test

- •Properties

- •Label

- •Series Procs Overview

- •Generate by Equation

- •Resample

- •Seasonal Adjustment

- •Exponential Smoothing

- •Hodrick-Prescott Filter

- •Frequency (Band-Pass) Filter

- •Chapter 12. Groups

- •Group Views Overview

- •Group Members

- •Spreadsheet

- •Dated Data Table

- •Graphs

- •Multiple Graphs

- •Descriptive Statistics

- •Tests of Equality

- •N-Way Tabulation

- •Principal Components

- •Correlations, Covariances, and Correlograms

- •Cross Correlations and Correlograms

- •Cointegration Test

- •Unit Root Test

- •Granger Causality

- •Label

- •Group Procedures Overview

- •Chapter 13. Statistical Graphs from Series and Groups

- •Distribution Graphs of Series

- •Scatter Diagrams with Fit Lines

- •Boxplots

- •Chapter 14. Graphs, Tables, and Text Objects

- •Creating Graphs

- •Modifying Graphs

- •Multiple Graphs

- •Printing Graphs

- •Copying Graphs to the Clipboard

- •Saving Graphs to a File

- •Graph Commands

- •Creating Tables

- •Table Basics

- •Basic Table Customization

- •Customizing Table Cells

- •Copying Tables to the Clipboard

- •Saving Tables to a File

- •Table Commands

- •Text Objects

- •Part III. Basic Single Equation Analysis

- •Chapter 15. Basic Regression

- •Equation Objects

- •Specifying an Equation in EViews

- •Estimating an Equation in EViews

- •Equation Output

- •Working with Equations

- •Estimation Problems

- •Chapter 16. Additional Regression Methods

- •Special Equation Terms

- •Weighted Least Squares

- •Heteroskedasticity and Autocorrelation Consistent Covariances

- •Two-stage Least Squares

- •Nonlinear Least Squares

- •Generalized Method of Moments (GMM)

- •Chapter 17. Time Series Regression

- •Serial Correlation Theory

- •Testing for Serial Correlation

- •Estimating AR Models

- •ARIMA Theory

- •Estimating ARIMA Models

- •ARMA Equation Diagnostics

- •Nonstationary Time Series

- •Unit Root Tests

- •Panel Unit Root Tests

- •Chapter 18. Forecasting from an Equation

- •Forecasting from Equations in EViews

- •An Illustration

- •Forecast Basics

- •Forecasting with ARMA Errors

- •Forecasting from Equations with Expressions

- •Forecasting with Expression and PDL Specifications

- •Chapter 19. Specification and Diagnostic Tests

- •Background

- •Coefficient Tests

- •Residual Tests

- •Specification and Stability Tests

- •Applications

- •Part IV. Advanced Single Equation Analysis

- •Chapter 20. ARCH and GARCH Estimation

- •Basic ARCH Specifications

- •Estimating ARCH Models in EViews

- •Working with ARCH Models

- •Additional ARCH Models

- •Examples

- •Binary Dependent Variable Models

- •Estimating Binary Models in EViews

- •Procedures for Binary Equations

- •Ordered Dependent Variable Models

- •Estimating Ordered Models in EViews

- •Views of Ordered Equations

- •Procedures for Ordered Equations

- •Censored Regression Models

- •Estimating Censored Models in EViews

- •Procedures for Censored Equations

- •Truncated Regression Models

- •Procedures for Truncated Equations

- •Count Models

- •Views of Count Models

- •Procedures for Count Models

- •Demonstrations

- •Technical Notes

- •Chapter 22. The Log Likelihood (LogL) Object

- •Overview

- •Specification

- •Estimation

- •LogL Views

- •LogL Procs

- •Troubleshooting

- •Limitations

- •Examples

- •Part V. Multiple Equation Analysis

- •Chapter 23. System Estimation

- •Background

- •System Estimation Methods

- •How to Create and Specify a System

- •Working With Systems

- •Technical Discussion

- •Vector Autoregressions (VARs)

- •Estimating a VAR in EViews

- •VAR Estimation Output

- •Views and Procs of a VAR

- •Structural (Identified) VARs

- •Cointegration Test

- •Vector Error Correction (VEC) Models

- •A Note on Version Compatibility

- •Chapter 25. State Space Models and the Kalman Filter

- •Background

- •Specifying a State Space Model in EViews

- •Working with the State Space

- •Converting from Version 3 Sspace

- •Technical Discussion

- •Chapter 26. Models

- •Overview

- •An Example Model

- •Building a Model

- •Working with the Model Structure

- •Specifying Scenarios

- •Using Add Factors

- •Solving the Model

- •Working with the Model Data

- •Part VI. Panel and Pooled Data

- •Chapter 27. Pooled Time Series, Cross-Section Data

- •The Pool Workfile

- •The Pool Object

- •Pooled Data

- •Setting up a Pool Workfile

- •Working with Pooled Data

- •Pooled Estimation

- •Chapter 28. Working with Panel Data

- •Structuring a Panel Workfile

- •Panel Workfile Display

- •Panel Workfile Information

- •Working with Panel Data

- •Basic Panel Analysis

- •Chapter 29. Panel Estimation

- •Estimating a Panel Equation

- •Panel Estimation Examples

- •Panel Equation Testing

- •Estimation Background

- •Appendix A. Global Options

- •The Options Menu

- •Print Setup

- •Appendix B. Wildcards

- •Wildcard Expressions

- •Using Wildcard Expressions

- •Source and Destination Patterns

- •Resolving Ambiguities

- •Wildcard versus Pool Identifier

- •Appendix C. Estimation and Solution Options

- •Setting Estimation Options

- •Optimization Algorithms

- •Nonlinear Equation Solution Methods

- •Appendix D. Gradients and Derivatives

- •Gradients

- •Derivatives

- •Appendix E. Information Criteria

- •Definitions

- •Using Information Criteria as a Guide to Model Selection

- •References

Forecast Basics—549

have constructed if, in 1990M01, you predicted values of HS from 1990M02 through 1996M01, given knowledge about the entire path of SP over that period.

The corresponding static forecast is displayed below:

Again, EViews backfills the values of the forecast series, HSF1, through 1990M01. This forecast is the one you would have constructed if, in 1990M01, you used all available data to estimate a model, and then used this estimated model to perform one-step ahead forecasts every month for the next six years.

The remainder of this chapter focuses on the details associated with the construction of these forecasts, the corresponding forecast evaluations, and forecasting in more complex settings involving equations with expressions or auto-updating series.

Forecast Basics

EViews stores the forecast results in the series specified in the Forecast name field. We will refer to this series as the forecast series.

The forecast sample specifies the observations for which EViews will try to compute fitted or forecasted values. If the forecast is not computable, a missing value will be returned. In some cases, EViews will automatically adjust the sample to prevent a forecast consisting entirely of missing values (see “Adjustment for Missing Values” on page 550, below). Note that the forecast sample may or may not overlap with the sample of observations used to estimate the equation.

For values not included in the forecast sample, there are two options. By default, EViews fills in the actual values of the dependent variable. If you turn off the Insert actuals for out-of-sample option, out-of-forecast-sample values will be filled with NAs.

550—Chapter 18. Forecasting from an Equation

As a consequence of these rules, all data in the forecast series will be overwritten during the forecast procedure. Existing values in the forecast series will be lost.

Computing Point Forecasts

For each observation in the forecast sample, EViews computes the fitted value of the dependent variable using the estimated parameters, the right-hand side exogenous variables, and either the actual or estimated values for lagged endogenous variables and residuals. The method of constructing these forecasted values depends upon the estimated model and user-specified settings.

To illustrate the forecasting procedure, we begin with a simple linear regression model with no lagged endogenous right-hand side variables, and no ARMA terms. Suppose that you have estimated the following equation specification:

y c x z

Now click on Forecast, specify a forecast period, and click OK.

For every observation in the forecast period, EViews will compute the fitted value of Y using the estimated parameters and the corresponding values of the regressors, X and Z:

$$\hat{y}_t = \hat{c}(1) + \hat{c}(2)x_t + \hat{c}(3)z_t. \tag{18.1}$$

You should make certain that you have valid values for the exogenous right-hand side variables for all observations in the forecast period. If any data are missing in the forecast sample, the corresponding forecast observation will be an NA.
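The point-forecast rule in (18.1), including the way a missing regressor produces a missing forecast, can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the coefficient values and data are made up, `point_forecasts` is a hypothetical helper, and NaN stands in for an EViews NA.

```python
import math

NA = math.nan  # stand-in for an EViews NA (assumption of this sketch)

def point_forecasts(coefs, x, z):
    """Return c(1) + c(2)*x_t + c(3)*z_t for each t; NA if a regressor is missing."""
    c1, c2, c3 = coefs
    out = []
    for xt, zt in zip(x, z):
        if math.isnan(xt) or math.isnan(zt):
            out.append(NA)          # missing regressor -> missing forecast
        else:
            out.append(c1 + c2 * xt + c3 * zt)
    return out

# Hypothetical estimated coefficients and regressor values.
coefs = (1.0, 0.5, -2.0)
x = [2.0, 4.0, NA, 6.0]
z = [1.0, 0.0, 1.0, 0.5]

yhat = point_forecasts(coefs, x, z)
print(yhat)
```

Only the third observation is affected by the missing value of X; because this specification has no dynamic terms, the forecasts on either side of it are computed normally.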

Adjustment for Missing Values

A missing value will be returned for the forecast in two cases: first, if any of the regressors has a missing value; and second, if any of the regressors is out of the range of the workfile. This includes the implicit error terms in AR models.

In the case of forecasts with no dynamic components in the specification (i.e. with no lagged endogenous or ARMA error terms), a missing value in the forecast series will not affect subsequent forecasted values. In the case where there are dynamic components, however, a single missing value in the forecasted series will propagate throughout all future values of the series.

As a convenience feature, EViews will move the starting point of the sample forward where necessary until a valid forecast value is obtained. Without these adjustments, the user would have to figure out the appropriate number of presample values to skip, otherwise the forecast would consist entirely of missing values. For example, suppose you wanted to forecast dynamically from the following equation specification:

y c y(-1) ar(1)

If you set the beginning of the forecast sample to the beginning of the workfile range, EViews will move the start of the forecast sample forward by 2 observations, and will use the pre-forecast-sample values of the lagged variables. The loss of 2 observations occurs because the residual loses one observation due to the lagged endogenous variable, so that the forecast for the error term can begin only from the third observation.
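The propagation of missing values in a dynamic forecast, and the effect of moving the start of the sample forward, can be illustrated with a simplified Python sketch. It assumes a model with a single lagged dependent variable and no AR term (so only one observation is lost rather than two), hypothetical coefficients, and NaN in place of NA; `dynamic_forecast` and `adjust_start` are illustrative names, not EViews functions, and the adjustment logic is not claimed to match EViews internals.

```python
import math

NA = math.nan  # stand-in for an EViews NA (assumption of this sketch)

def dynamic_forecast(c1, c2, y, start, end):
    """Dynamic forecast of y_t = c1 + c2*y_{t-1} over observations start..end."""
    forecasts = {}
    # The first lag is an actual value; it is missing if outside the data range.
    lag = y[start - 1] if start >= 1 else NA
    for t in range(start, end + 1):
        lag = c1 + c2 * lag        # a NaN lag makes every later forecast NaN
        forecasts[t] = lag
    return forecasts

def adjust_start(y, start, end):
    """Move the start forward until the required lagged actual is available."""
    s = start
    while s <= end and (s < 1 or math.isnan(y[s - 1])):
        s += 1
    return s

y = [NA, 1.0, 1.2, 1.1, 1.3, 1.4]   # hypothetical data, first value missing

unadjusted = dynamic_forecast(1.0, 0.5, y, 1, 4)   # every value is NaN
s = adjust_start(y, 0, 4)                          # first usable start
adjusted = dynamic_forecast(1.0, 0.5, y, s, 4)
print(unadjusted, s, adjusted)
```

Without the adjustment, the single missing lag at the start contaminates the whole forecast path; after the start is moved forward, every forecast is computable.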

Forecast Errors and Variances

Suppose the “true” model is given by:

$$y_t = x_t'\beta + \epsilon_t, \tag{18.2}$$

where $\epsilon_t$ is an independent and identically distributed, mean zero random disturbance, and $\beta$ is a vector of unknown parameters. Below, we relax the restriction that the $\epsilon$'s be independent.

The true model generating y is not known, but we obtain estimates b of the unknown parameters β . Then, setting the error term equal to its mean value of zero, the (point) forecasts of y are obtained as:

$$\hat{y}_t = x_t'b. \tag{18.3}$$

Forecasts are made with error, where the error is simply the difference between the actual and forecasted value, $e_t = y_t - x_t'b$. Assuming that the model is correctly specified, there are two sources of forecast error: residual uncertainty and coefficient uncertainty.

Residual Uncertainty

The first source of error, termed residual or innovation uncertainty, arises because the innovations in the equation are unknown for the forecast period and are replaced with their expectations. While the residuals are zero in expected value, the individual values are non-zero; the larger the variation in the individual errors, the greater the overall error in the forecasts.

The standard measure of this variation is the standard error of the regression (labeled “S.E. of regression” in the equation output). Residual uncertainty is usually the largest source of forecast error.

In dynamic forecasts, innovation uncertainty is compounded by the fact that lagged dependent variables and ARMA terms depend on lagged innovations. EViews also sets these equal to their expected values, which differ randomly from realized values. This additional source of forecast uncertainty tends to rise over the forecast horizon, leading to a pattern of increasing forecast errors. Forecasting with lagged dependent variables and ARMA terms is discussed in more detail below.

Coefficient Uncertainty

The second source of forecast error is coefficient uncertainty. The estimated coefficients b of the equation deviate from the true coefficients β in a random fashion. The standard error of the estimated coefficient, given in the regression output, is a measure of the precision with which the estimated coefficients measure the true coefficients.

The effect of coefficient uncertainty depends upon the exogenous variables. Since the estimated coefficients are multiplied by the exogenous variables x in the computation of forecasts, the more the exogenous variables deviate from their mean values, the greater is the forecast uncertainty.

Forecast Variability

The variability of forecasts is measured by the forecast standard errors. For a single equation without lagged dependent variables or ARMA terms, the forecast standard errors are computed as:

$$\text{forecast se} = s\sqrt{1 + x_t'(X'X)^{-1}x_t} \tag{18.4}$$

where s is the standard error of regression. These standard errors account for both innovation (the first term) and coefficient uncertainty (the second term). Point forecasts made from linear regression models estimated by least squares are optimal in the sense that they have the smallest forecast variance among forecasts made by linear unbiased estimators.

Moreover, if the innovations are normally distributed, the forecast errors have a t-distribution and forecast intervals can be readily formed.

If you supply a name for the forecast standard errors, EViews computes and saves a series of forecast standard errors in your workfile. You can use these standard errors to form forecast intervals. If you choose the Do graph option for output, EViews will plot the forecasts with plus and minus two standard error bands. These two standard error bands provide an approximate 95% forecast interval; if you (hypothetically) make many forecasts, the actual value of the dependent variable will fall inside these bounds 95 percent of the time.
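As a rough illustration of (18.4), the Python sketch below computes forecast standard errors and two standard error bands for a regression on a constant and a single regressor. In that special case the quadratic form $x_t'(X'X)^{-1}x_t$ reduces to $1/n + (x_0 - \bar{x})^2 / \sum_i (x_i - \bar{x})^2$, which avoids a matrix inverse. The data and the function names are hypothetical, and the sketch does not reproduce EViews output.

```python
import math

def ols_simple(x, y):
    """Least squares fit of y = a + b*x; returns a, b, s, mean of x, and Sxx."""
    n = len(x)
    xbar = sum(x) / n
    ybar = sum(y) / n
    sxx = sum((xi - xbar) ** 2 for xi in x)
    b = sum((xi - xbar) * (yi - ybar) for xi, yi in zip(x, y)) / sxx
    a = ybar - b * xbar
    ssr = sum((yi - a - b * xi) ** 2 for xi, yi in zip(x, y))
    s = math.sqrt(ssr / (n - 2))          # standard error of the regression
    return a, b, s, xbar, sxx

def forecast_with_se(x, y, x0):
    """Point forecast at x0 and its standard error, as in (18.4)."""
    a, b, s, xbar, sxx = ols_simple(x, y)
    n = len(x)
    # "1" is innovation uncertainty; the remaining terms are coefficient uncertainty.
    se = s * math.sqrt(1 + 1 / n + (x0 - xbar) ** 2 / sxx)
    return a + b * x0, se

# Hypothetical estimation data.
x = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [2.1, 3.9, 6.2, 7.8, 10.1]

f_mean, se_mean = forecast_with_se(x, y, 3.0)   # regressor at its sample mean
f_far, se_far = forecast_with_se(x, y, 8.0)     # regressor far from its mean

low, high = f_far - 2 * se_far, f_far + 2 * se_far   # approximate 95% band
print(se_mean, se_far, (low, high))
```

The standard error is smallest when the regressor equals its sample mean and grows as the regressor moves away from it, which is the coefficient-uncertainty effect described above.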

Additional Details

EViews accounts for the additional forecast uncertainty generated when lagged dependent variables are used as explanatory variables (see “Forecasts with Lagged Dependent Variables” on page 555).

There are cases where coefficient uncertainty is ignored in forming the forecast standard error. For example, coefficient uncertainty is always ignored in equations specified by expression (such as nonlinear least squares) and in equations that include PDL (polynomial distributed lag) terms (see “Forecasting with Expression and PDL Specifications” on page 567).

In addition, forecast standard errors do not account for GLS weights in estimated panel equations.

Forecast Evaluation

Suppose we construct a dynamic forecast for HS over the period 1990M02 to 1996M01 using our estimated housing equation. If the Forecast evaluation option is checked, and there are actual data for the forecasted variable for the forecast sample, EViews reports a table of statistical results evaluating the forecast:

Forecast: HSF

Actual: HS

Sample: 1990M02 1996M01

Include observations: 72

| Statistic | Value |
|---|---|
| Root Mean Squared Error | 0.318700 |
| Mean Absolute Error | 0.297261 |
| Mean Absolute Percentage Error | 4.205889 |
| Theil Inequality Coefficient | 0.021917 |
| Bias Proportion | 0.869982 |
| Variance Proportion | 0.082804 |
| Covariance Proportion | 0.047214 |

Note that EViews cannot compute a forecast evaluation if there are no data for the dependent variable for the forecast sample.

The forecast evaluation is saved in one of two formats. If you turn on the Do graph option, the forecast evaluations are included along with a graph of the forecasts. If you wish to display the evaluations in their own table, you should turn off the Do graph option in the Forecast dialog box.

Suppose the forecast sample is $t = T+1, T+2, \ldots, T+h$, and denote the actual and forecasted value in period $t$ as $y_t$ and $\hat{y}_t$, respectively. The reported forecast error statistics are computed as follows:

| Statistic | Formula |
|---|---|
| Root Mean Squared Error | $\sqrt{\sum_{t=T+1}^{T+h} (\hat{y}_t - y_t)^2 / h}$ |
| Mean Absolute Error | $\sum_{t=T+1}^{T+h} \left\lvert \hat{y}_t - y_t \right\rvert / h$ |
| Mean Absolute Percentage Error | $100 \sum_{t=T+1}^{T+h} \left\lvert \dfrac{\hat{y}_t - y_t}{y_t} \right\rvert / h$ |
| Theil Inequality Coefficient | $\dfrac{\sqrt{\sum_{t=T+1}^{T+h} (\hat{y}_t - y_t)^2 / h}}{\sqrt{\sum_{t=T+1}^{T+h} \hat{y}_t^2 / h} + \sqrt{\sum_{t=T+1}^{T+h} y_t^2 / h}}$ |

The first two forecast error statistics depend on the scale of the dependent variable. These should be used as relative measures to compare forecasts for the same series across different models; the smaller the error, the better the forecasting ability of that model according to that criterion. The remaining two statistics are scale invariant. The Theil inequality coefficient always lies between zero and one, where zero indicates a perfect fit.
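The four statistics defined above translate directly into code. The following Python sketch is an illustration only: `forecast_eval` is a hypothetical helper, the data are made up, and it assumes that no actual value is zero (otherwise the percentage error is undefined) and that the two series are not both identically zero.

```python
import math

def forecast_eval(actual, forecast):
    """RMSE, MAE, MAPE and Theil inequality coefficient over a forecast sample."""
    h = len(actual)
    errors = [f - a for a, f in zip(actual, forecast)]
    rmse = math.sqrt(sum(e * e for e in errors) / h)
    mae = sum(abs(e) for e in errors) / h
    mape = 100 * sum(abs(e / a) for e, a in zip(errors, actual)) / h
    theil = rmse / (
        math.sqrt(sum(f * f for f in forecast) / h)
        + math.sqrt(sum(a * a for a in actual) / h)
    )
    return {"rmse": rmse, "mae": mae, "mape": mape, "theil": theil}

# Hypothetical actual and forecasted values.
actual = [2.0, 4.0, 4.0]
forecast = [1.0, 4.0, 6.0]

stats = forecast_eval(actual, forecast)
perfect = forecast_eval(actual, actual)   # a perfect forecast
print(stats)
```

RMSE and MAE are in the units of the dependent variable, while MAPE and the Theil coefficient are unit free; for a perfect forecast all four are zero.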

The mean squared forecast error can be decomposed as:

$$\sum (\hat{y}_t - y_t)^2 / h = \left( \left( \sum \hat{y}_t / h \right) - \bar{y} \right)^2 + (s_{\hat{y}} - s_y)^2 + 2(1 - r)\, s_{\hat{y}} s_y \tag{18.5}$$

where $\sum \hat{y}_t / h$, $\bar{y}$, $s_{\hat{y}}$, $s_y$ are the means and (biased) standard deviations of $\hat{y}_t$ and $y$, and $r$ is the correlation between $\hat{y}$ and $y$. The proportions are defined as:

| Proportion | Formula |
|---|---|
| Bias Proportion | $\dfrac{\left( \left( \sum \hat{y}_t / h \right) - \bar{y} \right)^2}{\sum (\hat{y}_t - y_t)^2 / h}$ |
| Variance Proportion | $\dfrac{(s_{\hat{y}} - s_y)^2}{\sum (\hat{y}_t - y_t)^2 / h}$ |
| Covariance Proportion | $\dfrac{2(1 - r)\, s_{\hat{y}} s_y}{\sum (\hat{y}_t - y_t)^2 / h}$ |

• The bias proportion tells us how far the mean of the forecast is from the mean of the actual series.

• The variance proportion tells us how far the variation of the forecast is from the variation of the actual series.

• The covariance proportion measures the remaining unsystematic forecasting errors.

Note that the bias, variance, and covariance proportions add up to one.
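The decomposition of the mean squared forecast error, and the fact that the three proportions sum to one, can be checked numerically. The Python sketch below is illustrative: `mse_proportions` is a hypothetical helper, the data are made up, and it assumes that neither series is constant (so the correlation is defined) and that the forecast is not perfect (so the mean squared error is nonzero).

```python
import math

def mse_proportions(actual, forecast):
    """Bias, variance and covariance proportions of the mean squared error."""
    h = len(actual)
    mean_a = sum(actual) / h
    mean_f = sum(forecast) / h
    # Biased (divide by h) standard deviations, as in the text.
    s_a = math.sqrt(sum((a - mean_a) ** 2 for a in actual) / h)
    s_f = math.sqrt(sum((f - mean_f) ** 2 for f in forecast) / h)
    cov = sum((a - mean_a) * (f - mean_f) for a, f in zip(actual, forecast)) / h
    r = cov / (s_a * s_f)                 # correlation of forecast and actual
    mse = sum((f - a) ** 2 for a, f in zip(actual, forecast)) / h
    bias = (mean_f - mean_a) ** 2 / mse
    variance = (s_f - s_a) ** 2 / mse
    covariance = 2 * (1 - r) * s_f * s_a / mse
    return bias, variance, covariance

# Hypothetical series: the second forecast is the actual series shifted up by 1.
actual = [2.0, 4.0, 4.0, 6.0]
mixed = mse_proportions(actual, [1.0, 4.5, 6.0, 5.0])
shifted = mse_proportions(actual, [3.0, 5.0, 5.0, 7.0])
print(mixed, shifted)
```

A forecast that differs from the actual series only by a constant shift has all of its error in the bias proportion, which is the pattern seen (less extremely) in the example output above.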

If your forecast is “good”, the bias and variance proportions should be small, so that most of the forecast error is concentrated in the covariance proportion. For additional discussion of forecast evaluation, see Pindyck and Rubinfeld (1991, Chapter 12).

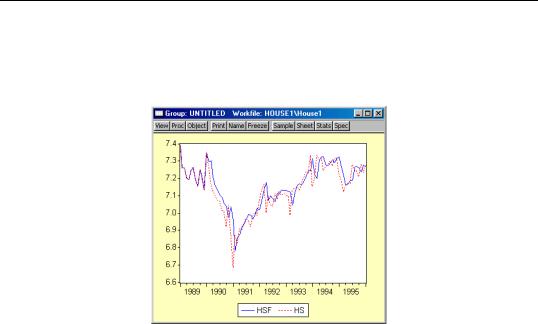

For the example output, the bias proportion is large, indicating that the mean of the forecasts does a poor job of tracking the mean of the dependent variable. To check this, we will plot the forecasted series together with the actual series in the forecast sample with the two standard error bounds. Suppose we saved the forecasts and their standard errors as HSF and HSFSE, respectively. Then the plus and minus two standard error series can be generated by the commands:

smpl 1990m02 1996m01

series hsf_high = hsf + 2*hsfse

series hsf_low = hsf - 2*hsfse

Create a group containing the four series. You can highlight the four series HS, HSF, HSF_HIGH, and HSF_LOW, double click on the selected area, and select Open Group, or you can select Quick/Show… and enter the four series names. Once you have the group open, select View/Graph/Line.

The forecasts completely miss the downturn at the start of the 1990s, but, subsequent to the recovery, track the trend reasonably well from 1992 to 1996.

Forecasts with Lagged Dependent Variables

Forecasting is complicated by the presence of lagged dependent variables on the right-hand side of the equation. For example, we can augment the earlier specification to include the first lag of Y:

y c x z y(-1)

and click on the Forecast button and fill out the series names in the dialog as above. There is some question, however, as to how we should evaluate the lagged value of Y that appears on the right-hand side of the equation. There are two possibilities: dynamic forecasting and static forecasting.

Dynamic Forecasting

If you select dynamic forecasting, EViews will perform a multi-step forecast of Y, beginning at the start of the forecast sample. For our single lag specification above:

• The initial observation in the forecast sample will use the actual value of lagged Y. Thus, if $S$ is the first observation in the forecast sample, EViews will compute:

$$\hat{y}_S = \hat{c}(1) + \hat{c}(2)x_S + \hat{c}(3)z_S + \hat{c}(4)y_{S-1}, \tag{18.6}$$

where $y_{S-1}$ is the value of the lagged endogenous variable in the period prior to the start of the forecast sample. This is the one-step ahead forecast.

• Forecasts for subsequent observations will use the previously forecasted values of Y:

$$\hat{y}_{S+k} = \hat{c}(1) + \hat{c}(2)x_{S+k} + \hat{c}(3)z_{S+k} + \hat{c}(4)\hat{y}_{S+k-1}. \tag{18.7}$$

• These forecasts may differ significantly from the one-step ahead forecasts.

If there are additional lags of Y in the estimating equation, the above algorithm is modified to account for the non-availability of lagged forecasted values in the additional period. For example, if there are three lags of Y in the equation:

• The first observation ($S$) uses the actual values for all three lags, $y_{S-3}$, $y_{S-2}$, and $y_{S-1}$.

• The second observation ($S+1$) uses actual values for $y_{S-2}$ and $y_{S-1}$, and the forecasted value $\hat{y}_S$ of the first lag of $y_{S+1}$.

• The third observation ($S+2$) will use the actual value for $y_{S-1}$, and forecasted values $\hat{y}_{S+1}$ and $\hat{y}_S$ for the first and second lags of $y_{S+2}$.

• All subsequent observations will use the forecasted values for all three lags.

The selection of the start of the forecast sample is very important for dynamic forecasting. The dynamic forecasts are true multi-step forecasts (from the start of the forecast sample), since they use the recursively computed forecast of the lagged value of the dependent variable. These forecasts may be interpreted as the forecasts for subsequent periods that would be computed using information available at the start of the forecast sample.

Dynamic forecasting requires that data for the exogenous variables be available for every observation in the forecast sample, and that values for any lagged dependent variables be observed at the start of the forecast sample (in our example, yS − 1 , but more generally, any lags of y ). If necessary, the forecast sample will be adjusted.
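The recursion in (18.6) and (18.7), generalized to several lags, can be sketched in Python as follows. This is a simplified illustration, not EViews code: it uses one exogenous regressor instead of two, hypothetical coefficients and data, and it raises an error where EViews would adjust the forecast sample. A one-step ahead (static) version that always uses actual lagged values is included only for contrast.

```python
def dynamic_forecast(const, x_coef, lag_coefs, x, y, start, end):
    """Multi-step forecast: lags inside the forecast sample use earlier forecasts."""
    if start < len(lag_coefs):
        raise ValueError("not enough pre-sample values of y for the lags")
    path = list(y[:start])            # actual values before the forecast sample
    for t in range(start, end + 1):
        lag_part = sum(c * path[t - j] for j, c in enumerate(lag_coefs, start=1))
        path.append(const + x_coef * x[t] + lag_part)
    return path[start:]

def static_forecast(const, x_coef, lag_coefs, x, y, start, end):
    """One-step ahead forecasts: every lag uses the actual value of y."""
    return [
        const + x_coef * x[t]
        + sum(c * y[t - j] for j, c in enumerate(lag_coefs, start=1))
        for t in range(start, end + 1)
    ]

# Hypothetical data and coefficients; the forecast sample is observations 2..4.
x = [0.0, 2.0, 2.0, 2.0, 2.0]
y = [2.0, 3.0, 4.0, 3.0, 5.0]

dyn = dynamic_forecast(1.0, 0.5, [0.5], x, y, 2, 4)
sta = static_forecast(1.0, 0.5, [0.5], x, y, 2, 4)
print(dyn, sta)
```

The two forecasts agree for the first observation in the forecast sample, where both use the actual lagged value, and diverge afterwards because the dynamic forecast feeds its own earlier forecasts back in as lags.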