- •brief contents

- •contents

- •preface

- •acknowledgments

- •about this book

- •What’s new in the second edition

- •Who should read this book

- •Roadmap

- •Advice for data miners

- •Code examples

- •Code conventions

- •Author Online

- •About the author

- •about the cover illustration

- •1 Introduction to R

- •1.2 Obtaining and installing R

- •1.3 Working with R

- •1.3.1 Getting started

- •1.3.2 Getting help

- •1.3.3 The workspace

- •1.3.4 Input and output

- •1.4 Packages

- •1.4.1 What are packages?

- •1.4.2 Installing a package

- •1.4.3 Loading a package

- •1.4.4 Learning about a package

- •1.5 Batch processing

- •1.6 Using output as input: reusing results

- •1.7 Working with large datasets

- •1.8 Working through an example

- •1.9 Summary

- •2 Creating a dataset

- •2.1 Understanding datasets

- •2.2 Data structures

- •2.2.1 Vectors

- •2.2.2 Matrices

- •2.2.3 Arrays

- •2.2.4 Data frames

- •2.2.5 Factors

- •2.2.6 Lists

- •2.3 Data input

- •2.3.1 Entering data from the keyboard

- •2.3.2 Importing data from a delimited text file

- •2.3.3 Importing data from Excel

- •2.3.4 Importing data from XML

- •2.3.5 Importing data from the web

- •2.3.6 Importing data from SPSS

- •2.3.7 Importing data from SAS

- •2.3.8 Importing data from Stata

- •2.3.9 Importing data from NetCDF

- •2.3.10 Importing data from HDF5

- •2.3.11 Accessing database management systems (DBMSs)

- •2.3.12 Importing data via Stat/Transfer

- •2.4 Annotating datasets

- •2.4.1 Variable labels

- •2.4.2 Value labels

- •2.5 Useful functions for working with data objects

- •2.6 Summary

- •3 Getting started with graphs

- •3.1 Working with graphs

- •3.2 A simple example

- •3.3 Graphical parameters

- •3.3.1 Symbols and lines

- •3.3.2 Colors

- •3.3.3 Text characteristics

- •3.3.4 Graph and margin dimensions

- •3.4 Adding text, customized axes, and legends

- •3.4.1 Titles

- •3.4.2 Axes

- •3.4.3 Reference lines

- •3.4.4 Legend

- •3.4.5 Text annotations

- •3.4.6 Math annotations

- •3.5 Combining graphs

- •3.5.1 Creating a figure arrangement with fine control

- •3.6 Summary

- •4 Basic data management

- •4.1 A working example

- •4.2 Creating new variables

- •4.3 Recoding variables

- •4.4 Renaming variables

- •4.5 Missing values

- •4.5.1 Recoding values to missing

- •4.5.2 Excluding missing values from analyses

- •4.6 Date values

- •4.6.1 Converting dates to character variables

- •4.6.2 Going further

- •4.7 Type conversions

- •4.8 Sorting data

- •4.9 Merging datasets

- •4.9.1 Adding columns to a data frame

- •4.9.2 Adding rows to a data frame

- •4.10 Subsetting datasets

- •4.10.1 Selecting (keeping) variables

- •4.10.2 Excluding (dropping) variables

- •4.10.3 Selecting observations

- •4.10.4 The subset() function

- •4.10.5 Random samples

- •4.11 Using SQL statements to manipulate data frames

- •4.12 Summary

- •5 Advanced data management

- •5.2 Numerical and character functions

- •5.2.1 Mathematical functions

- •5.2.2 Statistical functions

- •5.2.3 Probability functions

- •5.2.4 Character functions

- •5.2.5 Other useful functions

- •5.2.6 Applying functions to matrices and data frames

- •5.3 A solution for the data-management challenge

- •5.4 Control flow

- •5.4.1 Repetition and looping

- •5.4.2 Conditional execution

- •5.5 User-written functions

- •5.6 Aggregation and reshaping

- •5.6.1 Transpose

- •5.6.2 Aggregating data

- •5.6.3 The reshape2 package

- •5.7 Summary

- •6 Basic graphs

- •6.1 Bar plots

- •6.1.1 Simple bar plots

- •6.1.2 Stacked and grouped bar plots

- •6.1.3 Mean bar plots

- •6.1.4 Tweaking bar plots

- •6.1.5 Spinograms

- •6.2 Pie charts

- •6.3 Histograms

- •6.4 Kernel density plots

- •6.5 Box plots

- •6.5.1 Using parallel box plots to compare groups

- •6.5.2 Violin plots

- •6.6 Dot plots

- •6.7 Summary

- •7 Basic statistics

- •7.1 Descriptive statistics

- •7.1.1 A menagerie of methods

- •7.1.2 Even more methods

- •7.1.3 Descriptive statistics by group

- •7.1.4 Additional methods by group

- •7.1.5 Visualizing results

- •7.2 Frequency and contingency tables

- •7.2.1 Generating frequency tables

- •7.2.2 Tests of independence

- •7.2.3 Measures of association

- •7.2.4 Visualizing results

- •7.3 Correlations

- •7.3.1 Types of correlations

- •7.3.2 Testing correlations for significance

- •7.3.3 Visualizing correlations

- •7.4 T-tests

- •7.4.3 When there are more than two groups

- •7.5 Nonparametric tests of group differences

- •7.5.1 Comparing two groups

- •7.5.2 Comparing more than two groups

- •7.6 Visualizing group differences

- •7.7 Summary

- •8 Regression

- •8.1 The many faces of regression

- •8.1.1 Scenarios for using OLS regression

- •8.1.2 What you need to know

- •8.2 OLS regression

- •8.2.1 Fitting regression models with lm()

- •8.2.2 Simple linear regression

- •8.2.3 Polynomial regression

- •8.2.4 Multiple linear regression

- •8.2.5 Multiple linear regression with interactions

- •8.3 Regression diagnostics

- •8.3.1 A typical approach

- •8.3.2 An enhanced approach

- •8.3.3 Global validation of linear model assumption

- •8.3.4 Multicollinearity

- •8.4 Unusual observations

- •8.4.1 Outliers

- •8.4.3 Influential observations

- •8.5 Corrective measures

- •8.5.1 Deleting observations

- •8.5.2 Transforming variables

- •8.5.3 Adding or deleting variables

- •8.5.4 Trying a different approach

- •8.6 Selecting the “best” regression model

- •8.6.1 Comparing models

- •8.6.2 Variable selection

- •8.7 Taking the analysis further

- •8.7.1 Cross-validation

- •8.7.2 Relative importance

- •8.8 Summary

- •9 Analysis of variance

- •9.1 A crash course on terminology

- •9.2 Fitting ANOVA models

- •9.2.1 The aov() function

- •9.2.2 The order of formula terms

- •9.3.1 Multiple comparisons

- •9.3.2 Assessing test assumptions

- •9.4 One-way ANCOVA

- •9.4.1 Assessing test assumptions

- •9.4.2 Visualizing the results

- •9.6 Repeated measures ANOVA

- •9.7 Multivariate analysis of variance (MANOVA)

- •9.7.1 Assessing test assumptions

- •9.7.2 Robust MANOVA

- •9.8 ANOVA as regression

- •9.9 Summary

- •10 Power analysis

- •10.1 A quick review of hypothesis testing

- •10.2 Implementing power analysis with the pwr package

- •10.2.1 t-tests

- •10.2.2 ANOVA

- •10.2.3 Correlations

- •10.2.4 Linear models

- •10.2.5 Tests of proportions

- •10.2.7 Choosing an appropriate effect size in novel situations

- •10.3 Creating power analysis plots

- •10.4 Other packages

- •10.5 Summary

- •11 Intermediate graphs

- •11.1 Scatter plots

- •11.1.3 3D scatter plots

- •11.1.4 Spinning 3D scatter plots

- •11.1.5 Bubble plots

- •11.2 Line charts

- •11.3 Corrgrams

- •11.4 Mosaic plots

- •11.5 Summary

- •12 Resampling statistics and bootstrapping

- •12.1 Permutation tests

- •12.2 Permutation tests with the coin package

- •12.2.2 Independence in contingency tables

- •12.2.3 Independence between numeric variables

- •12.2.5 Going further

- •12.3 Permutation tests with the lmPerm package

- •12.3.1 Simple and polynomial regression

- •12.3.2 Multiple regression

- •12.4 Additional comments on permutation tests

- •12.5 Bootstrapping

- •12.6 Bootstrapping with the boot package

- •12.6.1 Bootstrapping a single statistic

- •12.6.2 Bootstrapping several statistics

- •12.7 Summary

- •13 Generalized linear models

- •13.1 Generalized linear models and the glm() function

- •13.1.1 The glm() function

- •13.1.2 Supporting functions

- •13.1.3 Model fit and regression diagnostics

- •13.2 Logistic regression

- •13.2.1 Interpreting the model parameters

- •13.2.2 Assessing the impact of predictors on the probability of an outcome

- •13.2.3 Overdispersion

- •13.2.4 Extensions

- •13.3 Poisson regression

- •13.3.1 Interpreting the model parameters

- •13.3.2 Overdispersion

- •13.3.3 Extensions

- •13.4 Summary

- •14 Principal components and factor analysis

- •14.1 Principal components and factor analysis in R

- •14.2 Principal components

- •14.2.1 Selecting the number of components to extract

- •14.2.2 Extracting principal components

- •14.2.3 Rotating principal components

- •14.2.4 Obtaining principal components scores

- •14.3 Exploratory factor analysis

- •14.3.1 Deciding how many common factors to extract

- •14.3.2 Extracting common factors

- •14.3.3 Rotating factors

- •14.3.4 Factor scores

- •14.4 Other latent variable models

- •14.5 Summary

- •15 Time series

- •15.1 Creating a time-series object in R

- •15.2 Smoothing and seasonal decomposition

- •15.2.1 Smoothing with simple moving averages

- •15.2.2 Seasonal decomposition

- •15.3 Exponential forecasting models

- •15.3.1 Simple exponential smoothing

- •15.3.3 The ets() function and automated forecasting

- •15.4 ARIMA forecasting models

- •15.4.1 Prerequisite concepts

- •15.4.2 ARMA and ARIMA models

- •15.4.3 Automated ARIMA forecasting

- •15.5 Going further

- •15.6 Summary

- •16 Cluster analysis

- •16.1 Common steps in cluster analysis

- •16.2 Calculating distances

- •16.3 Hierarchical cluster analysis

- •16.4 Partitioning cluster analysis

- •16.4.2 Partitioning around medoids

- •16.5 Avoiding nonexistent clusters

- •16.6 Summary

- •17 Classification

- •17.1 Preparing the data

- •17.2 Logistic regression

- •17.3 Decision trees

- •17.3.1 Classical decision trees

- •17.3.2 Conditional inference trees

- •17.4 Random forests

- •17.5 Support vector machines

- •17.5.1 Tuning an SVM

- •17.6 Choosing a best predictive solution

- •17.7 Using the rattle package for data mining

- •17.8 Summary

- •18 Advanced methods for missing data

- •18.1 Steps in dealing with missing data

- •18.2 Identifying missing values

- •18.3 Exploring missing-values patterns

- •18.3.1 Tabulating missing values

- •18.3.2 Exploring missing data visually

- •18.3.3 Using correlations to explore missing values

- •18.4 Understanding the sources and impact of missing data

- •18.5 Rational approaches for dealing with incomplete data

- •18.6 Complete-case analysis (listwise deletion)

- •18.7 Multiple imputation

- •18.8 Other approaches to missing data

- •18.8.1 Pairwise deletion

- •18.8.2 Simple (nonstochastic) imputation

- •18.9 Summary

- •19 Advanced graphics with ggplot2

- •19.1 The four graphics systems in R

- •19.2 An introduction to the ggplot2 package

- •19.3 Specifying the plot type with geoms

- •19.4 Grouping

- •19.5 Faceting

- •19.6 Adding smoothed lines

- •19.7 Modifying the appearance of ggplot2 graphs

- •19.7.1 Axes

- •19.7.2 Legends

- •19.7.3 Scales

- •19.7.4 Themes

- •19.7.5 Multiple graphs per page

- •19.8 Saving graphs

- •19.9 Summary

- •20 Advanced programming

- •20.1 A review of the language

- •20.1.1 Data types

- •20.1.2 Control structures

- •20.1.3 Creating functions

- •20.2 Working with environments

- •20.3 Object-oriented programming

- •20.3.1 Generic functions

- •20.3.2 Limitations of the S3 model

- •20.4 Writing efficient code

- •20.5 Debugging

- •20.5.1 Common sources of errors

- •20.5.2 Debugging tools

- •20.5.3 Session options that support debugging

- •20.6 Going further

- •20.7 Summary

- •21 Creating a package

- •21.1 Nonparametric analysis and the npar package

- •21.1.1 Comparing groups with the npar package

- •21.2 Developing the package

- •21.2.1 Computing the statistics

- •21.2.2 Printing the results

- •21.2.3 Summarizing the results

- •21.2.4 Plotting the results

- •21.2.5 Adding sample data to the package

- •21.3 Creating the package documentation

- •21.4 Building the package

- •21.5 Going further

- •21.6 Summary

- •22 Creating dynamic reports

- •22.1 A template approach to reports

- •22.2 Creating dynamic reports with R and Markdown

- •22.3 Creating dynamic reports with R and LaTeX

- •22.4 Creating dynamic reports with R and Open Document

- •22.5 Creating dynamic reports with R and Microsoft Word

- •22.6 Summary

- •afterword Into the rabbit hole

- •appendix A Graphical user interfaces

- •appendix B Customizing the startup environment

- •appendix C Exporting data from R

- •Delimited text file

- •Excel spreadsheet

- •Statistical applications

- •appendix D Matrix algebra in R

- •appendix E Packages used in this book

- •appendix F Working with large datasets

- •F.1 Efficient programming

- •F.2 Storing data outside of RAM

- •F.3 Analytic packages for out-of-memory data

- •F.4 Comprehensive solutions for working with enormous datasets

- •appendix G Updating an R installation

- •G.1 Automated installation (Windows only)

- •G.2 Manual installation (Windows and Mac OS X)

- •G.3 Updating an R installation (Linux)

- •references

- •index

- •Symbols

- •Numerics

- •23.1 The lattice package

- •23.2 Conditioning variables

- •23.3 Panel functions

- •23.4 Grouping variables

- •23.5 Graphic parameters

- •23.6 Customizing plot strips

- •23.7 Page arrangement

- •23.8 Going further

CHAPTER 10 Power analysis

where ρ is the population correlation between these two psychological variables. You’ve set your significance level to 0.05, and you want to be 90% confident that you’ll reject H0 if it’s false. How many observations will you need? This code provides the answer:

> pwr.r.test(r=.25, sig.level=.05, power=.90, alternative="greater")

approximate correlation power calculation (arctangh transformation)

n = 134

r = 0.25

sig.level = 0.05

power = 0.9

alternative = greater

Thus, you need to assess depression and loneliness in 134 participants in order to be 90% confident that you’ll reject the null hypothesis if it’s false.

10.2.4 Linear models

For linear models (such as multiple regression), the pwr.f2.test() function can be used to carry out a power analysis. The format is

pwr.f2.test(u=, v=, f2=, sig.level=, power=)

where u and v are the numerator and denominator degrees of freedom and f2 is the effect size.

$$f^2 = \frac{R^2}{1 - R^2}$$

where $R^2$ = population squared multiple correlation, or

$$f^2 = \frac{R^2_{AB} - R^2_A}{1 - R^2_{AB}}$$

where $R^2_A$ = variance accounted for in the population by variable set A, and $R^2_{AB}$ = variance accounted for in the population by variable set A and B together.

The first formula for f2 is appropriate when you’re evaluating the impact of a set of predictors on an outcome. The second formula is appropriate when you’re evaluating the impact of one set of predictors above and beyond a second set of predictors (or covariates).

Let’s say you’re interested in whether a boss’s leadership style impacts workers’ satisfaction above and beyond the salary and perks associated with the job. Leadership style is assessed by four variables, and salary and perks are associated with three variables. Past experience suggests that salary and perks account for roughly 30% of the variance in worker satisfaction. From a practical standpoint, it would be interesting if leadership style accounted for at least 5% above this figure. Assuming a significance level of 0.05, how many subjects would be needed to identify such a contribution with 90% confidence?

Here, sig.level=0.05, power=0.90, u=3 (total number of predictors minus the number of predictors in set B), and the effect size is f2 = (.35 – .30)/(1 – .35) = 0.0769. Entering this into the function yields the following:

> pwr.f2.test(u=3, f2=0.0769, sig.level=0.05, power=0.90)

Multiple regression power calculation

u = 3

v = 184.2426

f2 = 0.0769

sig.level = 0.05

power = 0.9

In multiple regression, the denominator degrees of freedom equals N – k – 1, where N is the number of observations and k is the number of predictors. In this case, N – 7 – 1 = 185, which means the required sample size is N = 185 + 7 + 1 = 193.
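The arithmetic in this example can be checked with a few lines of base R. The sketch below is not part of the original listing: the variable names (`r2_a`, `r2_ab`, `n_required`, and so on) are illustrative, and the final cross-check assumes the usual noncentral F formulation with noncentrality parameter f2 × (u + v + 1), which should agree closely with pwr.f2.test().

```r
# Effect size for set B above and beyond set A (values from the text)
r2_a  <- 0.30            # variance explained by salary and perks alone
r2_ab <- 0.35            # variance explained once leadership style is added
f2 <- (r2_ab - r2_a) / (1 - r2_ab)
round(f2, 4)             # 0.0769

# Convert the denominator degrees of freedom into a required sample size:
# v = N - k - 1, so N = v + k + 1 (with v rounded up to a whole number)
u <- 3                   # numerator df used in the text
v <- 184.2426            # denominator df copied from the output above
k <- 7                   # total number of predictors (4 + 3)
n_required <- ceiling(v) + k + 1
n_required               # 193

# Assumed cross-check: power implied by these values under a noncentral F
ncp <- round(f2, 4) * (u + v + 1)
power_check <- pf(qf(0.95, u, v), u, v, ncp = ncp, lower.tail = FALSE)
power_check              # should be close to the requested 0.90
```

Rounding v up before adding k + 1 keeps the design on the safe side, because a fractional observation can't be collected.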

10.2.5 Tests of proportions

The pwr.2p.test() function can be used to perform a power analysis when comparing two proportions. The format is

pwr.2p.test(h=, n=, sig.level=, power=)

where h is the effect size and n is the common sample size in each group. The effect size h is defined as

$$h = 2 \arcsin\left(\sqrt{p_1}\right) - 2 \arcsin\left(\sqrt{p_2}\right)$$

and can be calculated with the function ES.h(p1, p2). For unequal ns, the desired function is

pwr.2p2n.test(h=, n1=, n2=, sig.level=, power=)

The alternative= option can be used to specify a two-tailed ("two.sided") or one-tailed ("less" or "greater") test. A two-tailed test is the default.
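As a quick check on the definition of h, the effect size can be computed directly from its formula in base R. This is only a sketch: `es_h` is a hypothetical helper name used for illustration, and it is meant to mirror the value that ES.h() returns.

```r
# Hypothetical helper: arcsine-transformed difference between two proportions
es_h <- function(p1, p2) {
  2 * asin(sqrt(p1)) - 2 * asin(sqrt(p2))
}

h <- es_h(0.65, 0.60)
h                         # approximately 0.1033

# Swapping the arguments flips the sign, which matters for one-tailed tests
h_reversed <- es_h(0.60, 0.65)
h_reversed
```

Because the sign of h depends on the argument order, the direction given in alternative= has to match the order in which the two proportions are supplied.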

Let’s say that you suspect that a popular medication relieves symptoms in 60% of users. A new (and more expensive) medication will be marketed if it improves symptoms in 65% of users. How many participants will you need to include in a study comparing these two medications if you want to detect a difference this large?

Assume that you want to be 90% confident in a conclusion that the new drug is better and 95% confident that you won’t reach this conclusion erroneously. You’ll use a one-tailed test because you’re only interested in assessing whether the new drug is better than the standard. The code looks like this:

> pwr.2p.test(h=ES.h(.65, .6), sig.level=.05, power=.9, alternative="greater")

Difference of proportion power calculation for binomial distribution (arcsine transformation)

h = 0.1033347

n = 1604.007

sig.level = 0.05

power = 0.9

alternative = greater

NOTE: same sample sizes

Based on these results, you’ll need to conduct a study with 1,605 individuals receiving the new drug and 1,605 receiving the existing drug in order to meet the criteria.
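The sample size reported above can be approximated by hand. The sketch below assumes the standard normal approximation for the arcsine-transformed difference, under which the power of a one-tailed test is `pnorm(h * sqrt(n / 2) - z_alpha)`. The variable names are illustrative, and the code is not taken from the pwr source.

```r
alpha <- 0.05
power <- 0.90
h <- 2 * asin(sqrt(0.65)) - 2 * asin(sqrt(0.60))   # effect size

z_alpha <- qnorm(1 - alpha)    # one-tailed critical value
z_beta  <- qnorm(power)        # quantile matching the desired power

# Solve power = pnorm(h * sqrt(n / 2) - z_alpha) for n
n_per_group <- 2 * ((z_alpha + z_beta) / h)^2
n_per_group                    # about 1604
ceiling(n_per_group)           # 1605 per group
```

The formula makes the cost of small effects visible: n grows with 1/h², so halving the effect size roughly quadruples the number of participants per group.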

10.2.6 Chi-square tests

Chi-square tests are often used to assess the relationship between two categorical variables. The null hypothesis is typically that the variables are independent versus a research hypothesis that they aren’t. The pwr.chisq.test() function can be used to evaluate the power, effect size, or requisite sample size when employing a chi-square test. The format is

pwr.chisq.test(w=, N=, df=, sig.level=, power=)

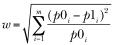

where w is the effect size, N is the total sample size, and df is the degrees of freedom. Here, effect size w is defined as

where p0i = cell probability in ith cell under H0 p1i = cell probability in ith cell under H1

The summation goes from 1 to m, where m is the number of cells in the contingency table. The function ES.w2(P) can be used to calculate the effect size corresponding to the alternative hypothesis in a two-way contingency table. Here, P is a hypothesized two-way probability table.

As a simple example, let’s assume that you’re looking at the relationship between ethnicity and promotion. You anticipate that 70% of your sample will be Caucasian, 10% will be African-American, and 20% will be Hispanic. Further, you believe that 60% of Caucasians tend to be promoted, compared with 30% for African-Americans and 50% for Hispanics. Your research hypothesis is that the probability of promotion follows the values in table 10.2.

Table 10.2 Proportion of individuals expected to be promoted based on the research hypothesis

| Ethnicity | Promoted | Not promoted |
|---|---|---|
| Caucasian | 0.42 | 0.28 |
| African-American | 0.03 | 0.07 |
| Hispanic | 0.10 | 0.10 |

For example, you expect that 42% of the population will be promoted Caucasians (.42 = .70 × .60) and 7% of the population will be nonpromoted African-Americans (.07 = .10 × .70). Let’s assume a significance level of 0.05 and that the desired power level is 0.90. The degrees of freedom in a two-way contingency table are (r–1)×(c–1), where r is the number of rows and c is the number of columns. You can calculate the hypothesized effect size with the following code:

> prob <- matrix(c(.42, .28, .03, .07, .10, .10), byrow=TRUE, nrow=3)

> ES.w2(prob)

[1] 0.1853198
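To see what ES.w2() is doing, the same effect size can be computed from the definition of w. In this sketch the null-hypothesis cell probabilities are taken to be the products of the row and column margins (the independence model). That is an assumption about how the function derives the H0 probabilities, and the variable names are illustrative.

```r
prob <- matrix(c(.42, .28, .03, .07, .10, .10), byrow = TRUE, nrow = 3)

# Expected cell probabilities under independence: row margin x column margin
p0 <- outer(rowSums(prob), colSums(prob))

# Effect size w: square root of the summed squared deviations, scaled by p0
w <- sqrt(sum((prob - p0)^2 / p0))
w                               # approximately 0.1853
```

Most of w in this example comes from the African-American row, where the hypothesized promotion rate (30%) is furthest from the overall rate of 55%.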

Using this information, you can calculate the necessary sample size like this:

> pwr.chisq.test(w=.1853, df=2, sig.level=.05, power=.9)

Chi squared power calculation

w = 0.1853

N = 368.5317

df = 2

sig.level = 0.05

power = 0.9

NOTE: N is the number of observations

The results suggest that a study with 369 participants will be adequate to detect a relationship between ethnicity and promotion given the effect size, power, and significance level specified.
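The sample size can also be recovered without pwr by searching for the N at which a noncentral chi-square distribution gives the target power. This is a sketch under the assumption that the noncentrality parameter is N × w²; `chisq_power` is a hypothetical helper name.

```r
# Hypothetical helper: power of a chi-square test for a given total N
chisq_power <- function(N, w, df, alpha = 0.05) {
  crit <- qchisq(1 - alpha, df)
  pchisq(crit, df, ncp = N * w^2, lower.tail = FALSE)
}

# Find the N that yields 90% power for w = 0.1853 and df = 2
sol <- uniroot(function(N) chisq_power(N, w = 0.1853, df = 2) - 0.90,
               interval = c(10, 5000), tol = 1e-8)
n_total <- sol$root
n_total                         # close to the N reported above
ceiling(n_total)                # 369
```

The same helper can be called with a fixed N to answer the reverse question: how much power does a study of a given size have?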

10.2.7 Choosing an appropriate effect size in novel situations

In power analysis, the expected effect size is the most difficult parameter to determine. It typically requires that you have experience with the subject matter and the measures employed. For example, the data from past studies can be used to calculate effect sizes, which can then be used to plan future studies.

But what can you do when the research situation is completely novel and you have no past experience to call upon? In the area of behavioral sciences, Cohen (1988) attempted to provide benchmarks for “small,” “medium,” and “large” effect sizes for various statistical tests. These guidelines are provided in table 10.3.

Table 10.3 Cohen’s effect size benchmarks

| Statistical method | Effect size measure | Suggested guideline: small | Suggested guideline: medium | Suggested guideline: large |
|---|---|---|---|---|
| t-test | d | 0.20 | 0.50 | 0.80 |
| ANOVA | f | 0.10 | 0.25 | 0.40 |
| Linear models | f2 | 0.02 | 0.15 | 0.35 |
| Test of proportions | h | 0.20 | 0.50 | 0.80 |
| Chi-square | w | 0.10 | 0.30 | 0.50 |

When you have no idea what effect size may be present, this table may provide some guidance. For example, what’s the probability of rejecting a false null hypothesis (that is, finding a real effect) if you’re using a one-way ANOVA with 5 groups, 25 subjects per group, and a significance level of 0.05?

Using the pwr.anova.test() function and the suggestions in the f row of table 10.3, the power would be 0.118 for detecting a small effect, 0.574 for detecting a moderate effect, and 0.957 for detecting a large effect. Given the sample-size limitations, you’re only likely to find an effect if it’s large.
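These power values can be reproduced, at least approximately, with the noncentral F distribution in base R. The sketch assumes a noncentrality parameter of k × n × f² with k − 1 and k(n − 1) degrees of freedom; `anova_power` is a hypothetical helper, not a pwr function.

```r
# Hypothetical helper: power of a balanced one-way ANOVA
# k = number of groups, n = subjects per group, f = Cohen's f
anova_power <- function(k, n, f, alpha = 0.05) {
  df1 <- k - 1
  df2 <- k * (n - 1)
  crit <- qf(1 - alpha, df1, df2)
  pf(crit, df1, df2, ncp = k * n * f^2, lower.tail = FALSE)
}

# Cohen's benchmarks for f from table 10.3
effects <- c(small = 0.10, medium = 0.25, large = 0.40)
powers <- sapply(effects, function(f) anova_power(k = 5, n = 25, f = f))
round(powers, 3)
```

Evaluating all three benchmarks in one call makes it easy to rerun the comparison for a different number of groups or subjects per group.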

It’s important to keep in mind that Cohen’s benchmarks are just general suggestions derived from a range of social research studies and may not apply to your particular field of research. An alternative is to vary the study parameters and note the impact on such things as sample size and power. For example, again assume that you want to compare five groups using a one-way ANOVA and a 0.05 significance level. The following listing computes the sample sizes needed to detect a range of effect sizes and plots the results in figure 10.2.

Listing 10.1 Sample sizes for detecting significant effects in a one-way ANOVA

library(pwr)

# Effect sizes to evaluate, from f = 0.1 to f = 0.5

es <- seq(.1, .5, .01)

nes <- length(es)

# Required sample size per group for each effect size

samsize <- NULL

for (i in 1:nes){

  result <- pwr.anova.test(k=5, f=es[i], sig.level=.05, power=.9)

  samsize[i] <- ceiling(result$n)

}

# Plot effect size against the required sample size

plot(samsize, es, type="l", lwd=2, col="red",

  ylab="Effect Size",

  xlab="Sample Size (per cell)",

  main="One Way ANOVA with Power=.90 and Alpha=.05")

Figure 10.2 Sample size needed to detect various effect sizes in a one-way ANOVA with five groups (assuming a power of 0.90 and significance level of 0.05)

[Line plot titled "One Way ANOVA with Power=.90 and Alpha=.05". x-axis: Sample Size (per cell), ticks from 50 to 300; y-axis: Effect Size, ticks from 0.1 to 0.5.]
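A variant of listing 10.1 that avoids both the explicit index loop and the pwr dependency is sketched below. It assumes the noncentral F formulation of one-way ANOVA power (noncentrality k × n × f²) and finds the smallest whole number of subjects per group by direct search. `anova_power` and `anova_n` are hypothetical helpers, and a coarser grid of effect sizes is used to keep the example short.

```r
# Hypothetical helper: power of a balanced one-way ANOVA
anova_power <- function(n, k, f, alpha = 0.05) {
  df1 <- k - 1
  df2 <- k * (n - 1)
  crit <- qf(1 - alpha, df1, df2)
  pf(crit, df1, df2, ncp = k * n * f^2, lower.tail = FALSE)
}

# Hypothetical helper: smallest n per group that reaches the target power
anova_n <- function(k, f, power = 0.90, alpha = 0.05) {
  n <- 2
  while (anova_power(n, k, f, alpha) < power) {
    n <- n + 1
  }
  n
}

es <- seq(0.1, 0.5, by = 0.1)
samsize <- sapply(es, function(f) anova_n(k = 5, f = f))
data.frame(effect_size = es, n_per_group = samsize)
```

The resulting vectors can be passed to plot() in the same way as in the listing. The integer search is slower than a root finder but makes the rounding-up step explicit.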