

CHAPTER 14 Principal components and factor analysis

In the remainder of this chapter, we’ll carefully consider each of the steps, starting with PCA. At the end of the chapter, you’ll find a detailed flow chart of the possible steps in PCA/EFA (figure 14.7). The chart will make more sense once you’ve read through the intervening material.

14.2 Principal components

The goal of PCA is to replace a large number of correlated variables with a smaller number of uncorrelated variables while capturing as much information in the original variables as possible. These derived variables, called principal components, are linear combinations of the observed variables. Specifically, the first principal component

$$PC_1 = a_1X_1 + a_2X_2 + \cdots + a_kX_k$$

is the weighted combination of the k observed variables that accounts for the most variance in the original set of variables. The second principal component is the linear combination that accounts for the most variance in the original variables, under the constraint that it’s orthogonal (uncorrelated) to the first principal component. Each subsequent component maximizes the variance accounted for, while at the same time remaining uncorrelated with all previous components. Theoretically, you can extract as many principal components as there are variables. But from a practical viewpoint, you hope that you can approximate the full set of variables with a much smaller set of components. Let’s look at a simple example.

The dataset USJudgeRatings contains lawyers’ ratings of state judges in the US Superior Court. The data frame contains 43 observations on 12 numeric variables. The variables are listed in table 14.2.

Table 14.2 Variables in the USJudgeRatings dataset

| Variable | Description | Variable | Description |
|---|---|---|---|
| CONT | Number of contacts of lawyer with judge | PREP | Preparation for trial |
| INTG | Judicial integrity | FAMI | Familiarity with law |
| DMNR | Demeanor | ORAL | Sound oral rulings |
| DILG | Diligence | WRIT | Sound written rulings |
| CFMG | Case flow managing | PHYS | Physical ability |
| DECI | Prompt decisions | RTEN | Worthy of retention |

From a practical point of view, can you summarize the 11 evaluative ratings (INTG to RTEN) with a smaller number of composite variables? If so, how many will you need, and how will they be defined? Because the goal is to simplify the data, you’ll approach this problem using PCA. The data are in raw score format, and there are no missing values. Therefore, your next step is deciding how many principal components you’ll need.
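Before choosing how many components to keep, it can help to see the earlier definition of a principal component in action. The following is a minimal base-R sketch (not the psych-based approach used in this chapter) that builds every component of the 11 ratings as a weighted sum of the standardized variables. The object names `ratings`, `z`, `e`, `scores`, and `max_offdiag` are illustrative assumptions.

```r
# Base R only; USJudgeRatings ships with the datasets package.
ratings <- USJudgeRatings[, -1]      # drop CONT, keep the 11 ratings
z <- scale(ratings)                  # standardize each variable
e <- eigen(cor(ratings))             # eigenvalues and weight vectors

# Each column of e$vectors holds the weights a1, ..., ak for one component,
# so the component scores are weighted sums of the standardized variables.
scores <- z %*% e$vectors
colnames(scores) <- paste0("PC", seq_len(ncol(scores)))

# The variance of each component equals the corresponding eigenvalue.
round(apply(scores, 2, var), 2)
round(e$values, 2)

# The components are mutually uncorrelated: the largest off-diagonal
# correlation is zero up to floating-point error.
score_cor <- cor(scores)
max_offdiag <- max(abs(score_cor[upper.tri(score_cor)]))
max_offdiag
```

The sign of each weight vector returned by eigen() is arbitrary, so a component may come out reversed relative to other software; its variance and its lack of correlation with the other components are unaffected.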


14.2.1 Selecting the number of components to extract

Several criteria are available for deciding how many components to retain in a PCA. They include

■ Basing the number of components on prior experience and theory

■ Selecting the number of components needed to account for some threshold cumulative amount of variance in the variables (for example, 80%)

■ Selecting the number of components to retain by examining the eigenvalues of the k × k correlation matrix among the variables

The most common approach is based on the eigenvalues. Each component is associated with an eigenvalue of the correlation matrix. The first PC is associated with the largest eigenvalue, the second PC with the second-largest eigenvalue, and so on. The Kaiser–Harris criterion suggests retaining components with eigenvalues greater than 1. Components with eigenvalues less than 1 explain less variance than contained in a single variable. In the Cattell Scree test, the eigenvalues are plotted against their component numbers. Such plots typically demonstrate a bend or elbow, and the components above this sharp break are retained. Finally, you can run simulations, extracting eigenvalues from random data matrices of the same size as the original matrix. If an eigenvalue based on real data is larger than the average corresponding eigenvalues from a set of random data matrices, that component is retained. The approach is called parallel analysis (see Hayton, Allen, and Scarpello, 2004, for more details).
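To make these eigenvalue rules concrete, here is a rough base-R sketch applied to the 11 evaluative ratings in USJudgeRatings. It is an illustration under stated assumptions rather than a substitute for a dedicated routine: the random matrices are drawn from a standard normal distribution, the seed and all object names are arbitrary, and the 80% threshold is simply the example value mentioned in the list above. The scree test isn't automated here because it relies on judging the elbow by eye.

```r
ratings <- USJudgeRatings[, -1]            # drop CONT
ev <- eigen(cor(ratings))$values           # observed eigenvalues, largest first

# Kaiser-Harris criterion: keep components with eigenvalues greater than 1
n_kaiser <- sum(ev > 1)

# Variance threshold: smallest number of components reaching 80%
cum_var <- cumsum(ev) / sum(ev)
n_80 <- which(cum_var >= 0.80)[1]

# Parallel analysis (sketch): average eigenvalues of 100 random
# normal data matrices with the same dimensions as the real data
set.seed(1234)                             # arbitrary seed for reproducibility
n <- nrow(ratings)
k <- ncol(ratings)
sim <- replicate(100, eigen(cor(matrix(rnorm(n * k), n, k)))$values)
mean_sim <- rowMeans(sim)

# Count the leading components whose observed eigenvalue beats the
# simulated average, stopping at the first one that does not
n_parallel <- sum(cumprod(ev > mean_sim))

c(kaiser = n_kaiser, threshold80 = n_80, parallel = n_parallel)
```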

You can assess all three eigenvalue criteria at the same time via the fa.parallel() function. For the 11 ratings (dropping the CONT variable), the necessary code is as follows:

library(psych)
fa.parallel(USJudgeRatings[,-1], fa="pc", n.iter=100,
            show.legend=FALSE, main="Scree plot with parallel analysis")

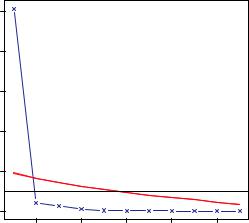

This code produces the graph shown in figure 14.2. The plot displays the scree test based on the observed eigenvalues (as straight-line segments and x’s), the mean eigenvalues derived from 100 random data matrices (as dashed lines), and the eigenvalues-greater-than-1 criterion (as a horizontal line at y=1).

Figure 14.2 Assessing the number of principal components to retain for the USJudgeRatings example. A scree plot (the line with x’s), the eigenvalues-greater-than-1 criterion (horizontal line), and parallel analysis with 100 simulations (dashed line) suggest retaining a single component.

[Plot title: Scree plot with parallel analysis. x-axis: Factor Number. y-axis: eigen values of principal components.]


All three criteria suggest that a single component is appropriate for summarizing this dataset. Your next step is to extract the principal component using the principal() function.

14.2.2 Extracting principal components

As indicated earlier, the principal() function performs a principal components analysis starting with either a raw data matrix or a correlation matrix. The format is

principal(r, nfactors=, rotate=, scores=)

where

■ r is a correlation matrix or a raw data matrix.

■ nfactors specifies the number of principal components to extract (1 by default).

■ rotate indicates the rotation to be applied (varimax by default; see section 14.2.3).

■ scores specifies whether to calculate principal-component scores (false by default).

To extract the first principal component, you can use the code in the following listing.

Listing 14.1 Principal components analysis of USJudgeRatings

> library(psych)
> pc <- principal(USJudgeRatings[,-1], nfactors=1)
> pc
Principal Components Analysis
Call: principal(r = USJudgeRatings[, -1], nfactors=1)
Standardized loadings based upon correlation matrix
      PC1   h2    u2
INTG 0.92 0.84 0.157
DMNR 0.91 0.83 0.166
DILG 0.97 0.94 0.061
CFMG 0.96 0.93 0.072
DECI 0.96 0.92 0.076
PREP 0.98 0.97 0.030
FAMI 0.98 0.95 0.047
ORAL 1.00 0.99 0.009
WRIT 0.99 0.98 0.020
PHYS 0.89 0.80 0.201
RTEN 0.99 0.97 0.028

                 PC1
SS loadings    10.13
Proportion Var  0.92

[... additional output omitted ...]

Here, you’re inputting the raw data without the CONT variable and specifying that one unrotated component should be extracted. (Rotation is explained in section 14.2.3.) Because PCA is performed on a correlation matrix, the raw data is automatically converted to a correlation matrix before the components are extracted.


The column labeled PC1 contains the component loadings, which are the correlations of the observed variables with the principal component(s). If you extracted more than one principal component, there would be columns for PC2, PC3, and so on. Component loadings are used to interpret the meaning of components. You can see that each variable correlates highly with the first component (PC1). It therefore appears to be a general evaluative dimension.

The column labeled h2 contains the component communalities—the amount of variance in each variable explained by the components. The u2 column contains the component uniquenesses—the amount of variance not accounted for by the components (or 1 – h2). For example, 80% of the variance in physical ability (PHYS) ratings is accounted for by the first PC, and 20% isn’t. PHYS is the variable least well represented by a one-component solution.

The row labeled SS loadings contains the eigenvalues associated with the components. The eigenvalues are the standardized variance associated with a particular component (in this case, the value for the first component is 10.13). Finally, the row labeled Proportion Var represents the proportion of variance accounted for by each component. Here you see that the first principal component accounts for 92% of the variance in the 11 variables.
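The quantities in this output can be approximated by hand, which may make their definitions easier to remember. The sketch below uses only base R and assumes a one-component solution. It is not how principal() is implemented internally, and the object names (`loading`, `h2`, `u2`, `ss_loadings`, `prop_var`) are illustrative.

```r
ratings <- USJudgeRatings[, -1]              # the 11 evaluative ratings
e <- eigen(cor(ratings))

# Loading on the first component = eigenvector weight * sqrt(eigenvalue)
loading <- e$vectors[, 1] * sqrt(e$values[1])
if (sum(loading) < 0) loading <- -loading    # eigenvector sign is arbitrary
names(loading) <- colnames(ratings)

h2 <- loading^2                              # communality (one component)
u2 <- 1 - h2                                 # uniqueness

round(cbind(PC1 = loading, h2 = h2, u2 = u2), 3)

# SS loadings is the sum of squared loadings, which equals the eigenvalue;
# dividing by the number of variables gives the proportion of variance.
ss_loadings <- sum(loading^2)
prop_var <- ss_loadings / ncol(ratings)
round(c(ss_loadings = ss_loadings, prop_var = prop_var), 2)

# Each loading is also the correlation between a variable and the component
pc1_score <- scale(ratings) %*% e$vectors[, 1]
loading_check <- abs(as.numeric(cor(ratings, pc1_score)))
```

With more than one component, a variable's communality would be the sum of its squared loadings across all retained components.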

Let’s consider a second example, one that results in a solution with more than one principal component. The dataset Harman23.cor contains data on 8 body measurements for 305 girls. In this case, the dataset consists of the correlations among the variables rather than the original data (see table 14.3).

Table 14.3 Correlations among body measurements for 305 girls (Harman23.cor)

| | Height | Arm span | Forearm | Lower leg | Weight | Bitro diameter | Chest girth | Chest width |
|---|---|---|---|---|---|---|---|---|
| Height | 1.00 | 0.85 | 0.80 | 0.86 | 0.47 | 0.40 | 0.30 | 0.38 |
| Arm span | 0.85 | 1.00 | 0.88 | 0.83 | 0.38 | 0.33 | 0.28 | 0.41 |
| Forearm | 0.80 | 0.88 | 1.00 | 0.80 | 0.38 | 0.32 | 0.24 | 0.34 |
| Lower leg | 0.86 | 0.83 | 0.80 | 1.00 | 0.44 | 0.33 | 0.33 | 0.36 |
| Weight | 0.47 | 0.38 | 0.38 | 0.44 | 1.00 | 0.76 | 0.73 | 0.63 |
| Bitro diameter | 0.40 | 0.33 | 0.32 | 0.33 | 0.76 | 1.00 | 0.58 | 0.58 |
| Chest girth | 0.30 | 0.28 | 0.24 | 0.33 | 0.73 | 0.58 | 1.00 | 0.54 |
| Chest width | 0.38 | 0.41 | 0.34 | 0.36 | 0.63 | 0.58 | 0.54 | 1.00 |

Source: H. H. Harman, Modern Factor Analysis, Third Edition Revised, University of Chicago Press, 1976, Table 2.3.
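Because this dataset is stored as a list rather than a data frame, a quick look at its structure can prevent mistakes later. The following base-R sketch inspects the object; `R` and `n` are illustrative names, not part of the dataset.

```r
# Harman23.cor ships with base R's datasets package.
str(Harman23.cor, max.level = 1)   # a list with cov, center, and n.obs

R <- Harman23.cor$cov              # the correlation matrix
n <- Harman23.cor$n.obs            # the sample size stored with the object

dim(R)                             # one row and column per measurement
rownames(R)                        # variable names as used in later output
isSymmetric(R)                     # a correlation matrix should be symmetric
n
```

Reading the sample size from the object, rather than typing it, is one way to keep it consistent wherever a function asks for the number of observations.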

Again, you wish to replace the original physical measurements with a smaller number of derived variables. You can determine the number of components to extract using

326 |

CHAPTER 14 Principal components and factor analysis |

Scree plot with parallel analysis

components |

3 4 |

|

|

|

|

|

|

|

of principal |

2 |

|

|

|

|

|

|

|

eigen values |

1 |

|

|

|

|

|

|

|

|

0 |

|

|

|

|

|

|

|

|

1 |

2 |

3 |

4 |

5 |

6 |

7 |

8 |

Factor Number

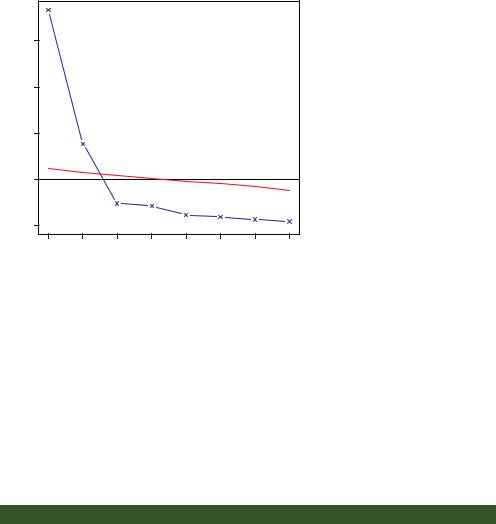

Figure 14.3 Assessing the number of principal components to retain for the body measurements example.

The scree plot (line with x’s), eigenvalues greater than 1 criteria (horizontal line), and parallel analysis with 100 simulations (dashed line) suggest retaining two components.

the following code. In this case, you need to identify the correlation matrix (the cov component of the Harman23.cor object) and specify the sample size (n.obs):

library(psych)
fa.parallel(Harman23.cor$cov, n.obs=305, fa="pc", n.iter=100,
            show.legend=FALSE, main="Scree plot with parallel analysis")

The resulting graph is displayed in figure 14.3.
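If you'd like a numeric cross-check of the eigenvalues-greater-than-1 rule without reading it off a plot, a base-R sketch along these lines may help. It uses only eigen() on the stored correlation matrix; the names `ev`, `n_kaiser`, and `prop_var` are illustrative.

```r
# Eigenvalues of the 8 x 8 correlation matrix, largest first
ev <- eigen(Harman23.cor$cov)$values
round(ev, 2)

# Number of components with eigenvalues greater than 1
n_kaiser <- sum(ev > 1)
n_kaiser

# Proportion and cumulative proportion of variance per component
prop_var <- ev / sum(ev)
round(cumsum(prop_var), 2)
```

Because the input is a correlation matrix, the eigenvalues sum to the number of variables, so each proportion is simply the eigenvalue divided by 8.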

You can see from the plot that a two-component solution is suggested. As in the first example, the Kaiser–Harris criterion, scree test, and parallel analysis agree. This won’t always be the case, and you may need to extract different numbers of components and select the solution that appears most useful. The next listing extracts the first two principal components from the correlation matrix.

Listing 14.2 Principal components analysis of body measurements

> library(psych)
> pc <- principal(Harman23.cor$cov, nfactors=2, rotate="none")
> pc
Principal Components Analysis
Call: principal(r = Harman23.cor$cov, nfactors = 2, rotate = "none")
Standardized loadings based upon correlation matrix
                PC1   PC2   h2    u2
height         0.86 -0.37 0.88 0.123
arm.span       0.84 -0.44 0.90 0.097
forearm        0.81 -0.46 0.87 0.128
lower.leg      0.84 -0.40 0.86 0.139
weight         0.76  0.52 0.85 0.150
bitro.diameter 0.67  0.53 0.74 0.261