- •List of Tables

- •List of Figures

- •Table of Notation

- •Preface

- •Boolean retrieval

- •An example information retrieval problem

- •Processing Boolean queries

- •The extended Boolean model versus ranked retrieval

- •References and further reading

- •The term vocabulary and postings lists

- •Document delineation and character sequence decoding

- •Obtaining the character sequence in a document

- •Choosing a document unit

- •Determining the vocabulary of terms

- •Tokenization

- •Dropping common terms: stop words

- •Normalization (equivalence classing of terms)

- •Stemming and lemmatization

- •Faster postings list intersection via skip pointers

- •Positional postings and phrase queries

- •Biword indexes

- •Positional indexes

- •Combination schemes

- •References and further reading

- •Dictionaries and tolerant retrieval

- •Search structures for dictionaries

- •Wildcard queries

- •General wildcard queries

- •Spelling correction

- •Implementing spelling correction

- •Forms of spelling correction

- •Edit distance

- •Context sensitive spelling correction

- •Phonetic correction

- •References and further reading

- •Index construction

- •Hardware basics

- •Blocked sort-based indexing

- •Single-pass in-memory indexing

- •Distributed indexing

- •Dynamic indexing

- •Other types of indexes

- •References and further reading

- •Index compression

- •Statistical properties of terms in information retrieval

- •Dictionary compression

- •Dictionary as a string

- •Blocked storage

- •Variable byte codes

- •References and further reading

- •Scoring, term weighting and the vector space model

- •Parametric and zone indexes

- •Weighted zone scoring

- •Learning weights

- •The optimal weight g

- •Term frequency and weighting

- •Inverse document frequency

- •The vector space model for scoring

- •Dot products

- •Queries as vectors

- •Computing vector scores

- •Sublinear tf scaling

- •Maximum tf normalization

- •Document and query weighting schemes

- •Pivoted normalized document length

- •References and further reading

- •Computing scores in a complete search system

- •Index elimination

- •Champion lists

- •Static quality scores and ordering

- •Impact ordering

- •Cluster pruning

- •Components of an information retrieval system

- •Tiered indexes

- •Designing parsing and scoring functions

- •Putting it all together

- •Vector space scoring and query operator interaction

- •References and further reading

- •Evaluation in information retrieval

- •Information retrieval system evaluation

- •Standard test collections

- •Evaluation of unranked retrieval sets

- •Evaluation of ranked retrieval results

- •Assessing relevance

- •A broader perspective: System quality and user utility

- •System issues

- •User utility

- •Results snippets

- •References and further reading

- •Relevance feedback and query expansion

- •Relevance feedback and pseudo relevance feedback

- •The Rocchio algorithm for relevance feedback

- •Probabilistic relevance feedback

- •When does relevance feedback work?

- •Relevance feedback on the web

- •Evaluation of relevance feedback strategies

- •Pseudo relevance feedback

- •Indirect relevance feedback

- •Summary

- •Global methods for query reformulation

- •Vocabulary tools for query reformulation

- •Query expansion

- •Automatic thesaurus generation

- •References and further reading

- •XML retrieval

- •Basic XML concepts

- •Challenges in XML retrieval

- •A vector space model for XML retrieval

- •Evaluation of XML retrieval

- •References and further reading

- •Exercises

- •Probabilistic information retrieval

- •Review of basic probability theory

- •The Probability Ranking Principle

- •The 1/0 loss case

- •The PRP with retrieval costs

- •The Binary Independence Model

- •Deriving a ranking function for query terms

- •Probability estimates in theory

- •Probability estimates in practice

- •Probabilistic approaches to relevance feedback

- •An appraisal and some extensions

- •An appraisal of probabilistic models

- •Bayesian network approaches to IR

- •References and further reading

- •Language models for information retrieval

- •Language models

- •Finite automata and language models

- •Types of language models

- •Multinomial distributions over words

- •The query likelihood model

- •Using query likelihood language models in IR

- •Estimating the query generation probability

- •Language modeling versus other approaches in IR

- •Extended language modeling approaches

- •References and further reading

- •Relation to multinomial unigram language model

- •The Bernoulli model

- •Properties of Naive Bayes

- •A variant of the multinomial model

- •Feature selection

- •Mutual information

- •Comparison of feature selection methods

- •References and further reading

- •Document representations and measures of relatedness in vector spaces

- •k nearest neighbor

- •Time complexity and optimality of kNN

- •The bias-variance tradeoff

- •References and further reading

- •Exercises

- •Support vector machines and machine learning on documents

- •Support vector machines: The linearly separable case

- •Extensions to the SVM model

- •Multiclass SVMs

- •Nonlinear SVMs

- •Experimental results

- •Machine learning methods in ad hoc information retrieval

- •Result ranking by machine learning

- •References and further reading

- •Flat clustering

- •Clustering in information retrieval

- •Problem statement

- •Evaluation of clustering

- •Cluster cardinality in K-means

- •Model-based clustering

- •References and further reading

- •Exercises

- •Hierarchical clustering

- •Hierarchical agglomerative clustering

- •Time complexity of HAC

- •Group-average agglomerative clustering

- •Centroid clustering

- •Optimality of HAC

- •Divisive clustering

- •Cluster labeling

- •Implementation notes

- •References and further reading

- •Exercises

- •Matrix decompositions and latent semantic indexing

- •Linear algebra review

- •Matrix decompositions

- •Term-document matrices and singular value decompositions

- •Low-rank approximations

- •Latent semantic indexing

- •References and further reading

- •Web search basics

- •Background and history

- •Web characteristics

- •The web graph

- •Spam

- •Advertising as the economic model

- •The search user experience

- •User query needs

- •Index size and estimation

- •Near-duplicates and shingling

- •References and further reading

- •Web crawling and indexes

- •Overview

- •Crawling

- •Crawler architecture

- •DNS resolution

- •The URL frontier

- •Distributing indexes

- •Connectivity servers

- •References and further reading

- •Link analysis

- •The Web as a graph

- •Anchor text and the web graph

- •PageRank

- •Markov chains

- •The PageRank computation

- •Hubs and Authorities

- •Choosing the subset of the Web

- •References and further reading

- •Bibliography

- •Author Index

DRAFT! © April 1, 2009 Cambridge University Press. Feedback welcome. |

237 |

12 Language models for information retrieval

A common suggestion to users for coming up with good queries is to think of words that would likely appear in a relevant document, and to use those words as the query. The language modeling approach to IR directly models that idea: a document is a good match to a query if the document model is likely to generate the query, which will in turn happen if the document contains the query words often. This approach thus provides a different realization of some of the basic ideas for document ranking which we saw in Section 6.2 (page 117). Instead of overtly modeling the probability P(R = 1|q, d) of relevance of a document d to a query q, as in the traditional probabilistic approach to IR (Chapter 11), the basic language modeling approach instead builds a probabilistic language model Md from each document d, and ranks documents based on the probability of the model generating the query:

P(q|Md).

In this chapter, we first introduce the concept of language models (Section 12.1) and then describe the basic and most commonly used language modeling approach to IR, the Query Likelihood Model (Section 12.2). After some comparisons between the language modeling approach and other approaches to IR (Section 12.3), we finish by briefly describing various extensions to the language modeling approach (Section 12.4).

12.1Language models

12.1.1Finite automata and language models

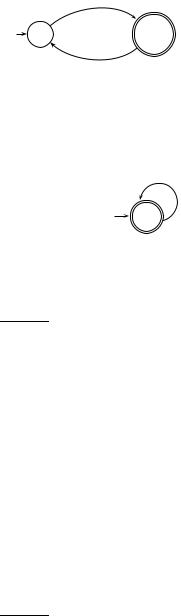

What do we mean by a document model generating a query? A traditional GENERATIVE MODEL generative model of a language, of the kind familiar from formal language theory, can be used either to recognize or to generate strings. For example, the finite automaton shown in Figure 12.1 can generate strings that include the examples shown. The full set of strings that can be generated is called

LANGUAGE the language of the automaton.1

Online edition (c) 2009 Cambridge UP

238

LANGUAGE MODEL

(12.1)

12 Language models for information retrieval

Iwish

Iwish I wish

Iwish I wish I wish

I |

wish |

I wish I wish I wish I wish I wish I wish |

|

. . . |

|||

|

|

||

|

|

CANNOT GENERATE: wish I wish |

Figure 12.1 A simple finite automaton and some of the strings in the language it generates. → shows the start state of the automaton and a double circle indicates a (possible) finishing state.

|

the |

0.2 |

|

a |

0.1 |

|

frog |

0.01 |

q1 |

toad |

0.01 |

said |

0.03 |

|

|

likes |

0.02 |

P(STOP|q1) = 0.2 |

that |

0.04 |

. . . |

. . . |

Figure 12.2 A one-state finite automaton that acts as a unigram language model. We show a partial specification of the state emission probabilities.

If instead each node has a probability distribution over generating different terms, we have a language model. The notion of a language model is inherently probabilistic. A language model is a function that puts a probability measure over strings drawn from some vocabulary. That is, for a language model M over an alphabet Σ:

∑ P(s) = 1

s Σ

One simple kind of language model is equivalent to a probabilistic finite automaton consisting of just a single node with a single probability distribution over producing different terms, so that ∑t V P(t) = 1, as shown in Figure 12.2. After generating each word, we decide whether to stop or to loop around and then produce another word, and so the model also requires a probability of stopping in the finishing state. Such a model places a probability distribution over any sequence of words. By construction, it also provides a model for generating text according to its distribution.

1. Finite automata can have outputs attached to either their states or their arcs; we use states here, because that maps directly on to the way probabilistic automata are usually formalized.

Online edition (c) 2009 Cambridge UP

12.1 Language models |

|

|

239 |

||

|

|

|

|

|

|

|

Model M1 |

|

Model M2 |

|

|

|

the |

0.2 |

the |

0.15 |

|

|

a |

0.1 |

a |

0.12 |

|

|

frog |

0.01 |

frog |

0.0002 |

|

|

toad |

0.01 |

toad |

0.0001 |

|

|

said |

0.03 |

said |

0.03 |

|

|

likes |

0.02 |

likes |

0.04 |

|

|

that |

0.04 |

that |

0.04 |

|

|

dog |

0.005 |

dog |

0.01 |

|

|

cat |

0.003 |

cat |

0.015 |

|

|

monkey |

0.001 |

monkey |

0.002 |

|

|

. . . |

. . . |

. . . |

. . . |

|

Figure 12.3 Partial specification of two unigram language models.

Example 12.1: To find the probability of a word sequence, we just multiply the probabilities which the model gives to each word in the sequence, together with the probability of continuing or stopping after producing each word. For example,

(12.2) |

P(frog said that toad likes frog) = |

(0.01 × 0.03 × 0.04 × 0.01 × 0.02 × 0.01) |

|

|

×(0.8 × 0.8 × 0.8 × 0.8 × 0.8 × 0.8 × 0.2) |

|

≈ |

0.000000000001573 |

As you can see, the probability of a particular string/document, is usually a very small number! Here we stopped after generating frog the second time. The first line of numbers are the term emission probabilities, and the second line gives the probability of continuing or stopping after generating each word. An explicit stop probability is needed for a finite automaton to be a well-formed language model according to Equation (12.1). Nevertheless, most of the time, we will omit to include STOP and (1 − STOP) probabilities (as do most other authors). To compare two models for a

LIKELIHOOD RATIO data set, we can calculate their likelihood ratio, which results from simply dividing the probability of the data according to one model by the probability of the data according to the other model. Providing that the stop probability is fixed, its inclusion will not alter the likelihood ratio that results from comparing the likelihood of two lan-

guage models generating a string. Hence, it will not alter the ranking of documents.2 Nevertheless, formally, the numbers will no longer truly be probabilities, but only proportional to probabilities. See Exercise 12.4.

Example 12.2: Suppose, now, that we have two language models M1 and M2, shown partially in Figure 12.3. Each gives a probability estimate to a sequence of

2. In the IR context that we are leading up to, taking the stop probability to be fixed across models seems reasonable. This is because we are generating queries, and the length distribution of queries is fixed and independent of the document from which we are generating the language model.

Online edition (c) 2009 Cambridge UP

240 |

12 Language models for information retrieval |

terms, as already illustrated in Example 12.1. The language model that gives the higher probability to the sequence of terms is more likely to have generated the term sequence. This time, we will omit STOP probabilities from our calculations. For the sequence shown, we get:

(12.3) |

s |

frog |

said |

that |

toad |

likes |

that |

dog |

|

M1 |

0.01 |

0.03 |

0.04 |

0.01 |

0.02 |

0.04 |

0.005 |

|

M2 |

0.0002 |

0.03 |

0.04 |

0.0001 |

0.04 |

0.04 |

0.01 |

P(s|M1) = 0.00000000000048 P(s|M2) = 0.000000000000000384

and we see that P(s|M1) > P(s|M2). We present the formulas here in terms of products of probabilities, but, as is common in probabilistic applications, in practice it is usually best to work with sums of log probabilities (cf. page 258).

12.1.2Types of language models

How do we build probabilities over sequences of terms? We can always use the chain rule from Equation (11.1) to decompose the probability of a sequence of events into the probability of each successive event conditioned on earlier events:

(12.4) |

P(t1t2t3t4) = P(t1)P(t2|t1)P(t3|t1t2)P(t4|t1t2t3) |

|

The simplest form of language model simply throws away all conditioning |

|

context, and estimates each term independently. Such a model is called a |

UNIGRAM LANGUAGE |

unigram language model: |

MODEL |

|

(12.5) |

Puni(t1t2t3t4) = P(t1)P(t2)P(t3)P(t4) |

BIGRAM LANGUAGE |

There are many more complex kinds of language models, such as bigram |

MODEL |

language models, which condition on the previous term, |

(12.6) |

Pbi(t1t2t3t4) = P(t1)P(t2|t1)P(t3|t2)P(t4|t3) |

|

and even more complex grammar-based language models such as proba- |

|

bilistic context-free grammars. Such models are vital for tasks like speech |

|

recognition, spelling correction, and machine translation, where you need |

|

the probability of a term conditioned on surrounding context. However, |

|

most language-modeling work in IR has used unigram language models. |

|

IR is not the place where you most immediately need complex language |

|

models, since IR does not directly depend on the structure of sentences to |

|

the extent that other tasks like speech recognition do. Unigram models are |

|

often sufficient to judge the topic of a text. Moreover, as we shall see, IR lan- |

|

guage models are frequently estimated from a single document and so it is |

Online edition (c) 2009 Cambridge UP