- •Introduction to adjustment calculus

- •Introduction to adjustment calculus (Third Corrected Edition)


4.10 Other Measures of Dispersion

So far, we have dealt with two measures of dispersion of a sample, namely the root mean square error (RMS) mentioned in section 4.7 and the range (Ra) mentioned in section 3.1.5. Besides the RMS and the range of a sample, the following measures of dispersion (spread) are often used.

The average or mean error $a_e$ of the sample $L$ is defined as

$$ a_e = \frac{1}{n} \sum_{i=1}^{n} |r_i| , \tag{4.31} $$

which is the mean of the absolute values of the residuals.

The most probable error $p_e$ of the sample $L$ is defined as the error for which

$$ P(|r| < p_e) = P(|r| > p_e) = 0.50 , \tag{4.32} $$

which means that there is a 50% probability that the absolute value of the residual is smaller than $p_e$ and a 50% probability that it is larger than $p_e$.

The most probable error of a random sample can be computed by constructing the CDF of the absolute values of the sample residuals and taking the value of $|r|$ at which the CDF equals 0.5 as the value of $p_e$.
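The two sample measures defined so far can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the sample values are hypothetical (not taken from the text), and the empirical CDF is read off without interpolation, taking the first absolute residual at which it reaches 0.5.

```python
def average_error(sample):
    """Mean of the absolute residuals r_i = l_i - mean(sample)."""
    n = len(sample)
    mean = sum(sample) / n
    return sum(abs(x - mean) for x in sample) / n


def most_probable_error(sample):
    """Value of |r| at which the empirical CDF of |r| reaches 0.5."""
    n = len(sample)
    mean = sum(sample) / n
    abs_residuals = sorted(abs(x - mean) for x in sample)
    # The empirical CDF at the k-th sorted value is k / n. We take the first
    # value whose CDF is at least 0.5 (assumed convention, no interpolation).
    for k, r in enumerate(abs_residuals, start=1):
        if k / n >= 0.5:
            return r


# Hypothetical sample, for illustration only.
sample = [10.1, 9.8, 10.3, 9.9, 10.4]
print("average error      :", average_error(sample))
print("most probable error:", most_probable_error(sample))
```

For small samples the result depends on how the step-shaped empirical CDF is read at 0.5; interpolating between neighbouring values would be an equally defensible choice.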

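Both $a_e$ and $p_e$ can be defined for continuous distributions as well. For instance, by considering the normal PDF $N(\mu_x, \sigma_x; x)$ and taking the absolute deviation from the mean $\mu_x$, we can write:

$$ a_e = \int_{-\infty}^{\infty} |x - \mu_x| \, \phi(x) \, dx = \frac{1}{\sigma_x \sqrt{2\pi}} \int_{-\infty}^{\infty} |x - \mu_x| \exp\left( -\frac{(x - \mu_x)^2}{2\sigma_x^2} \right) dx . \tag{4.33} $$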
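As a worked check of the integral for the average error of the normal PDF, and assuming the absolute value is taken of the deviation from the mean $\mu_x$, the substitution $t = (x - \mu_x)/\sigma_x$ gives a closed form. This is a sketch of the standard calculation, not a derivation quoted from the text:

```latex
\begin{aligned}
a_e &= \frac{1}{\sigma_x\sqrt{2\pi}} \int_{-\infty}^{\infty} |x-\mu_x|
       \exp\left(-\frac{(x-\mu_x)^2}{2\sigma_x^2}\right) dx \\
    &= \frac{\sigma_x}{\sqrt{2\pi}} \int_{-\infty}^{\infty} |t|\, e^{-t^2/2}\, dt
     = \frac{2\sigma_x}{\sqrt{2\pi}} \int_{0}^{\infty} t\, e^{-t^2/2}\, dt \\
    &= \sigma_x \sqrt{\frac{2}{\pi}} \approx 0.80\,\sigma_x .
\end{aligned}
```

The last step uses $\int_0^\infty t\, e^{-t^2/2}\, dt = 1$.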

Similarly for $p_e$, by taking the symmetry of the normal curve into account, we can write:

$$ P(x \le \mu_x - p_e) = \Psi_N(\mu_x - p_e) = \frac{1}{\sigma_x \sqrt{2\pi}} \int_{-\infty}^{\mu_x - p_e} \exp\left( -\frac{(x - \mu_x)^2}{2\sigma_x^2} \right) dx = 0.25 \tag{4.34} $$

and

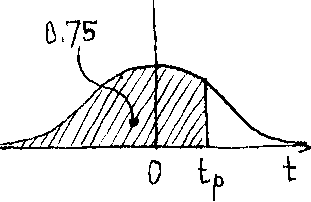

$$ P(x \le \mu_x + p_e) = \Psi_N(\mu_x + p_e) = 0.75 , \tag{4.35} $$

where $\Psi_N$ is the normal CDF.

It can be shown for the normal PDF $N(\mu_x, \sigma_x; x)$ that $\sigma_x$, $a_e$ and $p_e$ are related to each other by the following approximate relation:

$$ \sigma_x \approx 1.25\, a_e \approx 1.5\, p_e , $$

or

$$ \sigma_x : a_e : p_e = 1.0 : 0.80 : 0.67 . \tag{4.36} $$
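The approximate ratios between the standard deviation, the average error and the most probable error of a normal PDF can be checked numerically. The sketch below uses Python's standard `statistics.NormalDist`; the unit standard deviation, the integration range of ±10 and the number of steps are illustrative assumptions.

```python
from statistics import NormalDist

sigma = 1.0
normal = NormalDist(mu=0.0, sigma=sigma)

# Most probable error: P(x <= mu + p_e) = 0.75, so p_e is the 75% quantile
# measured from the mean (the mean is zero here).
p_e = normal.inv_cdf(0.75)

# Average error: integral of |x| * pdf(x), approximated by a midpoint rule
# on [-10, 10]; the tails beyond that range are negligible.
steps = 200_000
width = 20.0 / steps
a_e = 0.0
for i in range(steps):
    x = -10.0 + (i + 0.5) * width
    a_e += abs(x) * normal.pdf(x) * width

print("a_e / sigma =", round(a_e / sigma, 4))
print("p_e / sigma =", round(p_e / sigma, 4))
print("sigma / a_e =", round(sigma / a_e, 4))
print("sigma / p_e =", round(sigma / p_e, 4))
```

Rounded to two decimals, the first two ratios reproduce the proportions 1.0 : 0.80 : 0.67.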

The relative or proportional error $r_e$ of the sample $L$ is defined as the ratio between the sample RMS and the sample mean, i.e.

$$ r_e = S_L / \bar{\ell} . \tag{4.37} $$

In practice, the relative error is usually used to describe the uncertainty of the result, i.e. the sample mean. In that case, the relative error is defined as:

$$ r_e = S_{\bar{\ell}} / \bar{\ell} , \tag{4.38} $$

where $S_{\bar{\ell}}$ is the standard deviation of the mean $\bar{\ell}$ and will be derived later in Chapter 6. In this respect, one often hears expressions like "proportional accuracy 3 ppm (parts per million)", which simply means that the relative error is $3/10^6 = 3 \cdot 10^{-6}$. It should be noted that, unlike the other measures of dispersion, the relative error is unitless.

The idea of confidence intervals is based on the assumption of normality of the sample, i.e. on the postulated parent normal PDF $N(\bar{\ell}, S_L; \ell)$ for the random sample $L$. It is very common to represent the sample $L$ by its mean $\bar{\ell}$ and its standard deviation $S_L$ as

$$ [\bar{\ell} \pm S_L] $$

or

$$ [\bar{\ell} - S_L \le \ell \le \bar{\ell} + S_L] , \tag{4.39} $$

and refer to it as the "68% confidence interval" of $\ell$. This is based on the fact that the probability $P(\mu_\ell - \sigma_\ell \le \ell \le \mu_\ell + \sigma_\ell)$ is approximately 0.68 for the normal PDF (see section 4.5).

Similarly, one can talk about the "95% confidence interval", the "99% confidence interval", etc. In general, the confidence interval of $\ell$ is expressed as:

$$ [\bar{\ell} \pm K S_L] , \tag{4.40} $$

where $K$ is determined in such a way as to make $P(\mu_\ell - K\sigma_\ell \le \ell \le \mu_\ell + K\sigma_\ell)$ equal to 0.95, 0.99, etc. The values $(\bar{\ell} - K S_L)$ and $(\bar{\ell} + K S_L)$ are called the lower and the upper confidence limits.
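The factor $K$ for a given confidence level can also be obtained without tables. The following is a minimal sketch using the inverse CDF of the standard normal distribution from Python's standard library; the function name `confidence_factor` is a hypothetical helper introduced here for illustration.

```python
from statistics import NormalDist


def confidence_factor(level):
    """Return K such that P(-K <= t <= K) = level for a standard normal t."""
    # By symmetry of the normal curve, P(t <= K) = (1 + level) / 2.
    return NormalDist().inv_cdf((1.0 + level) / 2.0)


for level in (0.68, 0.95, 0.99):
    print(f"{level:.2f} confidence level: K = {confidence_factor(level):.3f}")
```

The confidence limits then follow as the sample mean minus and plus $K$ times the standard deviation.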

Example 4.7: Let us compute the average error, the relative error and the 95% confidence interval for the sample of observations $L$ given in example 4.6.

The average error is computed using equation (4.31) and the fourth column of the given table in example 4.6 as:

$$ a_e = \frac{1}{10} \sum_{i=1}^{10} |r_i| = \frac{1}{10}\,(1.90) = 0.19 \text{ m} . $$

The relative error of the sample is computed from equation (4.37) and the results obtained in example 4.6 as:

$$ r_e = S_L / \bar{\ell} = \frac{0.24}{973.05} \approx 2.5 \cdot 10^{-4} . $$

The 95% confidence interval of $\ell$ is

$$ [\bar{\ell} - K S_L \le \ell \le \bar{\ell} + K S_L] , $$

where the number $K$ is computed so that

$$ P(\mu_\ell - K\sigma_\ell \le \ell \le \mu_\ell + K\sigma_\ell) = 0.95 . $$

This is identical to the probability $P(-K \le t \le K)$ obtained from the standard normal tables (see example 4.3, the last case). Hence we can write:

$$ P(-K \le t \le K) = P(t \le K) - P(t \le -K) = 0.95 , $$

from which we get

$$ P(t \le K) = 0.975 . $$

Using the table for the standard normal variable of Appendix II-B we get:

$$ K = 1.96 $$

(in practice $K = 2$ is usually used for the 95% confidence interval). The 95% confidence interval of $\ell$ then becomes

$$ [973.05 - 1.96\,(0.24) \le \ell \le 973.05 + 1.96\,(0.24)] ; $$

that is:

$$ [972.58 \le \ell \le 973.52] \text{ m} $$

or

$$ [973.05 \pm 0.47] \text{ m} . $$
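The arithmetic of Example 4.7 can be reproduced as follows. This sketch takes the sample mean 973.05 m from the example and assumes a sample standard deviation of 0.24 m, the value implied by the confidence limits quoted in the example; the variable names are illustrative.

```python
from statistics import NormalDist

mean_l = 973.05  # sample mean in metres, as quoted in the example
s_l = 0.24       # sample standard deviation in metres (assumed, see lead-in)

# Relative error of the sample: ratio of standard deviation to mean.
relative_error = s_l / mean_l

# K such that P(t <= K) = 0.975, i.e. P(-K <= t <= K) = 0.95.
k = NormalDist().inv_cdf(0.975)

half_width = k * s_l
lower = mean_l - half_width
upper = mean_l + half_width

print(f"relative error = {relative_error:.2e}")
print(f"K = {k:.2f}")
print(f"95% confidence interval: [{lower:.2f}, {upper:.2f}] m")
print(f"or {mean_l:.2f} +/- {half_width:.2f} m")
```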


Example 4.8: Given a random variable $x$ assumed to have a normal distribution $N(35, 4; x)$, compute the most probable error.

From the assumed PDF we have $\mu_x = 35$ and $\sigma_x = 4$. The most probable error $p_e$ is computed so that

$$ P(\mu_x - p_e \le x \le \mu_x + p_e) = P(-t_p \le t \le t_p) = 0.50 , $$

where $t_p = p_e / \sigma_x = p_e / 4$.

The above probability statement can be rewritten as (see equation (4.35) and Figure 4.7b):

$$ P(x \le \mu_x + p_e) = P(t \le t_p) = 0.75 . $$

From the table in Appendix II-B, we obtain $t_p = 0.675$, so that

$$ p_e = 4\, t_p = 4\,(0.675) = 2.7 . $$

Figure 4.7b

Note that in the second case of example 4.3, the value 3.375 is nothing but the most probable error of the given random variable $h$.
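The result of Example 4.8 can be cross-checked with the inverse normal CDF instead of the tables. A minimal sketch, assuming (as in the example) that the second parameter of $N(35, 4; x)$ is the standard deviation:

```python
from statistics import NormalDist

x = NormalDist(mu=35.0, sigma=4.0)

# Most probable error: distance from the mean to the 75% quantile,
# because P(x <= mu + p_e) = 0.75.
p_e = x.inv_cdf(0.75) - x.mean

# Check the defining property P(mu - p_e <= x <= mu + p_e) = 0.50.
probability = x.cdf(x.mean + p_e) - x.cdf(x.mean - p_e)

print(f"p_e = {p_e:.3f}")
print(f"P(mu - p_e <= x <= mu + p_e) = {probability:.3f}")
```

The computed value agrees with the tabulated result 2.7 to one decimal place.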

4.11 Exercise 4

1. Prove that the Gaussian PDF given by equation (4.4) has two points of inflection at abscissas $\pm\sqrt{C/2}$.

2. For the Gaussian PDF given by equation (4.8), determine approximately the probabilities $P(-2\sigma_\varepsilon \le \varepsilon \le 2\sigma_\varepsilon)$ and $P(-3\sigma_\varepsilon \le \varepsilon \le 3\sigma_\varepsilon)$ by integrating the PDF, then check your results by using the standard normal tables.

3. Prove by direct evaluation that the standard normal PDF has a standard deviation equal to one.

4. Show that the standard deviation $\sigma$, the average error $a_e$ and the most probable error $p_e$ of the normal PDF satisfy the following approximate relations:

$$ \sigma : a_e : p_e = 1.0 : 0.80 : 0.67 . $$

5. Determine the average error, the most probable error, the relative error and the 90% confidence interval of the random sample given in the second problem of exercise 3, section 3.4.

6. Assume that the sample $H = (-5, -4, -3, -2, -1, 0, 1, 2, 3, 4, 5)$ is hypothesized (postulated) to have a Gaussian distribution. Transform this sample so that the transformed (new) sample will have:

(i) Normal distribution with mean equal to 10.

(ii) Standard normal distribution.

7. Given a random variable $x$ distributed as $N(25, 10; x)$, determine the following probabilities:

(i) $P(x < 28.5)$;

(ii) $P(x < 22.5)$;

(iii) $P(x > 27.5)$;

(iv) $P(16.75 < x < 23.82)$;

(v) $P(|x - 25| < 1.25)$.

8. For the random variable in the previous problem, determine the values $Z_i$ such that

(i) $P(x < Z_1) = 0.65$;

(ii) $P(x > Z_2) = 0.025$;

(iii) $P(x < Z_3) = 0.33$;

(iv) $P(|x - 25| < Z_4) = 0.33$;

(v) $P(|x - 25| > Z_5) = 0.50$.

9. [Figure: survey of the tower CD from the points A and B]

The above figure shows a surveying technique to determine the height $h$ of a tower CD, which cannot be measured directly. The observed quantities are:

$\ell$ = the horizontal distance AB;
$\alpha$, $\beta$ = the horizontal angles at A and B;
$\theta$ = the vertical angle of D at B.

The field results of these observations are given in the following table. After the first row, only the digits that change are listed (the decimals of $\ell$; the minutes and seconds of the angles).

| $\ell$ (m) | $\alpha$ | $\beta$ | $\theta$ |
|---|---|---|---|
| 145.63 | 65° 32' 03" | 37° 13' 08" | 42° 53' 15" |
| .55 | 32 04 | 13 11 | 52 30 |
| .59 | 31 59 | 13 10 | 53 00 |
| .65 | 32 01 | 13 13 | 51 00 |
| .58 | 31 58 | 13 06 | 52 15 |
| | | 13 12 | 52 45 |
| | | | 51 15 |
| | | | 53 00 |
| | | | 51 [seconds illegible] |
| | | | 52 15 |

The average temperature during the observation time was $T$ = 20° F. The following information was given to the observer:

(i) The micrometer of the vertical circle of the theodolite used was not adjusted to read 00' 00" when the corresponding bubble axis is horizontal; it reads -(00' 30").

(ii) The nominal length of the tape used is 20 m at the calibration temperature $T_0$ = 60° F, and the coefficient of expansion of the tape material is $\gamma = 5 \cdot 10^{-5}$ / 1° F.

Required:

(i) Compute the estimated values for the quantities $\ell$, $\alpha$, $\beta$ and $\theta$.

(ii) For each of the above observed quantities compute its standard deviation and its average error.

(iii) Compare the precision of these observed quantities (by comparing the respective relative errors).

(iv) Assuming that each of these observed quantities has a postulated normal parent PDF, construct the 95% confidence interval for each quantity.

(v) Compute the estimated value of the tower's height $h$ to the nearest centimetre.
