- Question 1 Structure of a Speech Coding System

- Question 3 Desirable Properties of a Speech Coder

- Question 4 About Coding Delay

- Question 5 Classification of Speech Coders

- Question 6 Origin of Speech Signals

- Question 7 Structure of the Human Auditory System

- Question 8 Absolute Threshold

- Question 9 Speech Coding Standards

- Question 10 Pitch Period Estimation

- Question 11 Linear Prediction

- Question 12 Error Minimization

- Question 13/14 Prediction Schemes

- Question 15 Long-Term Linear Prediction

- Question 16/17 Linear Predictive Coding (LPC)

- 16. Speech encoding. LPC encoder

- Overview

- LPC coefficient representations

- Applications

- 20/21. Speech encoding. CELP coder

- 22/23. Speech encoding. LD-CELP coder

- 14.1 Strategies to achieve low delay

- 24/25. Speech encoding. ACELP (G.729) coder

- 35. JPEG2000 in video compression (MJPEG)

- 36. Coding for high quality moving pictures (MPEG-2)

Question 13/14 Prediction Schemes

Different prediction schemes are used in various applications, as dictated by system requirements. Generally, two main techniques are applied in speech coding: internal prediction and external prediction. Figure 4.3 illustrates the schemes. For internal prediction, the LPCs derived from the estimated autocorrelation values using the frame’s data are applied to process the frame’s data themselves. In external prediction, however, the derived LPCs are used in a future frame; that is, the LPCs associated with the frame are not derived from the data residing within the frame, but from the signal’s past. External prediction is possible because the signal statistics change slowly with time. If the frame is not excessively long, its properties can be derived from the not-so-distant past.

[Figure 4.3: Internal and external prediction. Internal prediction: the LPCs derived from the autocorrelation values estimated over the interval $[m-N+1, m]$ are used to predict the signal samples within the same interval. External prediction: the autocorrelation values are estimated over a past interval, and the derived LPCs are used to predict the signal samples in the interval $[m-N+1, m]$.]

Many speech coding algorithms use internal prediction, where the LPCs of a given frame are derived from the data pertaining to the frame. Thus, the resultant LPCs capture the statistics of the frame accurately. Typical frame lengths range from 160 to 240 samples. A longer frame has the advantage of lower computational complexity and lower bit-rate, since calculation and transmission of the LPCs are done less frequently. However, a longer coding delay results, because the system has to wait longer for sample collection. Also, due to the changing nature of a nonstationary environment, the LPCs derived from a long frame might not be able to produce good prediction gain. On the other hand, a shorter frame requires more frequent updates of the LPCs, resulting in a more accurate representation of the signal statistics. Drawbacks include higher computational load and bit-rate. Most internal prediction schemes rely on nonrecursive autocorrelation estimation methods, where a finite-length window is used to extract the signal samples.
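As a rough illustration of internal prediction with nonrecursive autocorrelation estimation, the sketch below windows one frame, estimates its autocorrelation, and solves for the LPCs with the Levinson-Durbin recursion. The function names (`hamming`, `autocorrelation`, `levinson_durbin`, `internal_lpc`) are hypothetical, the Hamming window is one possible choice of finite-length window, and the sign convention assumed is $e[n] = s[n] + \sum_i a_i s[n-i]$. Plain Python is used only to keep the example self-contained.

```python
import math

def hamming(length):
    """Hamming window of the given length."""
    if length == 1:
        return [1.0]
    return [0.54 - 0.46 * math.cos(2.0 * math.pi * n / (length - 1))
            for n in range(length)]

def autocorrelation(frame, max_lag):
    """Nonrecursive estimate R[l] = sum_n x[n] x[n-l] of the windowed frame x."""
    x = [s * w for s, w in zip(frame, hamming(len(frame)))]
    return [sum(x[n] * x[n - lag] for n in range(lag, len(x)))
            for lag in range(max_lag + 1)]

def levinson_durbin(r, order):
    """Return ([a_1..a_M], minimum prediction error) for autocorrelation r.

    Assumed convention: e[n] = s[n] + sum_{i=1..M} a_i s[n-i].
    """
    a = [1.0] + [0.0] * order
    err = r[0]
    for i in range(1, order + 1):
        if err <= 0.0:                 # silent or degenerate frame: stop early
            break
        acc = r[i] + sum(a[j] * r[i - j] for j in range(1, i))
        k = -acc / err                 # reflection coefficient
        prev = a[:]
        for j in range(1, i):
            a[j] = prev[j] + k * prev[i - j]
        a[i] = k
        err *= 1.0 - k * k
    return a[1:], err

def internal_lpc(signal, m, frame_len, order):
    """LPCs for the frame [m-N+1, m], derived from that frame's own samples."""
    frame = signal[m - frame_len + 1 : m + 1]
    return levinson_durbin(autocorrelation(frame, order), order)
```

A call such as `internal_lpc(signal, m=239, frame_len=240, order=10)` would analyze the first 240 samples of `signal`; the frame length and order here are only example values.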

External prediction is prevalent in applications where low coding delay is the prime concern. In that case, a much shorter frame must be used (on the order of 20 samples, as in the LD-CELP standard; see Chapter 14). A recursive autocorrelation estimation technique is normally applied so that the LPCs are derived from the samples before the time instant $n = m - N + 1$ (Figure 4.3). Note that the shape of the window associated with a recursive autocorrelation estimation technique puts more emphasis on recent samples. Thus, the statistics associated with the estimates are very close to the actual properties of the frame itself, even though the estimation is not based on the data internal to the frame.
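One simple way to picture recursive autocorrelation estimation is an exponentially decaying window, updated one sample at a time. The sketch below is an assumption made for illustration: the one-pole window, the forgetting factor `alpha = 0.98`, and the function names are hypothetical, and this is not necessarily the window used by LD-CELP or by the text. It does show the two properties mentioned above: recent samples carry the most weight, and only samples with $n < m - N + 1$ contribute to the estimate for the frame.

```python
def recursive_autocorrelation(samples, max_lag, alpha=0.98):
    """Exponentially weighted estimate R[l] = sum_n alpha**(m-n) s[n] s[n-l].

    Updated per sample as R[l] <- alpha * R[l] + s[m] * s[m-l].
    Samples before the start of `samples` are taken as zero.
    """
    r = [0.0] * (max_lag + 1)
    past = [0.0] * max_lag              # past[l-1] holds s[m-l]
    for s in samples:
        r[0] = alpha * r[0] + s * s
        for lag in range(1, max_lag + 1):
            r[lag] = alpha * r[lag] + s * past[lag - 1]
        if max_lag > 0:
            past = [s] + past[:-1]      # shift the delay line by one sample
    return r

def external_autocorrelation(signal, m, frame_len, max_lag, alpha=0.98):
    """Estimate for the frame [m-N+1, m] using only samples n < m-N+1."""
    frame_start = m - frame_len + 1
    return recursive_autocorrelation(signal[:frame_start], max_lag, alpha)
```

The returned values can then be passed to any normal-equation solver (for instance a Levinson-Durbin routine) to obtain the LPCs applied to the frame.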

In many instances, the notions of internal and external become fuzzy. As we will see later in the book, many LP analysis schemes adopted by standardized coders are based on estimating several (usually two) sets of LPCs from contiguous analysis intervals. These coefficients are combined in a specific way and applied to a given interval for the prediction task. We skip the details for now, which are covered thoroughly in Chapter 8, when interpolation of LPCs is introduced.

Prediction Gain

Prediction gain is given here using a definition similar to that presented in the last section, with the expectations changed to summations:

$$\mathrm{PG}[m] = 10 \log_{10} \left( \frac{\sum_{n=m-N+1}^{m} s^2[n]}{\sum_{n=m-N+1}^{m} e^2[n]} \right), \tag{4.23}$$

where

$$e[n] = s[n] - \hat{s}[n] = s[n] + \sum_{i=1}^{M} a_i[m]\, s[n-i]; \quad n = m-N+1, \ldots, m. \tag{4.24}$$

The LPCs $a_i[m]$ are found from the samples inside the interval $[m-N+1, m]$ for internal prediction, and from the samples with $n < m-N+1$ for external prediction. Note that the prediction gain defined in (4.23) is a function of the time variable $m$. In practice, the average performance of a prediction scheme is often measured by the segmental prediction gain, defined as

$$\mathrm{SPG} = A\{\mathrm{PG}[m]\}, \tag{4.25}$$

which is the time average of the prediction gain for each frame in the decibel domain.
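The definitions (4.23) to (4.25) translate almost directly into code. The following is a minimal sketch under stated assumptions: the function names are hypothetical, the LPCs are passed as a list `[a_1, ..., a_M]`, and samples before index 0 are treated as zero.

```python
import math

def prediction_gain_db(signal, lpc, m, frame_len):
    """PG[m] of (4.23) in dB, with e[n] computed as in (4.24)."""
    num = den = 0.0
    for n in range(m - frame_len + 1, m + 1):
        e = signal[n]
        for i, a_i in enumerate(lpc, start=1):
            if n - i >= 0:             # samples before index 0 taken as zero
                e += a_i * signal[n - i]
        num += signal[n] ** 2
        den += e * e
    if den == 0.0:
        return math.inf                # perfect prediction
    if num == 0.0:
        return -math.inf               # silent frame with nonzero error
    return 10.0 * math.log10(num / den)

def segmental_prediction_gain_db(gains_db):
    """SPG of (4.25): arithmetic mean of the per-frame gains, taken in dB."""
    return sum(gains_db) / len(gains_db)
```

Because the averaging is done in the decibel domain, a few frames with very high gain do not dominate the result the way they would if the energy ratios were averaged before taking the logarithm.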

Example 4.2 White noise is generated using a random number generator with uniform distribution and unit variance. This signal is then filtered by an AR synthesizer with

| $i$ | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 |
|---|---|---|---|---|---|---|---|---|---|---|
| $a_i$ | 1.534 | 1 | 0.587 | 0.347 | 0.08 | -0.061 | -0.172 | -0.156 | -0.157 | -0.141 |

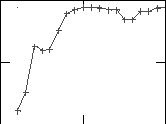

A frame of the resultant AR signal, with a length of 240 samples, is used for LP analysis. Nonrecursive autocorrelation estimation using a Hamming window is applied. LP analysis is performed with prediction order ranging from 2 to 20; prediction error and prediction gain are found for each case. Figure 4.4 summarizes the results, where we can see that the prediction gain grows initially from $M = 2$ and is maximized when $M = 10$. Further increasing the prediction order will not provide additional gain; in fact, it can even reduce it. This is an expected result since the AR model used to generate the signal has order ten.

[Figure 4.4: Prediction gain (PG) as a function of the prediction order $M$.]
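The experiment of Example 4.2 can be imitated with a short script: generate uniform white noise with unit variance, pass it through an AR synthesizer, analyze one 240-sample frame with a Hamming window, and record the prediction gain for each order from 2 to 20. The sketch below is an illustration only. All function names, the warm-up length, and the random seed are hypothetical, and the demonstration call uses a second-order AR model chosen for the sketch rather than the tenth-order coefficients of the example, so its numbers will not match Figure 4.4.

```python
import math
import random

def ar_synthesize(noise, a):
    """s[n] = x[n] - sum_i a_i s[n-i], the inverse of the prediction-error filter."""
    s = []
    for n, x in enumerate(noise):
        acc = x
        for i, a_i in enumerate(a, start=1):
            if n - i >= 0:
                acc -= a_i * s[n - i]
        s.append(acc)
    return s

def lpc_from_frame(frame, order):
    """Hamming window, nonrecursive autocorrelation, Levinson-Durbin recursion."""
    size = len(frame)
    x = [v * (0.54 - 0.46 * math.cos(2.0 * math.pi * n / (size - 1)))
         for n, v in enumerate(frame)]
    r = [sum(x[n] * x[n - lag] for n in range(lag, size))
         for lag in range(order + 1)]
    a = [1.0] + [0.0] * order
    err = r[0]
    for i in range(1, order + 1):
        k = -(r[i] + sum(a[j] * r[i - j] for j in range(1, i))) / err
        prev = a[:]
        for j in range(1, i):
            a[j] = prev[j] + k * prev[i - j]
        a[i] = k
        err *= 1.0 - k * k
    return a[1:]

def prediction_gain_db(signal, lpc, start, length):
    """Ratio of signal energy to prediction-error energy over the frame, in dB."""
    num = den = 0.0
    for n in range(start, start + length):
        e = signal[n] + sum(a_i * signal[n - i]
                            for i, a_i in enumerate(lpc, start=1))
        num += signal[n] ** 2
        den += e * e
    return 10.0 * math.log10(num / den)

def order_sweep(a_true, frame_len=240, warmup=500, orders=range(2, 21), seed=0):
    """Prediction gain of one frame for each prediction order in `orders`."""
    rng = random.Random(seed)
    half_width = math.sqrt(3.0)    # uniform on [-sqrt(3), sqrt(3)]: unit variance
    noise = [rng.uniform(-half_width, half_width)
             for _ in range(warmup + frame_len)]
    s = ar_synthesize(noise, a_true)
    frame = s[warmup:]             # skip the start-up transient of the filter
    return {m: prediction_gain_db(s, lpc_from_frame(frame, m), warmup, frame_len)
            for m in orders}

# Assumed stable AR(2) model (poles at radius 0.9, angle pi/4), not Example 4.2.
gains = order_sweep([-1.2728, 0.81])
for order in sorted(gains):
    print(order, round(gains[order], 2))
```

With a second-order model, one would expect the gain to level off right after $M = 2$, which mirrors the behaviour the example reports at $M = 10$ for its tenth-order model.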