- •Contents

- •Preface

- •Acknowledgments

- •About the author

- •About the cover illustration

- •Higher product quality

- •Less rework

- •Better work alignment

- •Remember

- •Deriving scope from goals

- •Specifying collaboratively

- •Illustrating using examples

- •Validating frequently

- •Evolving a documentation system

- •A practical example

- •Business goal

- •An example of a good business goal

- •Scope

- •User stories for a basic loyalty system

- •Key Examples

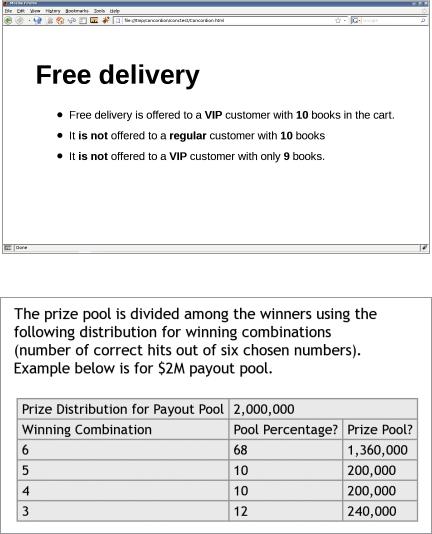

- •Key examples: Free delivery

- •Free delivery

- •Examples

- •Living documentation

- •Remember

- •Tests can be good documentation

- •Remember

- •How to begin changing the process

- •Focus on improving quality

- •Start with functional test automation

- •When: Testers own test automation

- •Use test-driven development as a stepping stone

- •When: Developers have a good understanding of TDD

- •How to begin changing the team culture

- •Avoid “agile” terminology

- •When: Working in an environment that’s resistant to change

- •Ensure you have management support

- •Don’t make test automation the end goal

- •Don’t focus on a tool

- •Keep one person on legacy scripts during migration

- •When: Introducing functional automation to legacy systems

- •Track who is running—and not running—automated checks

- •When: Developers are reluctant to participate

- •Global talent management team at ultimate software

- •Sky Network services

- •Dealing with sign-off and traceability

- •Get sign-off on exported living documentation

- •When: Signing off iteration by iteration

- •When: Signing off longer milestones

- •Get sign-off on “slimmed down use cases”

- •When: Regulatory sign-off requires details

- •Introduce use case realizations

- •When: All details are required for sign-off

- •Warning signs

- •Watch out for tests that change frequently

- •Watch out for boomerangs

- •Watch out for organizational misalignment

- •Watch out for just-in-case code

- •Watch out for shotgun surgery

- •Remember

- •Building the right scope

- •Understand the “why” and “who”

- •Understand where the value is coming from

- •Understand what outputs the business users expect

- •Have developers provide the “I want” part of user stories

- •When: Business users trust the development team

- •Collaborating on scope without high-level control

- •Ask how something would be useful

- •Ask for an alternative solution

- •Make sure teams deliver complete features

- •When: Large multisite projects

- •Further information

- •Remember

- •Why do we need to collaborate on specifications?

- •The most popular collaborative models

- •Try big, all-team workshops

- •Try smaller workshops (“Three Amigos”)

- •Pair-writing

- •When: Mature products

- •Have developers frequently review tests before an iteration

- •When: Analysts writing tests

- •Try informal conversations

- •When: Business stakeholders are readily available

- •Preparing for collaboration

- •Hold introductory meetings

- •When: Project has many stakeholders

- •Involve stakeholders

- •Undertake detailed preparation and review up front

- •When: Remote Stakeholders

- •Prepare only initial examples

- •Don’t hinder discussion by overpreparing

- •Choosing a collaboration model

- •Remember

- •Illustrating using examples: an example

- •Examples should be precise

- •Don’t have yes/no answers in your examples

- •Avoid using abstract classes of equivalence

- •Ask for an alternative way to check the functionality

- •When: Complex/legacy infrastructures

- •Examples should be realistic

- •Avoid making up your own data

- •When: Data-driven projects

- •Get basic examples directly from customers

- •When: Working with enterprise customers

- •Examples should be easy to understand

- •Avoid the temptation to explore every combinatorial possibility

- •Look for implied concepts

- •Illustrating nonfunctional requirements

- •Get precise performance requirements

- •When: Performance is a key feature

- •Try the QUPER model

- •When: Sliding scale requirements

- •Use a checklist for discussions

- •When: Cross-cutting concerns

- •Build a reference example

- •When: Requirements are impossible to quantify

- •Remember

- •Free delivery

- •Examples should be precise and testable

- •When: Working on a legacy system

- •Don’t get trapped in user interface details

- •When: Web projects

- •Use a descriptive title and explain the goal using a short paragraph

- •Show and keep quiet

- •Don’t overspecify examples

- •Start with basic examples; then expand through exploring

- •When: Describing rules with many parameter combinations

- •In order to: Make the test easier to understand

- •When: Dealing with complex dependencies/referential integrity

- •Apply defaults in the automation layer

- •Don’t always rely on defaults

- •When: Working with objects with many attributes

- •Remember

- •Is automation required at all?

- •Starting with automation

- •When: Working on a legacy system

- •Plan for automation upfront

- •Don’t postpone or delegate automation

- •Avoid automating existing manual test scripts

- •Gain trust with user interface tests

- •Don’t treat automation code as second-grade code

- •Describe validation processes in the automation layer

- •Don’t replicate business logic in the test automation layer

- •Automate along system boundaries

- •When: Complex integrations

- •Don’t check business logic through the user interface

- •Automate below the skin of the application

- •Automating user interfaces

- •Specify user interface functionality at a higher level of abstraction

- •When: User interface contains complex logic

- •Avoid recorded UI tests

- •Set up context in a database

- •Test data management

- •Avoid using prepopulated data

- •When: Specifying logic that’s not data driven

- •Try using prepopulated reference data

- •When: Data-driven systems

- •Pull prototypes from the database

- •When: Legacy data-driven systems

- •Remember

- •Reducing unreliability

- •When: Working on a system with bad automated test support

- •Identify unstable tests using CI test history

- •Set up a dedicated continuous validation environment

- •Employ fully automated deployment

- •Create simpler test doubles for external systems

- •When: Working with external reference data sources

- •Selectively isolate external systems

- •When: External systems participate in work

- •Try multistage validation

- •When: Large/multisite groups

- •Execute tests in transactions

- •Run quick checks for reference data

- •When: Data-driven systems

- •Wait for events, not for elapsed time

- •Make asynchronous processing optional

- •Getting feedback faster

- •Introduce business time

- •When: Working with temporal constraints

- •Break long test packs into smaller modules

- •Avoid using in-memory databases for testing

- •When: Data-driven systems

- •Separate quick and slow tests

- •When: A small number of tests take most of the time to execute

- •Keep overnight packs stable

- •When: Slow tests run only overnight

- •Create a current iteration pack

- •Parallelize test runs

- •When: You can get more than one test Environment

- •Try disabling less risky tests

- •When: Test feedback is very slow

- •Managing failing tests

- •Create a known regression failures pack

- •Automatically check which tests are turned off

- •When: Failing tests are disabled, not moved to a separate pack

- •Remember

- •Living documentation should be easy to understand

- •Look for higher-level concepts

- •Avoid using technical automation concepts in tests

- •When: Stakeholders aren’t technical

- •Living documentation should be consistent

- •When: Web projects

- •Document your building blocks

- •Living documentation should be organized for easy access

- •Organize current work by stories

- •Reorganize stories by functional areas

- •Organize along UI navigation routes

- •When: Documenting user interfaces

- •Organize along business processes

- •When: End-to-end use case traceability required

- •Listen to your living documentation

- •Remember

- •Starting to change the process

- •Optimizing the process

- •The current process

- •The result

- •Key lessons

- •Changing the process

- •The current process

- •Key lessons

- •Changing the process

- •Optimizing the process

- •Living documentation as competitive advantage

- •Key lessons

- •Changing the process

- •Improving collaboration

- •The result

- •Key lessons

- •Changing the process

- •Living documentation

- •Current process

- •Key lessons

- •Changing the process

- •Current process

- •Key lessons

- •Collaboration requires preparation

- •There are many different ways to collaborate

- •Looking at the end goal as business process documentation is a useful model

- •Long-term value comes from living documentation

- •Index

Specification by Example

of the underlying infrastructure or how emerging technologies can be applied. Testers know where to look for potential issues, and the team should work to prevent those issues. All this information needs to be captured when designing specifications.

Specifying collaboratively enables us to harness the knowledge and experience of the whole team. It also creates a collective ownership of specifications, making everyone more engaged in the delivery process.

Illustrating using examples

Natural language is ambiguous and context dependent. Requirements written in such language alone can’t provide a full and unambiguous context for development or testing. Developers and testers have to interpret requirements to produce software and test scripts, and different people might interpret tricky concepts differently.

This is especially problematic when something that seems obvious actually requires domain expertise or knowledge of jargon to be fully understood. Small differences in understanding have a cumulative effect, often leading to problems that require rework after delivery. This causes unnecessary delays.

Instead of waiting for specifications to be expressed precisely for the first time in a programming language during implementation, successful teams illustrate specifications using examples. The team works with the business users to identify key examples that describe the expected functionality. During this process, developers and testers often suggest additional examples that illustrate edge cases or address areas of the system that are particularly problematic. This flushes out functional gaps and inconsistencies and ensures that everyone involved has a shared understanding of what needs to be delivered, avoiding rework that results from misinterpretation and translation.

If the system works correctly for all the key examples, then it meets the specification that everyone agreed on. Key examples effectively define what the software needs to do. They’re both the target for development and an objective evaluation criterion to check to see whether the development is done.

If the key examples are easy to understand and communicate, they can be effectively used as unambiguous and detailed requirements.
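As a rough illustration of key examples acting as both the development target and the "done" criterion, here is a minimal Python sketch. The free-delivery rule, the threshold of five books, and the names `KeyExample`, `delivery_option`, `unmet_examples`, and `is_done` are hypothetical, chosen only for this illustration.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class KeyExample:
    """One agreed example: concrete inputs plus the expected outcome."""
    customer_type: str
    books_in_cart: int
    expected_delivery: str


# Hypothetical key examples, as a team might agree them with business users.
KEY_EXAMPLES = [
    KeyExample("VIP", 5, "free"),
    KeyExample("VIP", 4, "standard"),
    KeyExample("Regular", 10, "standard"),
]


def delivery_option(customer_type: str, books_in_cart: int) -> str:
    """Hypothetical system under development (assumed rule: VIP with 5+ books)."""
    if customer_type == "VIP" and books_in_cart >= 5:
        return "free"
    return "standard"


def unmet_examples(system: Callable[[str, int], str],
                   examples: List[KeyExample]) -> List[KeyExample]:
    """Return the examples where the system disagrees with the agreed outcome."""
    return [
        ex for ex in examples
        if system(ex.customer_type, ex.books_in_cart) != ex.expected_delivery
    ]


def is_done(system: Callable[[str, int], str],
            examples: List[KeyExample]) -> bool:
    """Development is done only when every key example passes."""
    return not unmet_examples(system, examples)
```

In this sketch the list of examples is the whole acceptance criterion: `is_done(delivery_option, KEY_EXAMPLES)` holds only when the system agrees with every row, and `unmet_examples` names the rows still to be discussed or implemented.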

Refining the specification

An open discussion during collaboration builds a shared understanding of the domain, but resulting examples often feature more detail than is necessary. For example, business users think about the user-interface perspective, so they offer examples of how something should work when clicking links and filling in fields. Such verbose descriptions constrain the system; detailing how something should be done rather than what is required is wasteful. Surplus details make the examples harder to communicate and understand.

Chapter 2 Key process patterns

Key examples must be concise to be useful. By refining the specification, successful teams remove extraneous information and create a concrete and precise context for development and testing. They define the target with the right amount of detail to implement and verify it. They identify what the software is supposed to do, not how it does it.

Refined examples can be used as acceptance criteria for delivery; development isn’t done until the system works correctly for all examples. After providing additional information to make key examples easier to understand, teams create a specification with examples, which is at once a specification of work, an acceptance test, and a future functional regression test.

Automating validation without changing specifications

Once a team agrees on specifications with examples and refines them, the team can use them as a target for implementation and a means to validate the product. The system will be validated many times with these tests during development to ensure that it meets the target. Running these checks manually would introduce unnecessary delays, and the feedback would be slow.

Quick feedback is an essential part of developing software in short iterations or in flow mode, so we need to make the process of validating the system cheap and efficient. An obvious solution is automation. But this automation is conceptually different from the usual developer or tester automation.

If we automate the validation of the key examples using traditional programming (unit) automation tools or traditional functional-test automation tools, we risk introducing problems if details get lost between the business specification and technical automation. Technically automated specifications will become inaccessible to business users. When the requirements change (and that’s when, not if) or when developers or testers need further clarification, we won’t be able to use the specification we previously automated. We could keep the key examples both as tests and in a more readable form, such as Word documents or web pages, but as soon as there’s more than one version of the truth, we’ll have synchronization issues. That’s why paper documentation is never ideal.

To get the most out of key examples, successful teams automate validation without changing the information. They literally keep everything in the specification the same during automation, so there’s no risk of mistranslation. As they automate validation without changing specifications, the key examples look nearly the same as they did on a whiteboard: comprehensible and accessible to all team members.

An automated specification with examples that is comprehensible and accessible to all team members becomes an executable specification. We can use it as a target for development and easily check if the system does what was agreed on, and we can use that same document to get clarification from business users. If we need to change the specification, we have to do so in only one place.
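The idea of automating validation without changing the specification can be sketched in a few lines of Python. This is not how Concordion or FitNesse work internally; it is a simplified, hypothetical automation layer. The table format, the free-delivery rule, and the names `SPECIFICATION`, `parse_table`, `delivery_option`, and `validate` are assumptions made for the illustration.

```python
from typing import Callable, Dict, List

# The specification as business users might read it on a whiteboard or wiki.
# The automation layer only reads this text; it never rewrites it.
SPECIFICATION = """
| customer type | books in cart | delivery |
| VIP           | 5             | free     |
| VIP           | 4             | standard |
| Regular       | 10            | standard |
"""


def parse_table(text: str) -> List[Dict[str, str]]:
    """Turn a pipe-delimited table into one dict per example row."""
    rows = [
        [cell.strip() for cell in line.strip().strip("|").split("|")]
        for line in text.strip().splitlines()
    ]
    header, body = rows[0], rows[1:]
    return [dict(zip(header, row)) for row in body]


def delivery_option(customer_type: str, books_in_cart: int) -> str:
    """Hypothetical system under test (assumed rule: VIP with 5+ books)."""
    if customer_type == "VIP" and books_in_cart >= 5:
        return "free"
    return "standard"


def validate(text: str,
             system: Callable[[str, int], str]) -> List[Dict[str, str]]:
    """Run every example in the text against the system; return failing rows."""
    failed = []
    for row in parse_table(text):
        actual = system(row["customer type"], int(row["books in cart"]))
        if actual != row["delivery"]:
            failed.append(row)
    return failed
```

Because the readable table is the only copy of the examples, a change to the rule is made in one place, and the same text serves both the conversation with business users and the automated check.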


If you’ve never seen a tool for automating executable specifications, this might seem unbelievable, but look at figure 2.2 and figure 2.3. They show executable specifications fully automated with two popular tools, Concordion and FitNesse.

Figure 2.2 An executable specification automated with Concordion

Figure 2.3 An executable specification automated with FitNesse

Many other automation frameworks don’t require any translation of key examples. This book focuses on the practices used by successful teams to implement Specification by Example rather than tools. To learn more about the tools, visit http://specificationbyexample.com, where you will be able to download articles explaining the most popular tools. See also the “Tools” section in the appendix for a list of suggested resources.


Tests are specifications; specifications are tests

When a specification is described with very concrete examples, it can be used to test the system. After such specification is automated, it becomes an executable acceptance test. Because I write about only these types of specifications and tests in this book, I’ll use the words specifications and tests interchangeably; for the purposes of this book, there’s no difference between them.

This doesn’t mean there aren’t other kinds of tests; for example, exploratory tests or usability tests are not specifications. The context-driven testing community tries to distinguish between these classes of tests by using the name checks for deterministic validations that can be automated and tests for nondeterministic validations that require human opinion and expert insight.† In the context-driven language, this book addresses only designing and automating checks. With Specification by Example, testers use expert opinion and insight to design good checks in collaboration with other members of the team. Testers don’t execute these checks manually, which means they have more time for other kinds of tests.

† See www.developsense.com/blog/2009/08/testing-vs-checking

Validating frequently

In order to efficiently support a software system, we have to know what it does and why. In many cases, the only way to do this is to drill down into programming code or find someone who can do that for us. Code is often the only thing we can really trust; most written documentation is outdated before the project is delivered. Programmers are oracles of knowledge and bottlenecks of information.

Executable specifications can easily be validated against the system. If this validation is frequent, then we can have as much confidence in the executable specifications as we have in the code.

By checking all executable specifications frequently, teams I talked to quickly discover any differences between the system and the specifications. Because executable specifications are easy to understand, the teams can discuss the changes with their business users and decide how to address the problems. They constantly synchronize their systems and executable specifications.
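Frequent validation can be pictured as a routine that runs every executable specification against the current system and reports where the two have drifted apart. The following Python sketch is a simplified, hypothetical model. The `SPECS` structure, the two versions of the delivery rule, and the function `find_differences` are assumptions for illustration; in practice a continuous build would typically trigger such a run.

```python
from typing import Callable, Dict, List, Tuple

# Each executable specification is modeled as a name plus a list of
# ((inputs), expected outcome) pairs. All values here are hypothetical.
Example = Tuple[Tuple[str, int], str]

SPECS: Dict[str, List[Example]] = {
    "Free delivery": [
        (("VIP", 5), "free"),
        (("VIP", 4), "standard"),
        (("Regular", 10), "standard"),
    ],
}


def delivery_v1(customer_type: str, books: int) -> str:
    """System as originally agreed: VIP customers with 5+ books."""
    return "free" if customer_type == "VIP" and books >= 5 else "standard"


def delivery_v2(customer_type: str, books: int) -> str:
    """System after an unannounced change: threshold lowered to 3 books."""
    return "free" if customer_type == "VIP" and books >= 3 else "standard"


def find_differences(system: Callable[[str, int], str],
                     specs: Dict[str, List[Example]]) -> Dict[str, List[str]]:
    """Check every specification; report only those that no longer match."""
    report: Dict[str, List[str]] = {}
    for name, examples in specs.items():
        problems = []
        for args, expected in examples:
            actual = system(*args)
            if actual != expected:
                problems.append(f"{args}: expected {expected}, got {actual}")
        if problems:
            report[name] = problems
    return report
```

An empty report means the system and its specifications are in sync. A non-empty one, as `delivery_v2` would produce here, points at the specific example to discuss with business users: either the code is wrong or the specification needs updating.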