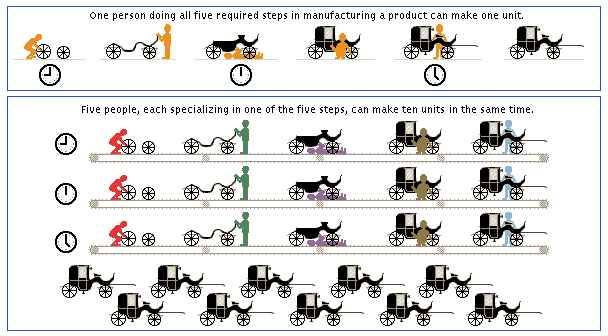

- •Division of Labor in Industry

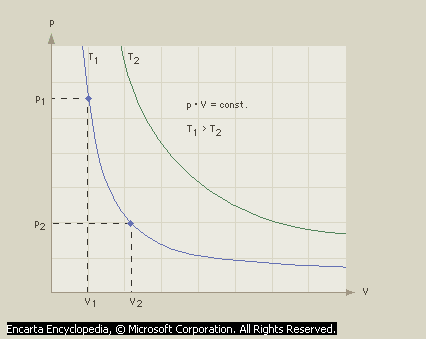

- •Boyle’s Law

- •Iron manufacturing originated about 3500 years ago when iron ore was accidentally heated in the presence of charcoal. The oxygen-laden ore was reduced to a product similar to modern wrought iron.

- •Pioneer Space Probe

- •Galileo Orbiter and Probe

- •Gemini Spacecraft

- •Apollo Command and Service Module

- •Skylab Space Station

- •Space-Shuttle Orbiter

- •Galileo Orbiter Trajectory

Industrial Revolution

I. INTRODUCTION

Industrial Revolution, widespread replacement of manual labor by machines that began in Britain in the 18th century and is still continuing in some parts of the world. The Industrial Revolution was the result of many fundamental, interrelated changes that transformed agricultural economies into industrial ones. The most immediate changes were in the nature of production: what was produced, as well as where and how. Goods that had traditionally been made in the home or in small workshops began to be manufactured in the factory. Productivity and technical efficiency grew dramatically, in part through the systematic application of scientific and practical knowledge to the manufacturing process. Efficiency was also enhanced when large groups of business enterprises were located within a limited area. The Industrial Revolution led to the growth of cities as people moved from rural areas into urban communities in search of work.

The changes brought by the Industrial Revolution overturned not only traditional economies, but also whole societies. Economic changes caused far-reaching social changes, including the movement of people to cities, the availability of a greater variety of material goods, and new ways of doing business. The Industrial Revolution was the first step in modern economic growth and development. Economic development was combined with superior military technology to make the nations of Europe and their cultural offshoots, such as the United States, the most powerful in the world in the 18th and 19th centuries.

The Industrial Revolution began in Great Britain during the last half of the 18th century and spread through regions of Europe and to the United States during the following century. In the 20th century industrialization on a wide scale extended to parts of Asia and the Pacific Rim. Today mechanized production and modern economic growth continue to spread to new areas of the world, and much of humankind has yet to experience the changes typical of the Industrial Revolution.

The Industrial Revolution is called a revolution because it changed society both significantly and rapidly. Over the course of human history, there has been only one other group of changes as significant as the Industrial Revolution. This is what anthropologists call the Neolithic Revolution, which took place in the later part of the Stone Age. In the Neolithic Revolution, people moved from social systems based on hunting and gathering to much more complex communities that depended on agriculture and the domestication of animals. This led to the rise of permanent settlements and, eventually, urban civilizations. The Industrial Revolution brought a shift from the agricultural societies created during the Neolithic Revolution to modern industrial societies.

The social changes brought about by the Industrial Revolution were significant. As economic activities in many communities moved from agriculture to manufacturing, production shifted from its traditional locations in the home and the small workshop to factories. Large portions of the population relocated from the countryside to the towns and cities where manufacturing centers were found. The overall amount of goods and services produced expanded dramatically, and the proportion of capital invested per worker grew. New groups of investors, businesspeople, and managers took financial risks and reaped great rewards.

In the long run the Industrial Revolution has brought economic improvement for most people in industrialized societies. Many enjoy greater prosperity and improved health, especially those in the middle and the upper classes of society. There have been costs, however. In some cases, the lower classes of society have suffered economically. Industrialization has also harmed the natural environment through factory pollutants and greater land use. In particular, the application of machinery and science to agriculture has brought more land into use and, therefore, caused extensive loss of habitat for animals and plants. In addition, drastic population growth following industrialization has contributed to the decline of natural habitats and resources. These factors, in turn, have caused many species to become extinct or endangered.

II. GREAT BRITAIN LEADS THE WAY

Ever since the Renaissance (14th century to 17th century), Europeans had been inventing and using ever more complex machinery. Particularly important were improvements in transportation, such as faster ships, and communication, especially printing. These improvements played a key role in the development of the Industrial Revolution by encouraging the movement of new ideas and mechanisms, as well as the people who knew how to build and run them.

Then, in the 18th century in Britain, new production methods were introduced in several key industries, dramatically altering how these industries functioned. These new methods included different machines, fresh sources of power and energy, and novel forms of organizing business and labor. For the first time technical and scientific knowledge was applied to business practices on a large scale. Humankind had begun to develop mass production. The result was an increase in material goods, usually selling for lower prices than before.

The Industrial Revolution began in Great Britain because social, political, and legal conditions there were particularly favorable to change. Property rights, such as those for patents on mechanical improvements, were well established. More importantly, the predictable, stable rule of law in Britain meant that monarchs and aristocrats were less likely to arbitrarily seize earnings or impose taxes than they were in many other countries. As a result, earnings were safer, and ambitious businesspeople could gain wealth, social prestige, and power more easily than could people on the European continent. These factors encouraged risk taking and investment in new business ventures, both crucial to economic growth.

In addition, Great Britain’s government pursued a relatively hands-off economic policy. This free-market approach was popularized by British philosopher and economist Adam Smith in his book The Wealth of Nations (1776). The hands-off policy permitted fresh methods and ideas to flourish with little interference or regulation.

Britain’s nurturing social and political setting encouraged the changes that began in a few trades to spread to others. Gradually the new ways of production transformed more and more parts of the British economy, although older methods continued in many industries. Several industries played key roles in Britain’s industrialization. Iron and steel manufacture, the production of steam engines, and textiles were all powerful influences, as was the rise of a machine-building sector able to spread mechanization to other parts of the economy.

A. Changes in Industry

Modern industry requires power to run its machinery. During the development of the Industrial Revolution in Britain, coal was the main source of power. Even before the 18th century, some British industries had begun using the country’s plentiful coal supply instead of wood, which was much scarcer. Coal was adopted by the brewing, metalworking, and glass and ceramics industries, demonstrating its potential for use in many industrial processes.

A1. Iron and Coal

A major breakthrough in the use of coal occurred in 1709 at Coalbrookdale in the valley of the Severn River. There English industrialist Abraham Darby successfully used coke (a high-carbon, converted form of coal) to produce iron from iron ore. Using coke eliminated the need for charcoal, a more expensive, less efficient fuel. Metal makers thereafter discovered ways of using coal and coke to speed the production of raw iron, bar iron, and other metals.

The most important advance in iron production occurred in 1784 when Englishman Henry Cort invented new techniques for rolling raw iron, a finishing process that shapes iron into the desired size and form. These advances in metalworking were an important part of industrialization. They enabled iron, which was relatively inexpensive and abundant, to be used in many new ways, such as building heavy machinery. Iron was well suited for heavy machinery because of its strength and durability. Because of these new developments iron came to be used in machinery for many industries.

Iron was also vital to the development of railroads, which improved transportation. Better transportation made commerce easier and, together with the growth of commerce, enabled economic growth to spread to additional regions. In this way, the changes of the Industrial Revolution reinforced each other, working together to transform the British economy.

A2. Steam

If iron was the key metal of the Industrial Revolution, the steam engine was perhaps the most important machine technology. Inventions and improvements in the use of steam for power began prior to the 18th century, as they had with iron. As early as 1698, English engineer Thomas Savery created a steam engine to pump water from mines. Thomas Newcomen, another English engineer, developed an improved version by 1712. Scottish inventor and mechanical engineer James Watt made the most significant improvements, allowing the steam engine to be used in many industrial settings, not just in mining. Early mills had run successfully on water power, but the adoption of the steam engine meant that a factory could be located anywhere, not just close to water.

In 1775 Watt formed an engine-building and engineering partnership with manufacturer Matthew Boulton. This partnership became one of the most important businesses of the Industrial Revolution. Boulton & Watt served as a kind of creative technical center for much of the British economy. They solved technical problems and spread the solutions to other companies. Similar firms did the same thing in other industries and were especially important in the machine tool industry. This type of interaction between companies was important because it reduced the amount of research time and expense that each business had to spend working with its own resources. The technological advances of the Industrial Revolution happened more quickly because firms often shared information, which they then could use to create new techniques or products.

Like iron, steam engines found uses in many other industries, including steamboats and railroads. Steam engines are another example of how some changes brought by industrialization led to even more changes in other areas.

A3. Textiles

The industry most often associated with the Industrial Revolution is the textile industry. In earlier times, the spinning of yarn and the weaving of cloth occurred primarily in the home, with most of the work done by people working alone or with family members. This pattern lasted for many centuries. In 18th-century Great Britain a series of extraordinary innovations reduced and then replaced the human labor required to make cloth. Each advance created problems elsewhere in the production process that led to further improvements. Together they made a new system to supply clothing.

The first important invention in textile production came in 1733. British inventor John Kay created a device known as the flying shuttle, which partially mechanized the process of weaving. By 1770 British inventor and industrialist James Hargreaves had invented the spinning jenny, a machine that spins a number of threads at once, and British inventor and cotton manufacturer Richard Arkwright had organized the first production using water-powered spinning. These developments permitted a single spinner to make numerous strands of yarn at the same time. By about 1779 British inventor Samuel Crompton introduced a machine called the mule, which further improved mechanized spinning by decreasing the danger that threads would break and by creating a finer thread.

Throughout the textile industry, specialized machines powered either by water or steam appeared. Row upon row of these innovative, highly productive machines filled large, new mills and factories. Soon Britain was supplying cloth to countries throughout the world. This industry seemed to many people to be the embodiment of an emerging, mechanized civilization.

The most important results of these changes were enormous increases in the output of goods per worker. A single spinner or weaver, for example, could now turn out many times the volume of yarn or cloth that earlier workers had produced. This marvel of rising productivity was the central economic achievement that made the Industrial Revolution such a milestone in human history.

B. Changes in Society

The Industrial Revolution also had considerable impact upon the nature of work. It significantly changed the daily lives of ordinary men, women, and children in the regions where it took root and grew.

B1. Growth of Cities

One of the most obvious changes to people’s lives was that more people moved into the urban areas where factories were located. Many of the agricultural laborers who left villages were forced to move. Beginning in the early 18th century, more people in rural areas were competing for fewer jobs. The rural population had risen sharply as new sources of food became available, and death rates declined due to fewer plagues and wars. At the same time, many small farms disappeared. This was partly because new enclosure laws required farmers to put fences or hedges around their fields to prevent common grazing on the land. Some small farmers who could not afford to enclose their fields had to sell out to larger landholders and search for work elsewhere. These factors combined to provide a ready work force for the new industries.

New manufacturing towns and cities grew dramatically. Many of these cities were close to the coalfields that supplied fuel to the factories. Factories had to be close to sources of power because power could not be distributed very far. The names of British factory cities soon symbolized industrialization to the wider world: Liverpool, Birmingham, Leeds, Glasgow, Sheffield, and especially Manchester. In the early 1770s Manchester numbered only 25,000 inhabitants. By 1850, after it had become a center of cotton manufacturing, its population had grown to more than 350,000.

In pre-industrial England, more than three-quarters of the population lived in small villages. By the mid-19th century, however, the country had made history by becoming the first nation with half its population in cities. By 1850 millions of British people lived in crowded, grim industrial cities. Reformers began to speak of the mills and factories as dark, evil places.

B2. Effects on Labor

The movement of people away from agriculture and into industrial cities brought great stresses to many people in the labor force. Women in households who had earned income from spinning found the new factories taking away their source of income. Traditional handloom weavers could no longer compete with the mechanized production of cloth. Skilled laborers sometimes lost their jobs as new machines replaced them.

In the factories, people had to work long hours under harsh conditions, often with few rewards. Factory owners and managers paid the minimum amount necessary for a work force, often recruiting women and children to tend the machines because they could be hired for very low wages. Soon critics attacked this exploitation, particularly the use of child labor.

The nature of work changed as a result of division of labor, an idea important to the Industrial Revolution that called for dividing the production process into basic, individual tasks. Each worker would then perform one task, rather than a single worker doing the entire job. Such division of labor greatly improved productivity, but many of the simplified factory jobs were repetitive and boring. Workers also labored long hours, often more than 12 hours a day and sometimes more than 14, six days a week. Factory workers faced strict rules and close supervision by managers and overseers. The clock ruled life in the mills.

By about the 1820s, income levels for most workers began to improve, and people adjusted to the different circumstances and conditions. By that time, Britain had changed forever. The economy was expanding at a rate that was more than twice the pace at which it had grown before the Industrial Revolution. Although vast differences existed between the rich and the poor, most of the population enjoyed some of the fruits of economic growth. The widespread poverty and constant threat of mass starvation that had haunted the preindustrial age lessened in industrial Britain. Although the overall health and material conditions of the populace clearly improved, critics continued to point to urban crowding and the harsh working conditions for many in the mills.

III. THE INDUSTRIAL REVOLUTION IN THE UNITED STATES

The economic successes of the British soon led other nations to try to follow the same path. In northern Europe, mechanics and investors in France, Belgium, Holland, and some of the German states set out to imitate Britain’s successful example. In the young United States, Secretary of the Treasury Alexander Hamilton called for an Industrial Revolution in his Report on Manufactures (1791). Many Americans felt that the United States had to become economically strong in order to maintain its recently won independence from Great Britain. In cities up and down the Atlantic Coast, leading citizens organized associations devoted to the encouragement of manufactures.

The Industrial Revolution unfolded in the United States even more vigorously than it had in Great Britain. The young nation began as a weak, loose association of former colonies with a traditional economy. More than three-quarters of the labor force worked in agriculture in 1790. Americans soon enjoyed striking success in mechanization, however. This was clear in 1851 when producers from many nations gathered to display their industrial triumphs at the first World’s Fair, at the Crystal Palace in London. There, it was the work of Americans that attracted the most attention. Shortly after that, the British government dispatched a special committee to the United States to study the manufacturing accomplishments of its former colonies. By the end of the century, the United States was the world leader in manufacturing and stood at the forefront of what became known as the Second Industrial Revolution. The American economy had emerged as the largest and most productive on the globe.

A. American Advantages

The United States enjoyed many advantages that made it fertile ground for an Industrial Revolution. A rich, sparsely inhabited continent lay open to exploitation and development. It proved relatively easy for the United States government to buy or seize vast lands across North America from Native Americans, from European nations, and from Mexico. In addition, the American population was highly literate, and most felt that economic growth was desirable. With settlement stretched across the continent from the Atlantic Ocean to the Pacific Ocean, the United States enjoyed a huge internal market. Within its distant borders there was remarkably free movement of goods, people, capital, and ideas.

The young nation also inherited many advantages from Great Britain. The stable legal and political systems that had encouraged enterprise and rewarded initiative in Great Britain also did so, with minor variations, in the United States. No nation was more open to social mobility, at least for white male Protestants. Others—particularly African Americans, Native Americans, other minorities, and women—found the atmosphere much more difficult. In the context of the times, however, the United States was relatively open to change. It quickly adopted many of the technologies, forms of organization, and attitudes shaping the new industrial world, and then proceeded to generate its own advances.

One initial American advantage was the fact that the United States shared the language and much of the culture of Great Britain, the pioneering industrial nation. This helped Americans transfer technology to the United States. As descriptions of new machines and processes appeared in print, Americans read about them eagerly and tried their own versions of the inventions sweeping Britain.

Critical to furthering industrialization in the United States were machines and knowledgeable people. Although the British tried to prevent skilled mechanics from leaving Britain and advanced machines from being exported, those efforts mostly proved ineffective. Americans worked actively to encourage such transfers, even offering bounties (special monetary rewards) to encourage people with knowledge of the latest methods and devices to move to the United States.

The most dramatic early example of a successful technical transfer is the case of Samuel Slater. Slater was an important figure in a leading British textile firm who sailed to the United States masquerading as a farmer. He eventually moved to Rhode Island, where he worked with mechanics, machine builders, and merchants to create the first important textile mill in the United States. Slater had worked as an apprentice under Richard Arkwright, and Slater’s mill used Arkwright’s innovative system of mechanized spinning. The firm of Almy, Brown, and Slater inspired many imitators and gave birth to a vast textile industry in New England.

The lure of the open, growing United States was strong. Its opportunities attracted knowledgeable, ambitious individuals not only from Britain but from other European countries as well. In 1800, for example, a young Frenchman named Eleuthère Irénée du Pont de Nemours brought to the United States his knowledge of the latest French advances in chemistry and gunpowder making. In 1802 he founded what would become one of the largest and most successful American businesses, E. I. du Pont de Nemours and Company, better known simply as DuPont.

B. American Challenges

Soon the United States was pioneering on its own. Because local circumstances and conditions in the United States were somewhat different from those in Britain, industrialization also developed somewhat differently. Although the United States had many natural resources in abundance, some were more plentiful than others. The profusion of wood in North America, for example, led Americans to use that material much more than Europeans did. They burned wood widely as fuel and also made use of it in machinery and in construction. Taking advantage of the vast forest resources in their country, Americans built the world’s best woodworking machines.

Transportation and communication were special challenges in a nation that stretched across the North American continent. Economic growth depended on tying together the resources, markets, and people of this large area. Despite the general conviction that private enterprise was best, the government played an active role in uniting the country, particularly by building roads. From 1815 to 1860 state and local governments also provided almost three-quarters of the financing for canal construction and related improvements to waterways.

When the British began building railroads, Americans embraced this new technology eagerly, and substantial public money was invested in rail systems. By 1860 more than half the railroad tracks in the world were in the United States. The most critical 19th-century improvement in communication, the telegraph, was invented by American Samuel F. B. Morse. The telegraph allowed messages to be sent long distances almost instantly by using a code of electrical pulses passing over a wire. The railroad and the telegraph spread across North America and helped create a national market, which in turn encouraged additional improvements in transportation and communication.

Another challenge in the United States was a relative shortage of labor. Much more than in continental Europe or in Britain, labor was in chronically short supply in the United States. This led industrialists to develop machinery to replace human labor.

C. Changes in Industry

Americans soon demonstrated a great talent for mechanization. Famed American arms maker Samuel Colt summarized his fellow citizens’ faith in technology when he declared in 1851, “There is nothing that cannot be produced by machinery.”

C1. Continuous-Process Manufacturing

An important American development was continuous-process manufacturing. In continuous-process manufacturing, large quantities of the same product, such as cigarettes or canned food, are made in a nonstop operation. The process runs continuously, except for repairs to or maintenance of the machinery used. In the late 18th century, inventor Oliver Evans of Delaware created a remarkable water-powered flour mill. In Evans’s mill, machinery elevated the grain to the top of the mill and then moved it mechanically through various processing steps, eventually producing flour at the bottom of the mill. The process greatly reduced the need for manual labor and cut milling costs dramatically. Mills modeled after Evans’s were built along the Delaware and Brandywine rivers and Chesapeake Bay, and by the end of the 18th century they were arguably the most productive in the world. Similar milling technology was also used to grind snuff and other tobacco products in the same region.

As the 19th century passed, Americans improved continuous-process technology and expanded its use. The basic principle of utilizing gravity-powered and mechanized systems to move and process materials proved applicable in many settings. The meatpacking industry in the Midwest employed a form of this technology, as did many industries using distilling and refining processes. Items made using continuous-process manufacturing included kerosene, gasoline, and other petroleum products, as well as many processed foods. Mechanized, continuous processing yielded uniform quantity production with a minimum need for human labor.

C2. The American System

In a closely related development, by the mid-19th century American manufacturers shaped a set of techniques later known as the American system of production. This system involved using special-purpose machines to produce large quantities of similar, sometimes interchangeable, parts that would then be assembled into a finished product. The American system extended the idea of division of labor from workers to specialized machines. Instead of a worker making a small part of a finished product, a machine made the part, speeding the process and allowing manufacturers to produce goods more quickly. This method also yielded goods of much more uniform quality than those made by hand labor. The American system appeared first in New England in the manufacture of clocks, locks, axes, and shovels. Around the same time, the federal armories used an advanced version of this same system to produce large numbers of firearms, giving rise to the term armory practice.

Soon a group of knowledgeable mechanics and engineers spread the American system. Many industries began to use special-purpose machines to produce large quantities of similar or even interchangeable parts for assembly into finished goods. The American system was used by inventor and manufacturer Cyrus Hall McCormick to produce his innovative reapers; Samuel Colt used it to make revolver pistols; and inventor Isaac Merritt Singer produced his popular sewing machines using this system. These kinds of products won prizes and attracted much attention at the Crystal Palace exhibition of 1851.

D. The Second Industrial Revolution

As American manufacturing technology spread to new industries, it ushered in what many have called the Second Industrial Revolution. The first had come on a wave of new inventions in iron making, in textiles, in the centrally powered factory, and in new ways of organizing business and work. In the latter 19th century, a second wave of technical and organizational advances carried industrial society to new levels. While Great Britain had been the birthplace of the first revolution, the second occurred most powerfully in the United States.

With the second revolution came many new processes. Iron and steel manufacturing was transformed in the 1850s and 1860s by vastly more productive technologies, the Bessemer process and the open-hearth furnace. The Bessemer process, developed by British inventor Henry Bessemer, enabled steel to be produced more efficiently by using blasts of air to convert crude iron into steel. The open-hearth furnace, created by German-born British inventor William Siemens, allowed steelmakers to achieve temperatures high enough to burn away impurities in crude iron.

In addition, factories and their production output became much larger than they had been in the first stage of the Industrial Revolution. Some industries concentrated production in fewer but bigger and more productive facilities. In addition, some industries boosted production in existing (not necessarily larger) factories. This growth was enabled by a variety of factors, including technological and scientific progress; improved management; and expanding markets due to larger populations, rising incomes, and better transportation and communications.

American industrialist Andrew Carnegie built a giant iron and steel empire using huge new plants. John D. Rockefeller, another American industrialist, did the same in petroleum refining. Soon there were enormous advances in science-based industries—for example, chemicals, electrical power, and electrical machinery. Just as in the first revolution, these changes prompted further innovations, which led to further economic growth.

It was in the automobile industry that continuous-process methods and the American system combined to greatest effect. In 1903 American industrialist Henry Ford founded the Ford Motor Company. His production innovation was the moving assembly line, which brought together many mass-produced parts to create automobiles. Ford’s moving assembly line gave the world the fullest expression yet of the Second Industrial Revolution, and his production triumphs in the second decade of the 20th century signaled the crest of the new industrial age.

D1. Organization and Work

Just as important as advances in manufacturing technology was a wave of changes in how business was structured and work was organized. Beginning with the large railroad companies, business leaders learned how to operate and coordinate many different economic activities across broad geographic areas. During the first phase of the Industrial Revolution, many factories had grown into large organizations, but even by 1875 few firms coordinated production and marketing across many business units. Leaders such as Carnegie and Rockefeller changed this, and firms grew much larger in numerous industries, giving birth to the modern corporation.

Within the business unit, Americans pioneered novel ways of organizing work. Engineers studied and modified production, seeking the most efficient ways to lay out a factory, move materials, route jobs, and control work through precise scheduling. Industrial engineer Frederick W. Taylor and his followers sought both efficiency and contented workers. They believed that they could achieve those results through precise measurement and analysis of each aspect of a job. Taylor’s The Principles of Scientific Management (1911) became the most influential book of the Second Industrial Revolution. By the early 20th century, Ford’s mass production techniques and Taylor’s scientific management principles had come to symbolize America’s place as the leading industrial nation.

|

D2 |

|

Changes in Agriculture |

As it had done in Britain, industrialization brought deep and often distressing shifts to American society. The influence of rural life declined, and the relative economic importance of agriculture dwindled. Although the amount of land under cultivation and the number of people earning a living from agriculture expanded, the growth of commerce, manufacturing, and the service industries steadily eclipsed farming’s significance. The proportion of the work force dependent on agriculture shrank constantly from the time of the first federal census in 1790. From that time until the end of the 19th century, farm workers dropped from about 75 percent of the work force to about 40 percent.

New technology was introduced in agriculture. The scarcity of labor and the growth of markets for agricultural products encouraged the introduction of machinery to the farms. Machinery increased productivity so that fewer hands could produce more food per acre. New plows, seed drills, cultivators, mowers, and threshers, as well as the reaper, all appeared by 1860. After that, better harvesters and binding machines came into use, as did the harvester-threshers known as combines. Farmers also used limited steam power in the late 19th century, and by about 1905 they began using gasoline-powered tractors. At about the same time, Americans began to apply science systematically to agriculture, such as by using genetics as a basis for plant breeding. These techniques, plus fertilizers and pesticides, helped to increase farm productivity.

|

E |

|

Changes in Society |

As in Britain, the Industrial Revolution in the United States led to major social changes. The urban population grew, the rural share of the population declined, and the nature of labor changed dramatically.

|

E1 |

|

Growth of Cities |

As a result of the shift in economic importance from agriculture to manufacturing, American cities grew both in number and in population. From 1860 to 1900 the number of urban areas in the United States expanded fivefold. Even more striking was the explosion in the growth of big cities. In 1860 there were only 9 American cities with more than 100,000 inhabitants; by 1900 there were 38. Like the British critics of the preceding century, many Americans viewed these industrial and commercial centers as dark and dirty places crowded with exploited workers. But whatever the drawbacks of city life, urban growth in the United States was unstoppable, fueled by both the movement of rural Americans to the cities and a swelling tide of immigrants from Europe. In 1790 only about 5 percent of the American population lived in cities; today more than 75 percent does. This long-term trend is characteristic of societies experiencing industrialization and is evident today in regions of Asia and Latin America that are now undergoing an industrial revolution.

|

E2 |

|

Effects on Labor |

Division of Labor in Industry

Division of labor is a basic tenet of industrialization. In division of labor, each worker is assigned to a different task, or step, in the manufacturing process, and as a result, total production increases. As this illustration shows, one person performing all five steps in the manufacture of a product can make one unit in a day. Five workers, each specializing in one of the five steps, can make 10 units in the same amount of time.
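The arithmetic behind this illustration can be checked with a short sketch. The function name and layout are illustrative assumptions; only the unit counts come from the caption above.

```python
def output_per_worker(units_per_day: float, workers: int) -> float:
    """Return the number of units produced per worker per day."""
    if workers <= 0:
        raise ValueError("workers must be positive")
    return units_per_day / workers

# Figures taken from the illustration described above.
solo = output_per_worker(units_per_day=1, workers=1)    # one person, all five steps
team = output_per_worker(units_per_day=10, workers=5)   # five specialists

# Ratio of specialized to unspecialized output per worker.
gain = team / solo
print(f"Solo: {solo:.1f} unit/day, team: {team:.1f} units/worker/day, gain: {gain:.1f}x")
```

On these figures, specialization doubles output per worker; real gains vary with the product and the process.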

Industrialization brought to the United States conflicts and stresses similar to the ones encountered in Britain and in Europe. Those who had a stake in the traditional economy lost ground as mechanized production replaced household manufacturing. Often, skilled workers found their income and their status under attack from the new machines and the relentless division of labor. Businesses had always enjoyed considerable power in their relationships with the labor force, but the balance tipped even more in their favor as firms grew larger.

In order to counter the power of business, workers tried to form trade unions to represent them and bargain for rights. Initially they had only limited success. Occasional strikes, sometimes violent, appeared as signs of underlying tensions. Until the Great Depression of the 1930s, skilled craft workers were almost the only groups able to sustain unions. The most successful of these unions were those in the American Federation of Labor. They did not seek fundamental social or economic change, such as socialists advocated; instead they accepted industrial society and concentrated on improving the wages and working conditions of their members.

Eventually the United States digested the tensions and dislocations caused by the coming of industry and the growth of cities. The government began to enact regulations and antitrust laws to counter the worst excesses of big business. The Sherman Antitrust Act of 1890 was created to prevent corporate trusts, monopoly enterprises formed to reduce competition and allow essentially a single business firm to control the price of a product. Laws such as the Fair Labor Standards Act, enacted in 1938, mandated worker protections, including a minimum wage and overtime pay for hours worked beyond a standard workweek. Above all, the rising incomes and high rates of economic growth proved calming. Material progress convinced most Americans that industrialization had been a positive development, although the challenge of balancing business growth and worker rights remains an issue to this day.

|

IV |

|

THE INDUSTRIAL REVOLUTION AROUND THE WORLD |

After the first appearance of industrialization in Britain, many other nations eagerly pursued similar changes. In the 19th century the Industrial Revolution spread not only to the United States, but also to Germany, France, Belgium, and much of the rest of western Europe. Often, skilled British workers and knowledgeable entrepreneurs moved to other countries and taught the manufacturing techniques they had learned in Britain.

Change happened somewhat differently in each setting because of varying resources, political conditions, and social and economic circumstances. In France, industrial development was somewhat delayed by political turmoil and a lack of coal, but the central government played a more active role in development than Britain’s had. Both countries created railroad networks, for example, but the British did so entirely through private companies, while the French central government funded much of its country’s railways. Craft production, in which people make decorative or functional items by hand, also remained a more significant element in the French economy than it did in Britain. In some industries, such as furniture manufacturing, the extent of mechanization was not as great as it had been in Great Britain.

In Germany the central government’s role was also greater than it had been in Great Britain. This was partly because the German government wanted to hasten the process and catch up with British industrialization. Germany used its rich iron and coal resources to develop heavy industry, such as iron and steel manufacture. It also proved to be an environment that encouraged big businesses and cooperation among large firms. The German banking sector, for example, was dominated by a few large banks that coordinated efforts to increase industry.

In Russia, the government made repeated efforts to enable industrialization, sometimes hiring foreigners to build and operate whole factories. On the whole, however, industrialization spread more slowly there, and the Russian economy remained overwhelmingly agricultural for a long time. Even in largely industrialized areas, such as western Europe and the United States, some areas lagged behind in industrial development. Southern Italy, Spain, and the American South remained largely agrarian until much later than their neighbors. In Asia, industrialization varied, although as a whole it came much later than Western European development.

In Japan, the first industrial Asian nation, the central government made industrialization a national goal during the late 19th century. Industrialization in some areas of China began in the early 20th century and increased near the end of the century. Other Asian and Pacific Rim countries, such as South Korea and Taiwan, began to industrialize after the 1960s.

In Southeast Asia, sub-Saharan Africa, India, and much of Latin America—areas that were colonies of Western nations, or that were dominated by other nations for long periods—industrialization was much more delayed than in many other areas. The legacies of colonialism made widespread change difficult because the society and economy of colonies were heavily controlled by and dependent on the parent country.

Although different cultures produced distinctive variations of an industrial revolution, the similarities are striking. Mechanization and urbanization were central to each area in which the Industrial Revolution succeeded, as were accompanying tensions and disruptions. In most societies, the truly revolutionary changes came during the first 75 to 100 years after the process of industrialization began. After that, factory production dominated manufacturing, and most people moved to cities.

|

V |

|

COSTS AND BENEFITS |

The modern, industrial societies created by the Industrial Revolution have come at some cost. The nature of work became worse for many people, and industrialization placed great pressures on traditional family structures as work moved outside the home. The economic and social distances between groups within industrial societies are often very wide, as is the disparity between rich industrial nations and poorer neighboring countries. The natural environment has also suffered from the effects of the Industrial Revolution. Pollution, deforestation, and the destruction of animal and plant habitats continue to increase as industrialization spreads.

Perhaps the greatest benefits of industrialization are increased material well-being and improved healthcare for many people in industrial societies. Modern industrial life also provides a constantly changing flood of new goods and services, giving consumers more choices. With both its negative aspects and its benefits, the Industrial Revolution has been one of the most influential and far-reaching movements in human history.

Recycling

|

I |

|

INTRODUCTION |

Recycling, collection, processing, and reuse of materials that would otherwise be thrown away. Materials ranging from precious metals to broken glass, from old newspapers to plastic spoons, can be recycled. The recycling process reclaims the original material and uses it in new products.

In general, using recycled materials to make new products costs less and requires less energy than using new materials. Recycling can also reduce pollution, either by reducing the demand for high-pollution alternatives or by minimizing the amount of pollution produced during the manufacturing process. Recycling decreases the amount of land needed for trash dumps by reducing the volume of discarded waste.

Recycling can be done internally (within a company) or externally (after a product is sold and used). In the paper industry, for example, internal recycling occurs when leftover stock and trimmings are salvaged to help make more new product. Since the recovered material never left the manufacturing plant, the final product is said to contain preconsumer waste. External recycling occurs when materials used by the customer are returned for processing into new products. Materials ready to be recycled in this manner, such as empty beverage containers, are called postconsumer waste.

|

II |

|

TYPES OF MATERIALS RECYCLED |

Just about any material can be recycled. On an industrial scale, the most commonly recycled materials are those that are used in large quantities—metals such as steel and aluminum, plastics, paper, glass, and certain chemicals.

|

A |

|

Steel |

There are two methods of making steel using recycled material: the basic oxygen furnace (BOF) method and the electric arc furnace (EAF) method. The BOF method involves mixing molten scrap steel in a furnace with new steel. About 28 percent of the new product is recycled steel. Steel made by the BOF method typically is used to make sheet-steel products like cans, automobiles, and appliances. The EAF method normally uses 100 percent recycled steel. Scrap steel is placed in a furnace and melted by electricity that arcs between two carbon electrodes. Limestone and other materials are added to the molten steel to remove impurities. Steel produced by the EAF method usually is formed into beams, reinforcing bars, and thick plate.

Approximately 68 percent of all steel is recycled, making it one of the world’s most recycled materials. In 1994, 37 billion steel cans, weighing 2,408,478 metric tons (2,654,892 U.S. tons), were used in the United States, of which 53 percent were recycled. In 1995 more than 63 million metric tons (70 million U.S. tons) of scrap steel were recycled in the United States.

|

B |

|

Aluminum |

Recycling aluminum in the United States provides a stable, domestic aluminum supply amounting to approximately one-third of the industry’s requirement. In contrast, most of the ore required to produce new aluminum must be imported from Jamaica, Australia, Surinam, Guyana, and Guinea. About 2 kg (about 4 lb) of ore, a mixture of aluminum oxides called bauxite, are needed to make 0.5 kg (1 lb) of aluminum.

The U.S. aluminum industry has recognized the advantage of a domestic aluminum supply and has established systems for collection, transportation, and processing. For this reason, aluminum cans almost always produce a profit in community recycling programs. A number of states require deposits for beverage containers and have established redemption centers at supermarkets. The overall recycling rate of all forms of aluminum is about 35 percent.

Cans brought to collection centers are crushed, baled, and shipped to regional mills or reclamation plants. The cans are then shredded to reduce volume and heated to remove coatings and moisture. Next, they are put into a furnace, melted, and formed into ingots, or bars, weighing about 13,600 kg (30,000 lb) or more. The ingots go to another mill to be rolled into sheets. The sheets are sent to a container plant and cut into disks from which new cans are formed. The cans are printed with the beverage makers’ logos and are shipped (with tops separate) to the filling plant.

About 100 billion aluminum beverage cans are used each year in the United States and about 65 percent of these are then recycled. The average aluminum can in the United States contains 40 percent postconsumer recycled aluminum. About 97 percent of all soft drink cans and 99 percent of all beer cans are made of aluminum.

|

C |

|

Plastics |

Plastics are more difficult to recycle than metal, paper, or glass. One problem is that any of seven categories of plastics can be used for containers alone. For effective recycling, the different types cannot be mixed. Most states require that plastic containers carry identification codes so they can be more easily identified and separated. The code assigns a particular number to each of the seven plastics used in packaging. The number 1 refers to polyethylene terephthalate (PET) and the number 2 refers to high-density polyethylene (HDPE). PET can be made into carpet or into fiberfill for ski jackets and clothing. HDPE can be recycled into construction fencing, landfill liners, and a variety of other products. Plastics coded with the number 6 are polystyrene (PS), which can be recycled into cafeteria trays, combs, and other items.

The recycling process for plastic normally involves cleaning it, shredding it into flakes, then melting the flakes into pellets. The pellets are melted into a final product. Some products work best with only a small percentage of recycled content. Other products, such as HDPE plastic milk cases, can be made successfully with 100 percent recycled content. The plastic container industry has concentrated on weight reduction and source reduction. For example, the one-gallon HDPE milk container that weighed about 120 gm (about 4.2 oz) in the 1960s weighed just 65 gm (about 2.3 oz) in 1996.

In the United States, the overall recycling of plastic was under 4.7 percent in 1994, with the recycling rate of plastic containers at about 19 percent. Most discarded plastic is in the form of plastic containers. Plastics made up about 9 percent of the waste stream by weight in 1995.

|

D |

|

Paper and Paper Products |

Paper products that can be recycled include cardboard containers, wrapping paper, and office paper. The most commonly recycled paper product is newsprint.

In newspaper recycling, old newspapers are collected and searched for contaminants such as plastic bags and aluminum foil. The paper goes to a processing plant where it is mixed with hot water and turned into pulp in a machine that works much like a big kitchen blender. The pulp is screened and filtered to remove smaller contaminants. The pulp then goes to a large vat where the ink separates from the paper fibers and floats to the surface. The ink is skimmed off, dried and reused as ink or burned as boiler fuel. The cleaned pulp is mixed with new wood fibers to be made into paper again.

Paper and paper products such as corrugated board constitute about 40 percent of the discards in the United States, making them the most plentiful single category of material in landfills. Experts estimate the average office worker generates about 7 kg (about 15 lb) of wastepaper (about 1,500 sheets) per month. Every ton of paper that is recycled saves about 1.4 cu m (about 50 cu ft) of landfill space. One ton of recycled paper saves 17 pulpwood trees (trees used to produce paper).

|

E |

|

Glass |

Scrap glass taken from the glass manufacturing process, called cullet, has been internally recycled for years. The scrap glass is economical to use as a raw material because it melts at lower temperatures than other raw materials, thus saving fuel and operating costs.

Glass that is to be recycled must be relatively free from impurities and sorted by color. Glass containers are the most commonly recycled form of glass, and their colors are flint (clear), amber (brown), and green. Other items, such as window glass, pottery, and cooking utensils, are considered contaminants because their compositions differ from that of the glass used in containers. The recycled glass is melted in a furnace and formed into new products.

Glass containers make up 90 percent of the total glass used in the United States. The 1994 recycling rate for glass was about 33 percent. Other uses for recycled glass include glass art and decorative tiles. Cullet mixed with asphalt forms a paving material called glassphalt.

|

F |

|

Chemicals and Hazardous Waste |

Household hazardous wastes include drain cleaners, oven cleaners, window cleaners, disinfectants, motor oil, paints, paint thinners, and pesticides. Most municipalities ban hazardous waste from the regular trash. Periodically, citizens are alerted that they can take their hazardous waste to a collection point where trained workers sort it, recycle what they can, and package the remainder in special leak-proof containers called lab packs, for safe disposal. Typical materials recycled from the collection drives are motor oil, paint, antifreeze, and tires.

Business and industry have made much progress in reducing both the hazardous waste they generate and its toxicity. Although large quantities of chemical solvents are used in cleaning processes, technology has been developed to clean and reuse solvents that used to be discarded. Even the vapors evaporated from the process are recovered and put back into the recycled solvent. Some processes that formerly used solvents no longer require them.

|

G |

|

Nuclear Waste |

Certain types of nuclear waste can be recycled, while other types are considered too dangerous to recycle. Low-level wastes include radioactive material from research activities, medical wastes, and contaminated machinery from nuclear reactors. Nickel is the major metal of construction in the nuclear power field and much of it is recycled after surface contamination has been removed.

High-level wastes come from the reprocessing of spent fuel (partially depleted reactor fuel) and from the processing of nuclear weapons. These wastes emit gamma radiation, which can cause birth defects, disease, and death. High-level nuclear waste is so toxic it is not normally recycled. Instead, it is fused into inert glass tubes encased in stainless steel cylinders, which are then stored underground.

Spent fuel can be reprocessed and recycled into new fuel elements, although fuel reprocessing was banned in the United States in 1977 and has never been resumed for legal, political, and economic reasons. However, spent fuel is being reprocessed in other countries such as Japan, Russia, and France. Spent fuel elements in the United States are kept in storage pools at each reactor site.

|

III |

|

REASONS FOR RECYCLING |

Rare materials, such as gold and silver, are recycled because acquiring new supplies is expensive. Other materials may not be as expensive to replace, but they are recycled to conserve energy, reduce pollution, conserve land, and to save money.

|

A |

|

Resource Conservation |

Recycling conserves natural resources by reducing the need for new material. Some natural resources are renewable, meaning they can be replaced, and some are not. Paper, corrugated board, and other paper products come from renewable timber sources. Trees harvested to make those products can be replaced by growing more trees. Iron and aluminum come from nonrenewable ore deposits. Once a deposit is mined, it cannot be replaced.

|

B |

|

Energy Conservation |

Recycling saves energy by reducing the need to process new material, which usually requires more energy than the recycling process. The amount of energy saved in recycling one aluminum can is equivalent to the energy in the gasoline that would fill half of that same can. To make an aluminum can from recycled metal takes only 5 percent of the total energy needed to produce the same aluminum can from unrecycled materials, a 95 percent energy savings. Recycled paper and paperboard require 75 percent less energy to produce than new products. Significant energy savings result in the recycling of steel and glass, as well.
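The aluminum figures above lend themselves to a small worked example. The sketch below assumes, purely for illustration, that energy use scales linearly with the share of recycled metal in a can; the function name is hypothetical, and the 40 percent recycled share is the average quoted in the Aluminum section.

```python
# Energy to make a can from recycled metal, as a fraction of the energy
# needed when starting from new material (the 5 percent figure in the text).
RECYCLED_ENERGY_FRACTION = 0.05

def relative_energy(recycled_share: float) -> float:
    """Energy per can relative to a can made entirely from new material (1.0).

    Illustrative assumption: energy scales linearly with the recycled share.
    """
    if not 0.0 <= recycled_share <= 1.0:
        raise ValueError("recycled_share must be between 0 and 1")
    new_share = 1.0 - recycled_share
    return recycled_share * RECYCLED_ENERGY_FRACTION + new_share

all_recycled = relative_energy(1.0)   # can made wholly from recycled metal
average_can = relative_energy(0.40)   # 40 percent postconsumer content

print(f"All-recycled can: {all_recycled:.0%} of new-material energy")
print(f"Average can:      {average_can:.0%} of new-material energy")
```

Under that linear assumption, a can with 40 percent recycled content needs roughly three-fifths of the energy of a can made wholly from new material.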

|

C |

|

Pollution Reduction |

Recycling reduces pollution because recycling a product creates less pollution than producing a new one. For every ton of newspaper recycled, 7 fewer kg (16 lb) of air pollutants are pumped into the atmosphere. Recycling can also reduce pollution by supplying safer materials to replace those that pollute. Some countries still use chlorofluorocarbons (CFCs) to manufacture foam products such as cups and plates. Many scientists suspect that CFCs harm the atmosphere’s protective layer of ozone. Using recycled plastic for those products instead avoids the need to produce harmful CFCs.

|

D |

|

Land Conservation |

Recycling saves valuable landfill space, land that must be set aside for dumping trash, construction debris, and yard waste (see Solid Waste Disposal: Landfill). In the United States, each person on average discards almost a ton of municipal solid waste (MSW) per year. MSW is raw, untreated garbage of the kind discarded by homes and small businesses. Waste from industry and agriculture normally is not part of MSW, but construction and demolition wastes are. The United States has the highest MSW discard level of any country in the world.

Landfills fill up quickly and acceptable sites for new ones are difficult to find because of objections by neighbors to noise and smells, and the hazard of leaks into underground water supplies. The two major ways to reduce the need for new landfills are to generate less initial waste and to recycle products that would normally be considered waste.

In 1994 about 6.8 million metric tons (7.5 million U.S. tons) of food and yard debris were composted in the United States, accounting for about one-sixth of the overall 23.6 percent recycling rate. The combined effort of reducing waste and recycling resulted in 41 million fewer metric tons (45 million U.S. tons) of material going to landfills.
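The paired tonnage figures and the composting share in this paragraph can be checked with a brief calculation. This is a sketch only: the helper name is an assumption, and the conversion uses the standard definition of the U.S. (short) ton as 907.18474 kg.

```python
KG_PER_METRIC_TON = 1000.0
KG_PER_US_TON = 907.18474  # short ton of 2,000 lb

def metric_to_us_tons(metric_tons: float) -> float:
    """Convert metric tons to U.S. (short) tons."""
    return metric_tons * KG_PER_METRIC_TON / KG_PER_US_TON

# Figures quoted in the paragraph above, in millions of metric tons.
composted_us = metric_to_us_tons(6.8)
diverted_us = metric_to_us_tons(41)

# Composting is said to account for about one-sixth of the 23.6 percent rate.
composting_points = 23.6 / 6

print(f"Composted: {composted_us:.1f} million U.S. tons")
print(f"Diverted:  {diverted_us:.0f} million U.S. tons")
print(f"Composting share: about {composting_points:.1f} percentage points")
```

The result suggests that composting contributed roughly 4 of the 23.6 percentage points.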

Solid waste can also be burned instead of buried in the ground. Typically, waste-to-energy (WTE) facilities burn trash to heat water for steam-turbine electrical generators. This WTE recycling keeps another 16 percent of municipal solid waste out of the landfills.

|

E |

|

Economic Savings |

In the short term, recycling does not always turn a profit or even break even. Most experts contend, however, that the economic consequences of recycling are positive in the long term. Recycling saves money when potential landfill sites are put to more productive uses and when the number of pollution-related illnesses falls.

|

IV |

|

HISTORY |

People have recycled materials throughout history. Metal tools and weapons have been melted, reformed, and reused since they came into use thousands of years ago. The iron, steel, and paper industries have almost always used recycled materials. Recycling rates were modest in the United States up through the 1960s, although rates increased during World War II (1939-1945). Since the 1960s, recycling has steadily increased. Recycling in the United States between 1960 and 1994 rose from 5.35 million metric tons (5.9 million U.S. tons) per year to 44.7 million metric tons (49.3 million U.S. tons). In 1960 about 7 percent of municipal solid waste was recycled. By 1994 that amount had climbed to 23.6 percent. Experts predict the MSW recycling rate will reach 30 percent by the year 2000.
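To put the tonnage growth in perspective, the following sketch derives an average annual growth rate from the 1960 and 1994 figures above. The rate is not stated in the source. It assumes smooth compound growth, which real recycling volumes only approximate.

```python
def compound_annual_growth(start: float, end: float, years: int) -> float:
    """Average annual growth rate that takes `start` to `end` over `years`."""
    if start <= 0 or years <= 0:
        raise ValueError("start and years must be positive")
    return (end / start) ** (1 / years) - 1

# Millions of metric tons recycled per year, as quoted in the text.
tons_1960 = 5.35
tons_1994 = 44.7

rate = compound_annual_growth(tons_1960, tons_1994, years=1994 - 1960)
print(f"Average growth: {rate:.1%} per year")
```

On these figures, the volume of recycled material grew by a little over 6 percent a year on average.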

European countries have a long history of recycling and, in some cases, stiff requirements. In 1991 the German parliament approved legislation setting recycling targets of 80 to 90 percent for packaging materials and banning the sale of products from companies that do not cooperate. France has set specific recycling goals. Other countries with significant overall recycling rates include Spain at 29 percent, Switzerland at 28 percent, and Japan at 23 percent.

Steam

Steam, water in vapor state, used in the generation of power and on a large scale in many industrial processes. The techniques of generating and using steam, therefore, are important components of engineering technology. The generation of electricity is largely accomplished by first generating steam, whether the heat is produced by burning coal or gas or by the nuclear fission of uranium (see Nuclear Energy; Steam Engine; Turbine). Steam also is still much in use for space heating purposes (see Heating, Ventilating, and Air Conditioning), and it propels most of the world's naval vessels and commercial ships (see Ships and Shipbuilding).

The boiling point of water at sea-level atmospheric pressure (760 torr or 14.7 lb/sq in) is about 100° C (212° F). At this temperature, the addition of 970.3 Btu of heat will convert 0.454 kg (1 lb) of water to 0.454 kg of steam at the same temperature. For water under pressure, the boiling point rises as the pressure increases, up to a pressure of 218 atmospheres (165,000 torr or 3200 lb/sq in). At this pressure, water boils at a temperature of 374° C (705° F), its critical point. Beyond the critical pressure and temperature there is no distinction between liquid water and steam.
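The heat of vaporization quoted above can be restated in metric units with a short calculation. This is a minimal sketch: the constant and function names are illustrative, and the conversion factors are the standard definitions of the International Table Btu and the avoirdupois pound.

```python
BTU_TO_KJ = 1.05505585262   # one International Table Btu in kilojoules
LB_TO_KG = 0.45359237       # one pound in kilograms (exact by definition)

# Heat needed to turn 1 lb of boiling water into steam, from the text.
LATENT_HEAT_BTU_PER_LB = 970.3

# The same quantity expressed per kilogram of water.
latent_heat_kj_per_kg = LATENT_HEAT_BTU_PER_LB * BTU_TO_KJ / LB_TO_KG

def heat_to_vaporize_kj(mass_kg: float) -> float:
    """Heat in kJ to convert `mass_kg` of water at 100 C to steam at 100 C."""
    return mass_kg * latent_heat_kj_per_kg

print(f"Latent heat: {latent_heat_kj_per_kg:.0f} kJ/kg")
print(f"Heat for 10 kg of water: {heat_to_vaporize_kj(10):.0f} kJ")
```

The result, about 2,257 kJ per kilogram, agrees with the value commonly tabulated for water at atmospheric pressure.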

Pure steam is a dry and invisible vapor. In many cases, however, when water is boiling, a quantity of small droplets, or particles, of water are taken up with the steam, and the resulting mixture is visible as a white vapor. A similar effect occurs when dry steam is exhausted into the comparatively cool atmosphere. Some of the steam cools and condenses, forming the familiar white vapor seen when a kettle boils on a stove. Such steam is said to be wet.

Steam that is heated to the exact boiling point corresponding to the existing pressure is called saturated steam. Heating steam beyond this temperature produces so-called superheated steam. Superheating also occurs if saturated steam is compressed or if saturated steam is throttled by passing the steam through a valve from a high-pressure vessel to a low-pressure vessel. Throttling causes the temperature of the steam to drop somewhat, but the temperature of the throttled steam is still higher than that of saturated steam at the corresponding pressure. Steam in its superheated state is generally used in modern power generation systems.

Energy Supply, World

|

I |

|

INTRODUCTION |

Energy Supply, World, combined resources by which the nations of the world attempt to meet their energy needs. Energy is the basis of industrial civilization; without energy, modern life would cease to exist. During the 1970s the world began a painful adjustment to the vulnerability of energy supplies. In the long run, conserving energy resources may provide the time needed to develop new sources of energy, such as hydrogen fuel cells, or to further develop alternative energy sources, such as solar energy and wind energy. While this development occurs, however, the world will continue to be vulnerable to disruptions in the supply of oil, which, after World War II (1939-1945), became the most favored energy source.

|

II |

|

BACKGROUND OF TODAY’S SITUATION |

Wood was the first and, for most of human history, the major source of energy. It was readily available, because extensive forests grew in many parts of the world and the amount of wood needed for heating and cooking was relatively modest. Certain other energy sources, found only in localized areas, were also used in ancient times: asphalt, coal, and peat from surface deposits and oil from seepages of underground deposits.

This situation changed when wood began to be used during the Middle Ages to make charcoal. The charcoal was heated with metal ore to break up chemical compounds and free the metal. As forests were cut and wood supplies dwindled at the onset of the Industrial Revolution in the mid-18th century, charcoal was replaced by coke (produced from coal) in the reduction of ores. Coal, which also began to be used to drive steam engines, became the dominant energy source as the Industrial Revolution proceeded.

|

A |

|

Growth of Petroleum Use |

Although for centuries petroleum (also known as crude oil) had been used in small quantities for purposes as diverse as medicine and ship caulking, the modern petroleum era began when a commercial well was brought into production in Pennsylvania in 1859. The oil industry in the United States expanded rapidly as refineries sprang up to make oil products from crude oil. The oil companies soon began exporting their principal product, kerosene—used for lighting—to all areas of the world. The development of the internal-combustion engine and the automobile at the end of the 19th century created a vast new market for another major product, gasoline. A third major product, heavy oil, began to replace coal in some energy markets after World War II.

The major oil companies, which are based principally in the United States, initially found large oil supplies in the United States. As a result, oil companies from other countries—especially Britain, the Netherlands, and France—began to search for oil in many parts of the world, especially the Middle East. The British brought the first field there (in Iran) into production just before World War I (1914-1918). During World War I, the U.S. oil industry produced two-thirds of the world’s oil supply from domestic sources and imported another one-sixth from Mexico. At the end of the war and before the discovery of the productive East Texas fields in 1930, however, the United States, with its reserves strained by the war, became a net oil importer for a few years.

During the next three decades, with occasional federal support, the U.S. oil companies were enormously successful in expanding in the rest of the world. By 1955 the five major U.S. oil companies produced two-thirds of the oil for the world oil market (not including North America and the Soviet bloc). Two British-based companies produced almost one-third of the world’s oil supply, and the French produced a mere one-fiftieth. The next 15 years were a period of serenity for energy supplies. The seven major U.S. and British oil companies provided the world with increasing quantities of cheap oil. The world price was about a dollar a barrel, and during this time the United States was largely self-sufficient, with its imports limited by a quota.

|

B |

|

Formation of OPEC |

Two series of events coincided to change this secure supply of cheap oil into an insecure supply of expensive oil. In 1960, enraged by unilateral cuts in oil prices by the seven big oil companies, the governments of the major oil-exporting countries formed the Organization of Petroleum Exporting Countries, or OPEC. OPEC’s goal was to try to prevent further cuts in the price that the member countries—Venezuela and four countries around the Persian Gulf—received for oil. They succeeded, but for a decade they were unable to raise prices. In the meantime, increasing oil consumption throughout the world, especially in Europe and Japan, where oil displaced coal as a primary source of energy, caused an enormous expansion in the demand for oil products.

|

C |

|

The Energy Crisis |

The year 1973 brought an end to the era of secure, cheap oil. In October, as a result of the Arab-Israeli War, the Arab oil-producing countries cut back oil production and embargoed oil shipments to the United States and the Netherlands. Although the Arab cutbacks represented a loss of less than 7 percent in world supply, they created panic on the part of oil companies, consumers, oil traders, and some governments. Wild bidding for crude oil ensued when a few producing nations began to auction off some of their oil. This bidding encouraged the OPEC nations, which now numbered 13, to raise the price of all their crude oil to a level as high as eight times that of a few years earlier. The world oil scene gradually calmed, as a worldwide recession brought on in part by the higher oil prices trimmed the demand for oil. In the meantime, most OPEC governments took over ownership of the oil fields in their countries.

In 1978 a second oil crisis began when, as a result of the revolution that eventually drove the Shah of Iran from his throne, Iranian oil production and exports dropped precipitously. Because Iran had been a major exporter, consumers again panicked. A replay of 1973 events, complete with wild bidding, again forced up oil prices during 1979. The outbreak of war between Iran and Iraq in 1980 gave a further boost to oil prices. By the end of 1980 the price of crude oil stood at 19 times what it had been just ten years earlier.

The very high oil prices again contributed to a worldwide recession and gave energy conservation a big push. As oil demand slackened and supplies increased, the world oil market slumped. Significant increases in non-OPEC oil supplies, such as those in the North Sea, Mexico, Brazil, Egypt, China, and India, pushed oil prices even lower. Production in the Soviet Union reached 11.42 million barrels per day by 1989, accounting for 19.2 percent of world production in that year.
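The Soviet figures above imply a total for world production in 1989. The short Python sketch below works out that total from the two numbers given in the text; the function name is illustrative only, and the result is a back-of-the-envelope estimate rather than a reported statistic.

```python
# Figures quoted in the text for 1989.
soviet_mbd = 11.42     # Soviet production, million barrels per day
soviet_share = 0.192   # Soviet share of world production (19.2 percent)

def implied_total(part, share):
    """Return the whole implied by a part and its fractional share."""
    if not 0 < share <= 1:
        raise ValueError("share must be a fraction between 0 and 1")
    return part / share

world_mbd = implied_total(soviet_mbd, soviet_share)
print(f"Implied world production in 1989: {world_mbd:.1f} million barrels per day")
```

On these figures, world production in 1989 works out to a little under 60 million barrels per day.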

Despite the low world oil prices that have prevailed since 1986, concern over disruption has continued to be a major focus of energy policy in the industrialized countries. The short-term increases in prices following Iraq’s invasion of Kuwait in 1990 reinforced this concern. Owing to its vast reserves, the Middle East will continue to be the major source of oil for the foreseeable future. However, new discoveries in the Caspian Sea region suggest that countries such as Kazakhstan may become major sources of petroleum in the 21st century.

|

D |

|

Current Status |

In the 1990s, oil production by non-OPEC countries remained strong and production by OPEC countries rebounded. The result at the end of the 20th century was a world oil surplus and prices (when adjusted for inflation) that were lower than in 1972.

Experts are uncertain about future oil supplies and prices. Low prices have spurred greater oil consumption, and experts question how long world petroleum reserves can keep pace with increased demand. Many of the world’s leading petroleum geologists believe the world oil supply will peak around 80 million barrels per day between 2010 and 2020. (In 1998 world consumption was approximately 70 million barrels per day.) On the other hand, many economists believe that even modestly higher oil prices might lead to greater supply, since the oil companies would then have the economic incentive to exploit less accessible oil deposits.
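To put the geologists' estimate in perspective, the following Python sketch computes the average yearly growth that would carry output from the 1998 level of about 70 million barrels per day to a peak of 80 million. It assumes smooth compound growth and treats 1998 consumption as equal to supply; both are simplifications made here for illustration, not claims from the text.

```python
def annual_growth_rate(start, end, years):
    """Compound annual growth rate that takes `start` to `end` in `years` years."""
    if start <= 0 or end <= 0 or years <= 0:
        raise ValueError("start, end, and years must all be positive")
    return (end / start) ** (1 / years) - 1

base_year, base_mbd = 1998, 70.0   # from the text
peak_mbd = 80.0                    # from the text

# Evaluate both ends of the 2010 to 2020 window given in the text.
rate_2010 = annual_growth_rate(base_mbd, peak_mbd, 2010 - base_year)
rate_2020 = annual_growth_rate(base_mbd, peak_mbd, 2020 - base_year)

print(f"Peak in 2010: {rate_2010:.2%} growth per year")
print(f"Peak in 2020: {rate_2020:.2%} growth per year")
```

Under these assumptions, the required growth is roughly 1 percent per year or less, which suggests how little room for growth the estimated peak allows.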

Natural gas may be increasingly used in place of oil for applications such as power generation and transportation. One reason is that world reserves of natural gas have doubled since 1976, in part because of the discovery of major deposits of natural gas in Russia and in the Middle East. New facilities and pipelines are being constructed to help process and transport this natural gas from production wells to consumers.

|

III |

|

PETROLEUM AND NATURAL GAS |

Petroleum (crude oil) and natural gas are found in commercial quantities in sedimentary basins in more than 50 countries in all parts of the world. The largest deposits are in the Middle East, which contains more than half the known oil reserves and almost one-third of the known natural-gas reserves. The United States contains only about 2 percent of the known oil reserves and 3 percent of the known natural-gas reserves.

|

A |

|

Drilling |

Geologists and other scientists have developed techniques that indicate the possibility of oil or gas being found deep in the ground. These techniques include taking aerial photographs of special surface features, sending shock waves through the earth and reflecting them back into instruments, and measuring the earth’s gravity and magnetic field with sensitive meters. Nevertheless, the only way to confirm that oil or gas is actually present is to drill a hole into the reservoir. In some cases oil companies spend many millions of dollars drilling in promising areas, only to find dry holes. For a long time, most wells were drilled on land, but after World War II drilling commenced in shallow water from platforms supported by legs that rested on the sea bottom. Later, floating platforms were developed that could drill at water depths of 1,000 m (3,300 ft) or more. Large oil and gas fields have been found offshore: in the United States, mainly off the Gulf Coast; in Europe, primarily in the North Sea; in Russia, in the Barents Sea and the Kara Sea; and off Newfoundland and Brazil. Most major finds in the future may be offshore.

|

B |

|

Production |

As crude oil or natural gas is produced from an oil or gas field, the pressure in the reservoir that forces the material to the surface gradually declines. Eventually, the pressure will decline so much that the remaining oil or gas will not migrate through the porous rock to the well. When this point is reached, most of the gas in a gas field will have been produced, but less than one-third of the oil will have been extracted. Part of the remaining oil can be recovered by using water or carbon dioxide gas to push the oil to the well, but even then, one-fourth to one-half of the oil is usually left in the reservoir. In an effort to extract this remaining oil, oil companies have begun to use chemicals to push the oil to the well, or to use fire or steam in the reservoir to make the oil flow more easily. New techniques that allow operators to drill horizontally, as well as vertically, into very deep structures have dramatically reduced the cost of finding natural gas and oil supplies.
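The recovery fractions in this paragraph can be made concrete with a small calculation. The Python sketch below applies them to a hypothetical field holding 100 million barrels of oil; the field size and the function name are assumptions chosen for illustration, and the fractions are the rough bounds stated above.

```python
from fractions import Fraction

def recovery_bounds(oil_in_place,
                    primary_max=Fraction(1, 3),   # "less than one-third" under natural pressure
                    left_min=Fraction(1, 4),      # least oil left after water or CO2 flooding
                    left_max=Fraction(1, 2)):     # most oil left after water or CO2 flooding
    """Return (upper bound on primary recovery, low and high cumulative recovery)."""
    primary = oil_in_place * primary_max
    total_low = oil_in_place * (1 - left_max)
    total_high = oil_in_place * (1 - left_min)
    return primary, total_low, total_high

# Hypothetical field: 100 million barrels of oil in place.
primary, total_low, total_high = recovery_bounds(100)

print(f"Natural pressure alone: under {float(primary):.1f} million barrels")
print(f"After flooding: {float(total_low):.0f} to {float(total_high):.0f} million barrels in total")
```

For this hypothetical field, flooding raises cumulative recovery from under a third of the oil to between half and three-quarters of it, which is why the remaining fraction is the target of the chemical and thermal methods described above.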

Crude oil is transported to refineries by pipelines, barges, or giant oceangoing tankers. Refineries contain a series of processing units that separate the different constituents of the crude oil by heating them to different temperatures, chemically modifying them, and then blending them to make final products. These final products are principally gasoline, kerosene, diesel oil, jet fuel, home heating oil, heavy fuel oil, lubricants, and feedstocks, or starting materials, for petrochemicals.

Natural gas is transported, usually by pipelines, to customers who burn it for fuel or, in some cases, make petrochemicals from chemicals extracted, or “stripped,” from it. Natural gas can be liquefied at very low temperatures and transported in special ships. This method is much more costly than transporting oil by tanker. Oil and natural gas compete in a number of markets, especially in generating heat for homes, offices, factories, and industrial processes.

|

C |

|

Pollution Problems |

In its early days, the oil industry generated considerable environmental pollution. Through the years, however, under the dual influences of improved technology and more stringent regulations, it has become much cleaner. The effluents from refineries have decreased greatly and, although well blowouts still occur, new technology has tended to make them relatively rare. The policing of the oceans, on the other hand, is much more difficult. Oceangoing ships are still a major source of oil spills. In 1990 the Congress of the United States passed legislation requiring tankers to be double hulled by the end of the decade.

Another source of pollution connected with the oil industry is the sulfur in crude oil. Regulations of national and local governments restrict the amount of sulfur dioxide that can be discharged by factories and utilities burning fuel oil. Because removing sulfur is expensive, however, regulations still allow some sulfur dioxide to be discharged into the air.

Many scientists believe that another potential environmental problem from refining and burning large amounts of oil and other fossil fuels (such as coal and natural gas) occurs when carbon dioxide (a by-product of the burning of fossil fuels), methane (which exists in natural gas and is also a by-product of refining petroleum), and other by-product gases accumulate in the atmosphere. These gases are known as greenhouse gases, because they trap some of the energy from the Sun that penetrates Earth’s atmosphere. This energy, trapped in the form of heat, maintains Earth at a temperature that is hospitable to life. Certain amounts of greenhouse gases occur naturally in the atmosphere. However, the immense quantities of petroleum, coal, and other fossil fuels burned during the world’s rapid industrialization over the last 200 years are a contributing source of higher levels of carbon dioxide in the atmosphere. During that time period, these levels have increased by about 28 percent. This increase in atmospheric carbon dioxide, coupled with the continuing loss of the world’s forests (which absorb carbon dioxide), has led many scientists to predict a rise in global temperature. This increase in global temperature might disrupt weather patterns, disrupt ocean currents, lead to more violent storms, and create other environmental problems.

In 1992 representatives of over 150 countries convened in Rio de Janeiro, Brazil, and agreed on the need to reduce the world’s emissions of greenhouse gases. In 1997 world delegations again convened, this time in Kyōto, Japan. During the Kyōto meeting, representatives of 160 nations signed an agreement known as the Kyōto Protocol, which would require 38 industrialized nations to limit emissions of greenhouse gases to levels that are an average of 5 percent below the emission levels of 1990. In order to reduce their fossil fuel emissions to achieve these levels, the industrialized nations would have to shift their energy mix toward energy sources that do not produce as much carbon dioxide, such as natural gas, or to alternative energy sources, such as hydroelectric energy, solar energy, wind energy, or nuclear energy. While the governments of some industrialized nations have ratified the Kyōto Protocol, others have not, including that of the United States.

|

D |

|

Reserves |

Oil shale, heavy oil deposits, and tar sands are the most prevalent forms of petroleum found in the world. Reserves of these sources are many times more abundant than the world’s total known reserves of crude oil. Because of the high cost of converting oil shale and tar sands into usable petroleum products, however, only a small percentage of the available material is processed commercially. An industry to make oil products from tar sands has been started in Canada, and Venezuela is looking at the prospects of developing the vast reserves of tar sands in its Orinoco River basin. Nevertheless, the quantity of oil products made from oil shale and tar sands is small compared with the total production of conventional crude oil, and it will likely remain so until world petroleum prices increase.

|

IV |

|

COAL |

Coal is a general term for a wide variety of solid materials that are high in carbon content. Most coal is burned by electric utility companies to produce steam to turn their generators. Some coal is used in factories to provide heat for buildings and industrial processes. A special, high-quality coal is turned into metallurgical coke for use in making steel.

|

A |

|

Reserves |