

io_node_list (comma separated list of strings) [SAME]: This hint specifies the list of I/O devices that should be used to store the file. This hint is most relevant when the file is created.

nb_proc (integer) [SAME]: This hint specifies the number of parallel processes that will typically be assigned to run programs that access this file. This hint is most relevant when the file is created.

num_io_nodes (integer) [SAME]: This hint specifies the number of I/O devices in the system. This hint is most relevant when the file is created.

striping_factor (integer) [SAME]: This hint specifies the number of I/O devices that the file should be striped across, and is relevant only when the file is created.

striping_unit (integer) [SAME]: This hint specifies the suggested striping unit to be used for this file. The striping unit is the amount of consecutive data assigned to one I/O device before progressing to the next device, when striping across a number of devices. It is expressed in bytes. This hint is relevant only when the file is created.

13.3 File Views

MPI_FILE_SET_VIEW(fh, disp, etype, filetype, datarep, info)

| Intent | Parameter | Description |
|---|---|---|
| INOUT | fh | file handle (handle) |
| IN | disp | displacement (integer) |
| IN | etype | elementary datatype (handle) |
| IN | filetype | filetype (handle) |
| IN | datarep | data representation (string) |
| IN | info | info object (handle) |

int MPI_File_set_view(MPI_File fh, MPI_Offset disp, MPI_Datatype etype, MPI_Datatype filetype, char *datarep, MPI_Info info)

MPI_FILE_SET_VIEW(FH, DISP, ETYPE, FILETYPE, DATAREP, INFO, IERROR)

INTEGER FH, ETYPE, FILETYPE, INFO, IERROR

CHARACTER*(*) DATAREP

INTEGER(KIND=MPI_OFFSET_KIND) DISP

{void MPI::File::Set_view(MPI::Offset disp, const MPI::Datatype& etype, const MPI::Datatype& filetype, const char* datarep, const MPI::Info& info) (binding deprecated, see Section 15.2) }

The MPI_FILE_SET_VIEW routine changes the process's view of the data in the file. The start of the view is set to disp; the type of data is set to etype; the distribution of data to processes is set to filetype; and the representation of data in the file is set to datarep. In addition, MPI_FILE_SET_VIEW resets the individual file pointers and the shared file pointer to zero. MPI_FILE_SET_VIEW is collective; the values for datarep and the extents of etype in the file data representation must be identical on all processes in the group; values for disp, filetype, and info may vary. The datatypes passed in etype and filetype must be committed.

The etype always specifies the data layout in the file. If etype is a portable datatype (see Section 2.4, page 11), the extent of etype is computed by scaling any displacements in the datatype to match the file data representation. If etype is not a portable datatype, no scaling is done when computing the extent of etype. The user must be careful when using nonportable etypes in heterogeneous environments; see Section 13.5.1, page 430 for further details.

If MPI_MODE_SEQUENTIAL mode was specified when the file was opened, the special displacement MPI_DISPLACEMENT_CURRENT must be passed in disp. This sets the displacement to the current position of the shared file pointer. MPI_DISPLACEMENT_CURRENT is invalid unless the amode for the file has MPI_MODE_SEQUENTIAL set.

Rationale. For some sequential files, such as those corresponding to magnetic tapes or streaming network connections, the displacement may not be meaningful. MPI_DISPLACEMENT_CURRENT allows the view to be changed for these types of files. (End of rationale.)

Advice to implementors. It is expected that a call to MPI_FILE_SET_VIEW will immediately follow MPI_FILE_OPEN in numerous instances. A high-quality implementation will ensure that this behavior is efficient. (End of advice to implementors.)

The disp displacement argument specifies the position (absolute offset in bytes from the beginning of the file) where the view begins.

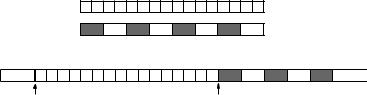

27Advice to users. disp can be used to skip headers or when the le includes a sequence

28of data segments that are to be accessed in di erent patterns (see Figure 13.3). Sep-

29arate views, each using a di erent displacement and letype, can be used to access

30each segment.

31

first view 000111 000111 000111 000111 000111 000111 000111 000111

32

second view

33

34 |

file structure: |

111000 |

111000 |

111000 |

111000 |

111000 |

111000 |

... |

|

|

|||||||||

35 |

header 111000 |

111000 |

|||||||

|

|

|

|

|

|

|

|

|

|

36 |

|

|

|

|

|

|

|

|

|

37

38

39

40

41

first displacement |

second displacement |

Figure 13.3: Displacements

(End of advice to users.)

An etype (elementary datatype) is the unit of data access and positioning. It can be any MPI predefined or derived datatype. Derived etypes can be constructed by using any of the MPI datatype constructor routines, provided all resulting typemap displacements are non-negative and monotonically nondecreasing. Data access is performed in etype units, reading or writing whole data items of type etype. Offsets are expressed as a count of etypes; file pointers point to the beginning of etypes.


Advice to users. In order to ensure interoperability in a heterogeneous environment, additional restrictions must be observed when constructing the etype (see Section 13.5, page 428). (End of advice to users.)
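The displacement rule for derived etypes can be checked mechanically. The following is a minimal sketch in plain C, not an MPI routine: the function name `etype_displacements_ok` and the representation of a typemap as an array of byte displacements are assumptions made for illustration.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

/* Hypothetical helper, not part of MPI: returns true when every typemap
 * displacement is non-negative and the sequence never decreases.
 * Equal neighbouring displacements are accepted (nondecreasing). */
bool etype_displacements_ok(const long *disps, size_t n)
{
    assert(disps != NULL || n == 0);
    for (size_t i = 0; i < n; i++) {
        if (disps[i] < 0)
            return false;                 /* negative displacement */
        if (i > 0 && disps[i] < disps[i - 1])
            return false;                 /* sequence decreases */
    }
    return true;
}
```

Under these assumptions, a typemap with displacements 0, 4, 4, 12 would be accepted, while 0, 8, 4 would be rejected because the sequence decreases.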

A filetype is either a single etype or a derived MPI datatype constructed from multiple instances of the same etype. In addition, the extent of any hole in the filetype must be a multiple of the etype's extent. The displacements in the typemap of the filetype are not required to be distinct, but they cannot be negative, and they must be monotonically nondecreasing.
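The hole rule can be illustrated with a small model. This sketch is plain C rather than MPI: the function `filetype_holes_ok`, and the description of a filetype by the byte displacements of its etype instances plus its total extent, are assumptions for illustration. It also assumes each etype instance occupies exactly one etype extent and that the lower bound is byte 0.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

/* Hypothetical model, not an MPI call: disps holds the byte displacement of
 * each etype instance (nondecreasing), measured from byte 0 of the filetype. */
bool filetype_holes_ok(const long *disps, size_t n,
                       long etype_extent, long filetype_extent)
{
    assert(etype_extent > 0);
    long covered_to = 0;                  /* end of the data seen so far */
    for (size_t i = 0; i < n; i++) {
        long hole = disps[i] - covered_to;
        if (hole > 0 && hole % etype_extent != 0)
            return false;                 /* hole is not a whole number of etypes */
        long end = disps[i] + etype_extent;
        if (end > covered_to)
            covered_to = end;
    }
    long tail = filetype_extent - covered_to;   /* trailing hole, if any */
    return tail >= 0 && tail % etype_extent == 0;
}
```

With a 4-byte etype, instances at bytes 0 and 8 inside a 16-byte extent leave two 4-byte holes and pass; instances at bytes 0 and 6 leave a 2-byte hole and fail.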

If the file is opened for writing, neither the etype nor the filetype is permitted to contain overlapping regions. This restriction is equivalent to the "datatype used in a receive cannot specify overlapping regions" restriction for communication. Note that filetypes from different processes may still overlap each other.
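A check for overlapping regions within one datatype might look like the sketch below. It is plain C, not an MPI routine; `struct block` and `has_overlap` are hypothetical names, and the datatype is assumed to be already flattened into contiguous byte blocks sorted by nondecreasing displacement.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

/* Hypothetical descriptor for one contiguous block of a flattened datatype. */
struct block {
    long disp;   /* byte displacement of the block */
    long len;    /* block length in bytes */
};

/* Returns true if any two non-empty blocks share a byte. Assumes the blocks
 * are sorted by nondecreasing displacement. */
bool has_overlap(const struct block *b, size_t n)
{
    long covered_to = 0;                  /* first byte not yet covered */
    for (size_t i = 0; i < n; i++) {
        assert(b[i].disp >= 0 && b[i].len >= 0);
        if (b[i].len > 0 && b[i].disp < covered_to)
            return true;                  /* starts inside earlier data */
        long end = b[i].disp + b[i].len;
        if (end > covered_to)
            covered_to = end;
    }
    return false;
}
```

Two blocks at the same displacement, which the nondecreasing rule permits, are reported as overlapping here, so under this model such a type would be unsuitable for a file opened for writing.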

If filetype has holes in it, then the data in the holes is inaccessible to the calling process. However, the disp, etype and filetype arguments can be changed via future calls to MPI_FILE_SET_VIEW to access a different part of the file.
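To see how disp, etype, and filetype together decide which bytes a process can reach, consider the following sketch. It is plain C, not an MPI routine, and it assumes the filetype pattern repeats end to end starting at disp, as in the standard's definition of a view. The function `view_offset_to_byte` and its parameters are hypothetical: the filetype is modelled as a tile of `slots_per_tile` etype-sized slots, of which only the slots listed in `visible_slots` hold data, and the rest are holes.

```c
#include <assert.h>

/* Hypothetical model: maps offset k, counted in etypes relative to the view,
 * to an absolute byte position in the file. */
long long view_offset_to_byte(long long disp, long long etype_extent,
                              const int *visible_slots, int n_visible,
                              int slots_per_tile, long long k)
{
    assert(n_visible > 0 && slots_per_tile >= n_visible && k >= 0);
    long long tile = k / n_visible;               /* which repetition of the filetype */
    int slot = visible_slots[k % n_visible];      /* slot inside that repetition */
    return disp + (tile * slots_per_tile + slot) * etype_extent;
}
```

For a 100-byte header (disp = 100), a 4-byte etype, and a four-slot tile in which only slots 0 and 1 are visible, view offsets 0, 1, 2 map to bytes 100, 104, 116. Bytes 108 through 115 fall in the holes and are never produced; a second view whose visible slots are 2 and 3 would reach them.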

It is erroneous to use absolute addresses in the construction of the etype and filetype.

The info argument is used to provide information regarding file access patterns and file system specifics to direct optimization (see Section 13.2.8, page 398). The constant MPI_INFO_NULL refers to the null info and can be used when no info needs to be specified.

The datarep argument is a string that specifies the representation of data in the file. See the file interoperability section (Section 13.5, page 428) for details and a discussion of valid values.

The user is responsible for ensuring that all nonblocking requests and split collective operations on fh have been completed before calling MPI_FILE_SET_VIEW; otherwise, the call to MPI_FILE_SET_VIEW is erroneous.

MPI_FILE_GET_VIEW(fh, disp, etype, filetype, datarep)

| Intent | Parameter | Description |
|---|---|---|
| IN | fh | file handle (handle) |
| OUT | disp | displacement (integer) |
| OUT | etype | elementary datatype (handle) |
| OUT | filetype | filetype (handle) |
| OUT | datarep | data representation (string) |

int MPI_File_get_view(MPI_File fh, MPI_Offset *disp, MPI_Datatype *etype, MPI_Datatype *filetype, char *datarep)

MPI_FILE_GET_VIEW(FH, DISP, ETYPE, FILETYPE, DATAREP, IERROR)

INTEGER FH, ETYPE, FILETYPE, IERROR

CHARACTER*(*) DATAREP

INTEGER(KIND=MPI_OFFSET_KIND) DISP

{void MPI::File::Get_view(MPI::Offset& disp, MPI::Datatype& etype, MPI::Datatype& filetype, char* datarep) const (binding deprecated, see Section 15.2) }

MPI_FILE_GET_VIEW returns the process's view of the data in the file. The current value of the displacement is returned in disp. The etype and filetype are new datatypes with
