- •Cloud Computing

- •Foreword

- •Preface

- •Introduction

- •Expected Audience

- •Book Overview

- •Part 1: Cloud Base

- •Part 2: Cloud Seeding

- •Part 3: Cloud Breaks

- •Part 4: Cloud Feedback

- •Contents

- •1.1 Introduction

- •1.1.1 Cloud Services and Enabling Technologies

- •1.2 Virtualization Technology

- •1.2.1 Virtual Machines

- •1.2.2 Virtualization Platforms

- •1.2.3 Virtual Infrastructure Management

- •1.2.4 Cloud Infrastructure Manager

- •1.3 The MapReduce System

- •1.3.1 Hadoop MapReduce Overview

- •1.4 Web Services

- •1.4.1 RPC (Remote Procedure Call)

- •1.4.2 SOA (Service-Oriented Architecture)

- •1.4.3 REST (Representational State Transfer)

- •1.4.4 Mashup

- •1.4.5 Web Services in Practice

- •1.5 Conclusions

- •References

- •2.1 Introduction

- •2.2 Background and Related Work

- •2.3 Taxonomy of Cloud Computing

- •2.3.1 Cloud Architecture

- •2.3.1.1 Services and Modes of Cloud Computing

- •Software-as-a-Service (SaaS)

- •Platform-as-a-Service (PaaS)

- •Hardware-as-a-Service (HaaS)

- •Infrastructure-as-a-Service (IaaS)

- •2.3.2 Virtualization Management

- •2.3.3 Core Services

- •2.3.3.1 Discovery and Replication

- •2.3.3.2 Load Balancing

- •2.3.3.3 Resource Management

- •2.3.4 Data Governance

- •2.3.4.1 Interoperability

- •2.3.4.2 Data Migration

- •2.3.5 Management Services

- •2.3.5.1 Deployment and Configuration

- •2.3.5.2 Monitoring and Reporting

- •2.3.5.3 Service-Level Agreements (SLAs) Management

- •2.3.5.4 Metering and Billing

- •2.3.5.5 Provisioning

- •2.3.6 Security

- •2.3.6.1 Encryption/Decryption

- •2.3.6.2 Privacy and Federated Identity

- •2.3.6.3 Authorization and Authentication

- •2.3.7 Fault Tolerance

- •2.4 Classification and Comparison between Cloud Computing Ecosystems

- •2.5 Findings

- •2.5.2 Cloud Computing PaaS and SaaS Provider

- •2.5.3 Open Source Based Cloud Computing Services

- •2.6 Comments on Issues and Opportunities

- •2.7 Conclusions

- •References

- •3.1 Introduction

- •3.2 Scientific Workflows and e-Science

- •3.2.1 Scientific Workflows

- •3.2.2 Scientific Workflow Management Systems

- •3.2.3 Important Aspects of In Silico Experiments

- •3.3 A Taxonomy for Cloud Computing

- •3.3.1 Business Model

- •3.3.2 Privacy

- •3.3.3 Pricing

- •3.3.4 Architecture

- •3.3.5 Technology Infrastructure

- •3.3.6 Access

- •3.3.7 Standards

- •3.3.8 Orientation

- •3.5 Taxonomies for Cloud Computing

- •3.6 Conclusions and Final Remarks

- •References

- •4.1 Introduction

- •4.2 Cloud and Grid: A Comparison

- •4.2.1 A Retrospective View

- •4.2.2 Comparison from the Viewpoint of System

- •4.2.3 Comparison from the Viewpoint of Users

- •4.2.4 A Summary

- •4.3 Examining Cloud Computing from the CSCW Perspective

- •4.3.1 CSCW Findings

- •4.3.2 The Anatomy of Cloud Computing

- •4.3.2.1 Security and Privacy

- •4.3.2.2 Data and/or Vendor Lock-In

- •4.3.2.3 Service Availability/Reliability

- •4.4 Conclusions

- •References

- •5.1 Overview – Cloud Standards – What and Why?

- •5.2 Deep Dive: Interoperability Standards

- •5.2.1 Purpose, Expectations and Challenges

- •5.2.2 Initiatives – Focus, Sponsors and Status

- •5.2.3 Market Adoption

- •5.2.4 Gaps/Areas of Improvement

- •5.3 Deep Dive: Security Standards

- •5.3.1 Purpose, Expectations and Challenges

- •5.3.2 Initiatives – Focus, Sponsors and Status

- •5.3.3 Market Adoption

- •5.3.4 Gaps/Areas of Improvement

- •5.4 Deep Dive: Portability Standards

- •5.4.1 Purpose, Expectations and Challenges

- •5.4.2 Initiatives – Focus, Sponsors and Status

- •5.4.3 Market Adoption

- •5.4.4 Gaps/Areas of Improvement

- •5.5.1 Purpose, Expectations and Challenges

- •5.5.2 Initiatives – Focus, Sponsors and Status

- •5.5.3 Market Adoption

- •5.5.4 Gaps/Areas of Improvement

- •5.6 Deep Dive: Other Key Standards

- •5.6.1 Initiatives – Focus, Sponsors and Status

- •5.7 Closing Notes

- •References

- •6.1 Introduction and Motivation

- •6.2 Cloud@Home Overview

- •6.2.1 Issues, Challenges, and Open Problems

- •6.2.2 Basic Architecture

- •6.2.2.1 Software Environment

- •6.2.2.2 Software Infrastructure

- •6.2.2.3 Software Kernel

- •6.2.2.4 Firmware/Hardware

- •6.2.3 Application Scenarios

- •6.3 Cloud@Home Core Structure

- •6.3.1 Management Subsystem

- •6.3.2 Resource Subsystem

- •6.4 Conclusions

- •References

- •7.1 Introduction

- •7.2 MapReduce

- •7.3 P2P-MapReduce

- •7.3.1 Architecture

- •7.3.2 Implementation

- •7.3.2.1 Basic Mechanisms

- •Resource Discovery

- •Network Maintenance

- •Job Submission and Failure Recovery

- •7.3.2.2 State Diagram and Software Modules

- •7.3.3 Evaluation

- •7.4 Conclusions

- •References

- •8.1 Introduction

- •8.2 The Cloud Evolution

- •8.3 Improved Network Support for Cloud Computing

- •8.3.1 Why the Internet is Not Enough?

- •8.3.2 Transparent Optical Networks for Cloud Applications: The Dedicated Bandwidth Paradigm

- •8.4 Architecture and Implementation Details

- •8.4.1 Traffic Management and Control Plane Facilities

- •8.4.2 Service Plane and Interfaces

- •8.4.2.1 Providing Network Services to Cloud-Computing Infrastructures

- •8.4.2.2 The Cloud Operating System–Network Interface

- •8.5.1 The Prototype Details

- •8.5.1.1 The Underlying Network Infrastructure

- •8.5.1.2 The Prototype Cloud Network Control Logic and its Services

- •8.5.2 Performance Evaluation and Results Discussion

- •8.6 Related Work

- •8.7 Conclusions

- •References

- •9.1 Introduction

- •9.2 Overview of YML

- •9.3 Design and Implementation of YML-PC

- •9.3.1 Concept Stack of Cloud Platform

- •9.3.2 Design of YML-PC

- •9.3.3 Core Design and Implementation of YML-PC

- •9.4 Primary Experiments on YML-PC

- •9.4.1 YML-PC Can Be Scaled Up Very Easily

- •9.4.2 Data Persistence in YML-PC

- •9.4.3 Schedule Mechanism in YML-PC

- •9.5 Conclusion and Future Work

- •References

- •10.1 Introduction

- •10.2 Related Work

- •10.2.1 General View of Cloud Computing frameworks

- •10.2.2 Cloud Computing Middleware

- •10.3 Deploying Applications in the Cloud

- •10.3.1 Benchmarking the Cloud

- •10.3.2 The ProActive GCM Deployment

- •10.3.3 Technical Solutions for Deployment over Heterogeneous Infrastructures

- •10.3.3.1 Virtual Private Network (VPN)

- •10.3.3.2 Amazon Virtual Private Cloud (VPC)

- •10.3.3.3 Message Forwarding and Tunneling

- •10.3.4 Conclusion and Motivation for Mixing

- •10.4 Moving HPC Applications from Grids to Clouds

- •10.4.1 HPC on Heterogeneous Multi-Domain Platforms

- •10.4.2 The Hierarchical SPMD Concept and Multi-level Partitioning of Numerical Meshes

- •10.4.3 The GCM/ProActive-Based Lightweight Framework

- •10.4.4 Performance Evaluation

- •10.5 Dynamic Mixing of Clusters, Grids, and Clouds

- •10.5.1 The ProActive Resource Manager

- •10.5.2 Cloud Bursting: Managing Spike Demand

- •10.5.3 Cloud Seeding: Dealing with Heterogeneous Hardware and Private Data

- •10.6 Conclusion

- •References

- •11.1 Introduction

- •11.2 Background

- •11.2.1 ASKALON

- •11.2.2 Cloud Computing

- •11.3 Resource Management Architecture

- •11.3.1 Cloud Management

- •11.3.2 Image Catalog

- •11.3.3 Security

- •11.4 Evaluation

- •11.5 Related Work

- •11.6 Conclusions and Future Work

- •References

- •12.1 Introduction

- •12.2 Layered Peer-to-Peer Cloud Provisioning Architecture

- •12.4.1 Distributed Hash Tables

- •12.4.2 Designing Complex Services over DHTs

- •12.5 Cloud Peer Software Fabric: Design and Implementation

- •12.5.1 Overlay Construction

- •12.5.2 Multidimensional Query Indexing

- •12.5.3 Multidimensional Query Routing

- •12.6 Experiments and Evaluation

- •12.6.1 Cloud Peer Details

- •12.6.3 Test Application

- •12.6.4 Deployment of Test Services on Amazon EC2 Platform

- •12.7 Results and Discussions

- •12.8 Conclusions and Path Forward

- •References

- •13.1 Introduction

- •13.2 High-Throughput Science with the Nimrod Tools

- •13.2.1 The Nimrod Tool Family

- •13.2.2 Nimrod and the Grid

- •13.2.3 Scheduling in Nimrod

- •13.3 Extensions to Support Amazon’s Elastic Compute Cloud

- •13.3.1 The Nimrod Architecture

- •13.3.2 The EC2 Actuator

- •13.3.3 Additions to the Schedulers

- •13.4.1 Introduction and Background

- •13.4.2 Computational Requirements

- •13.4.3 The Experiment

- •13.4.4 Computational and Economic Results

- •13.4.5 Scientific Results

- •13.5 Conclusions

- •References

- •14.1 Using the Cloud

- •14.1.1 Overview

- •14.1.2 Background

- •14.1.3 Requirements and Obligations

- •14.1.3.1 Regional Laws

- •14.1.3.2 Industry Regulations

- •14.2 Cloud Compliance

- •14.2.1 Information Security Organization

- •14.2.2 Data Classification

- •14.2.2.1 Classifying Data and Systems

- •14.2.2.2 Specific Type of Data of Concern

- •14.2.2.3 Labeling

- •14.2.3 Access Control and Connectivity

- •14.2.3.1 Authentication and Authorization

- •14.2.3.2 Accounting and Auditing

- •14.2.3.3 Encrypting Data in Motion

- •14.2.3.4 Encrypting Data at Rest

- •14.2.4 Risk Assessments

- •14.2.4.1 Threat and Risk Assessments

- •14.2.4.2 Business Impact Assessments

- •14.2.4.3 Privacy Impact Assessments

- •14.2.5 Due Diligence and Provider Contract Requirements

- •14.2.5.1 ISO Certification

- •14.2.5.2 SAS 70 Type II

- •14.2.5.3 PCI PA DSS or Service Provider

- •14.2.5.4 Portability and Interoperability

- •14.2.5.5 Right to Audit

- •14.2.5.6 Service Level Agreements

- •14.2.6 Other Considerations

- •14.2.6.1 Disaster Recovery/Business Continuity

- •14.2.6.2 Governance Structure

- •14.2.6.3 Incident Response Plan

- •14.3 Conclusion

- •Bibliography

- •15.1.1 Location of Cloud Data and Applicable Laws

- •15.1.2 Data Concerns Within a European Context

- •15.1.3 Government Data

- •15.1.4 Trust

- •15.1.5 Interoperability and Standardization in Cloud Computing

- •15.1.6 Open Grid Forum’s (OGF) Production Grid Interoperability Working Group (PGI-WG) Charter

- •15.1.7.1 What will OCCI Provide?

- •15.1.7.2 Cloud Data Management Interface (CDMI)

- •15.1.7.3 How it Works

- •15.1.8 SDOs and their Involvement with Clouds

- •15.1.10 A Microsoft Cloud Interoperability Scenario

- •15.1.11 Opportunities for Public Authorities

- •15.1.12 Future Market Drivers and Challenges

- •15.1.13 Priorities Moving Forward

- •15.2 Conclusions

- •References

- •16.1 Introduction

- •16.2 Cloud Computing (‘The Cloud’)

- •16.3 Understanding Risks to Cloud Computing

- •16.3.1 Privacy Issues

- •16.3.2 Data Ownership and Content Disclosure Issues

- •16.3.3 Data Confidentiality

- •16.3.4 Data Location

- •16.3.5 Control Issues

- •16.3.6 Regulatory and Legislative Compliance

- •16.3.7 Forensic Evidence Issues

- •16.3.8 Auditing Issues

- •16.3.9 Business Continuity and Disaster Recovery Issues

- •16.3.10 Trust Issues

- •16.3.11 Security Policy Issues

- •16.3.12 Emerging Threats to Cloud Computing

- •16.4 Cloud Security Relationship Framework

- •16.4.1 Security Requirements in the Clouds

- •16.5 Conclusion

- •References

- •17.1 Introduction

- •17.1.1 What Is Security?

- •17.2 ISO 27002 Gap Analyses

- •17.2.1 Asset Management

- •17.2.2 Communications and Operations Management

- •17.2.4 Information Security Incident Management

- •17.2.5 Compliance

- •17.3 Security Recommendations

- •17.4 Case Studies

- •17.4.1 Private Cloud: Fortune 100 Company

- •17.4.2 Public Cloud: Amazon.com

- •17.5 Summary and Conclusion

- •References

- •18.1 Introduction

- •18.2 Decoupling Policy from Applications

- •18.2.1 Overlap of Concerns Between the PEP and PDP

- •18.2.2 Patterns for Binding PEPs to Services

- •18.2.3 Agents

- •18.2.4 Intermediaries

- •18.3 PEP Deployment Patterns in the Cloud

- •18.3.1 Software-as-a-Service Deployment

- •18.3.2 Platform-as-a-Service Deployment

- •18.3.3 Infrastructure-as-a-Service Deployment

- •18.3.4 Alternative Approaches to IaaS Policy Enforcement

- •18.3.5 Basic Web Application Security

- •18.3.6 VPN-Based Solutions

- •18.4 Challenges to Deploying PEPs in the Cloud

- •18.4.1 Performance Challenges in the Cloud

- •18.4.2 Strategies for Fault Tolerance

- •18.4.3 Strategies for Scalability

- •18.4.4 Clustering

- •18.4.5 Acceleration Strategies

- •18.4.5.1 Accelerating Message Processing

- •18.4.5.2 Acceleration of Cryptographic Operations

- •18.4.6 Transport Content Coding

- •18.4.7 Security Challenges in the Cloud

- •18.4.9 Binding PEPs and Applications

- •18.4.9.1 Intermediary Isolation

- •18.4.9.2 The Protected Application Stack

- •18.4.10 Authentication and Authorization

- •18.4.11 Clock Synchronization

- •18.4.12 Management Challenges in the Cloud

- •18.4.13 Audit, Logging, and Metrics

- •18.4.14 Repositories

- •18.4.15 Provisioning and Distribution

- •18.4.16 Policy Synchronization and Views

- •18.5 Conclusion

- •References

- •19.1 Introduction and Background

- •19.2 A Media Service Cloud for Traditional Broadcasting

- •19.2.1 Gridcast the PRISM Cloud 0.12

- •19.3 An On-demand Digital Media Cloud

- •19.4 PRISM Cloud Implementation

- •19.4.1 Cloud Resources

- •19.4.2 Cloud Service Deployment and Management

- •19.5 The PRISM Deployment

- •19.6 Summary

- •19.7 Content Note

- •References

- •20.1 Cloud Computing Reference Model

- •20.2 Cloud Economics

- •20.2.1 Economic Context

- •20.2.2 Economic Benefits

- •20.2.3 Economic Costs

- •20.2.5 The Economics of Green Clouds

- •20.3 Quality of Experience in the Cloud

- •20.4 Monetization Models in the Cloud

- •20.5 Charging in the Cloud

- •20.5.1 Existing Models of Charging

- •20.5.1.1 On-Demand IaaS Instances

- •20.5.1.2 Reserved IaaS Instances

- •20.5.1.3 PaaS Charging

- •20.5.1.4 Cloud Vendor Pricing Model

- •20.5.1.5 Interprovider Charging

- •20.6 Taxation in the Cloud

- •References

- •21.1 Introduction

- •21.2 Background

- •21.3 Experiment

- •21.3.1 Target Application: Value at Risk

- •21.3.2 Target Systems

- •21.3.2.1 Condor

- •21.3.2.2 Amazon EC2

- •21.3.2.3 Eucalyptus

- •21.3.3 Results

- •21.3.4 Job Completion

- •21.3.5 Cost

- •21.4 Conclusions and Future Work

- •References

- •Index

B. Amedro et al.

Fig. 10.6 Performance over Grid5000, Amazon EC2, and resource mix

execution over Grid5000; even with the load balanced by the partitioner, processes running on EC2 showed lower performance. Using both Grid5000 resources and Extra Large EC2 instances proved more advantageous, with an average overhead of only 15% for such inter-domain executions compared with the average of the best single-domain ones. This overhead is mainly due to high-latency communication and message tunneling, and it could be reduced further by adding extra resources from either the grid or the Cloud.

From a cost-performance point of view, previous performance evaluations of Amazon EC2 [10] showed that the MFlops obtained per dollar spent decrease exponentially as the number of computing cores increases, and that the cost of solving a linear system increases exponentially with the problem size. Our results indicate the same when using Cloud resources exclusively. Mixing resources, however, seems to be more feasible, since a trade-off between performance and cost can be reached by including in-house resources in the computation.

10.5 Dynamic Mixing of Clusters, Grids, and Clouds

As we have seen, mixing Cloud and private resources can provide performance close to that of a larger private cluster. However, doing so in a static way can lead to a waste of resources if an application does not need the computing power during its complete lifetime. We will now present a tool that enables the dynamic use of Cloud resources.

10.5.1 The ProActive Resource Manager

The ProActive Resource Manager is software for resource aggregation across the network, developed as a ProActive application. It delivers compute units, represented by ProActive nodes (Java Virtual Machines running the ProActive Runtime), to a scheduler that is in charge of handling a task flow and distributing tasks on accessible resources. Owing to the deployment framework presented in Section 3.2, it can retrieve computing nodes using different standards such as SSH, LSF, OAR, gLite, EC2, etc. Its main functions are:

• Deployment, acquisition, and release of ProActive nodes to/from an underlying infrastructure

• Supplying ProActive Scheduler with nodes for task executions, based on required properties

• Maintaining and monitoring the list of resources and managing their states

The Resource Manager aims to abstract the nature of a dynamic infrastructure and to simplify the effective use of multiple computing resources, so that resources from different providers can be exploited within a single application. To achieve this goal, the Resource Manager is split into multiple components.

The core is responsible for handling all requests from clients and delegating them to other components. Once a request for new computation nodes is received, the core redirects it to a selection manager. This component finds appropriate nodes in a pool of available nodes based on criteria provided by clients, such as a specific architecture or a specific library.
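As a rough illustration of this selection step, the sketch below filters a pool of free nodes by client-supplied criteria. It is not the ProActive API: the `Node` class, its fields, and the `select_nodes` function are hypothetical names chosen for this example, and the criteria are modeled simply as a required architecture and a set of required libraries.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """Hypothetical description of a compute node (not a ProActive class)."""
    name: str
    arch: str
    libraries: frozenset = field(default_factory=frozenset)
    busy: bool = False


def select_nodes(pool, count, arch=None, libraries=()):
    """Return up to `count` free nodes from `pool` that match the criteria."""
    required = set(libraries)
    matches = [
        n for n in pool
        if not n.busy
        and (arch is None or n.arch == arch)
        and required <= n.libraries  # every required library is installed
    ]
    return matches[:count]


# Illustrative pool mixing local, grid, and Cloud nodes (made-up names).
pool = [
    Node("local-1", "x86_64", frozenset({"blas"})),
    Node("local-2", "x86_64", frozenset({"blas", "mpi"}), busy=True),
    Node("ec2-1", "x86_64", frozenset({"blas", "mpi"})),
    Node("grid-1", "ppc64", frozenset({"mpi"})),
]
selected = select_nodes(pool, count=2, arch="x86_64", libraries={"mpi"})
print([n.name for n in selected])  # ['ec2-1']
```

Only `ec2-1` is returned here: `local-1` lacks the requested library, `local-2` is busy, and `grid-1` has a different architecture.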

The pool of nodes is formed by one or several node aggregators. Each aggregator (node source) is in charge of node acquisition, deployment, and monitoring for a dedicated infrastructure. It also has a policy defining the conditions and rules for acquiring and releasing nodes. For example, a time slot policy will acquire nodes only for a specified period of time.
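The pairing of an infrastructure with a policy can be sketched as follows. This is a minimal illustration under stated assumptions, not ProActive code: `TimeSlotPolicy`, `NodeSource`, and their methods are hypothetical, the infrastructure is reduced to a list of node names, and the time slot is assumed to be a daily window that may wrap past midnight.

```python
from datetime import datetime, time


class TimeSlotPolicy:
    """Hypothetical policy: hold nodes only inside a daily time window."""

    def __init__(self, start, end):
        self.start = start
        self.end = end

    def should_hold_nodes(self, now):
        t = now.time()
        if self.start <= self.end:
            return self.start <= t < self.end
        # The window wraps past midnight, e.g. 20:00 to 06:00.
        return t >= self.start or t < self.end


class NodeSource:
    """Hypothetical node source: an infrastructure plus a policy."""

    def __init__(self, infrastructure, policy):
        self.infrastructure = infrastructure  # node names this source can deploy
        self.policy = policy
        self.acquired = []

    def update(self, now):
        """Acquire all nodes inside the time slot, release them outside it."""
        if self.policy.should_hold_nodes(now):
            self.acquired = list(self.infrastructure)
        else:
            self.acquired = []
        return self.acquired


# Desktop machines lent to the pool at night only (illustrative values).
night = TimeSlotPolicy(time(20, 0), time(6, 0))
source = NodeSource(["desk-1", "desk-2"], night)
print(source.update(datetime(2010, 1, 1, 23, 30)))  # inside the window
print(source.update(datetime(2010, 1, 1, 12, 0)))   # outside the window
```

Keeping the policy separate from the infrastructure means the same window logic could be reused for any kind of resource, which is the point of treating node sources as pluggable building blocks.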

All platforms supported by GCMD are automatically supported by the ProActive Resource Manager. When exploiting an existing infrastructure, the Resource Manager takes into account that local users may also be using it, and provides fair resource utilization. For instance, Microsoft Compute Cluster Server has its own scheduler, and the ProActive deployment process has to go through it instead of contacting cluster nodes directly. This behavior allows the ProActive Resource Manager to coexist with other schedulers without breaking their integrity.

As mentioned earlier, a node source is the combination of an infrastructure and a policy, that is, a set of rules driving the deployment/release process. Among the several predefined policies, two deserve mention. The first addresses the common scenario in which resources are available for a limited time. The second is a balancing policy, which adjusts the number of nodes held according to the user's needs. One such balancing policy is implemented by the ProActive Resource Manager: it acquires new nodes dynamically when the scheduler is overloaded and releases them as soon as there is no more activity in the scheduler.
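The acquire-on-overload, release-on-idle behavior of such a balancing policy can be sketched as a single decision function. The rule below is an assumption made for illustration (the text does not specify how overload is measured): the scheduler counts as overloaded when more tasks are pending than there are idle nodes, and as inactive when nothing is pending or running. The function name and parameters are hypothetical.

```python
def balance(pending_tasks, busy_nodes, idle_nodes, max_nodes):
    """Return the change in node count: positive to acquire, negative to release.

    Assumed rules (illustrative only):
    - overloaded: more pending tasks than idle nodes, so acquire the difference,
      capped by the number of nodes still allowed under `max_nodes`;
    - no activity: nothing pending and nothing running, so release all idle nodes.
    """
    total = busy_nodes + idle_nodes
    if pending_tasks > idle_nodes:
        wanted = pending_tasks - idle_nodes
        return max(0, min(wanted, max_nodes - total))
    if pending_tasks == 0 and busy_nodes == 0:
        return -idle_nodes
    return 0


print(balance(pending_tasks=10, busy_nodes=4, idle_nodes=0, max_nodes=8))  # 4
print(balance(pending_tasks=0, busy_nodes=0, idle_nodes=6, max_nodes=8))   # -6
print(balance(pending_tasks=0, busy_nodes=3, idle_nodes=1, max_nodes=8))   # 0
```

In the third call nothing is released even though one node is idle, because tasks are still running; nodes are given back only once the scheduler shows no activity at all.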

Using node sources as building blocks helps to describe all the resources at your disposal and the way they are used. Pluggable and extensible policies and infrastructures make it possible to define any kind of dynamic resource aggregation scenario. One such scenario is Cloud bursting.

10.5.2 Cloud Bursting: Managing Spike Demand

Companies or research institutes can have a private cluster or use Grids to perform their daily computations. However, for cost management reasons, these resources are often provisioned on the basis of average usage. When a sudden increase in computation arises, some of it can be offloaded to a Cloud. This is often referred to as Cloud bursting.

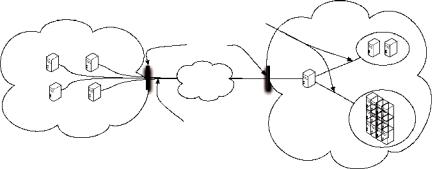

Figure 10.7 illustrates a Cloud bursting scenario. In our example, we have an existing local network that is composed of a ProActive Scheduler with a Resource Manager. This resource manager handles computing resources such as desktop machines or clusters. In our figure, these are referred to as local computing nodes.

A common kind of application for a scheduler is a bag of independent tasks (no communication between the tasks). The scheduler retrieves a set of free computing nodes through the resource manager to run pending tasks. This local network is protected from the Internet by a firewall that filters connections.

When the scheduler experiences an unusually high load, the resource manager can acquire new computing nodes from Amazon EC2. This decision is based on a scheduling loading policy, which takes into account the current load of the scheduler and the Service Level Agreement provided. These parameters are set directly in the resource manager administrator interface. However, when offloading tasks to the Cloud, we have to pay attention to the boot delay, which implies a waiting time of a few minutes between a node request and its availability to the scheduler.
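To make the role of the boot delay concrete, the following sketch estimates how many Cloud nodes would be needed to drain a queue of independent, equal-length tasks within a deadline. It is a simplification under stated assumptions, not the policy implemented by the ProActive Resource Manager: the SLA is reduced to a single completion deadline in minutes, all durations are whole minutes, every node runs one task at a time, and the function name and parameters are hypothetical.

```python
import math


def cloud_nodes_to_request(pending_tasks, task_minutes, local_nodes,
                           sla_minutes, boot_delay_minutes):
    """Estimate the number of Cloud nodes needed to meet the deadline.

    Returns 0 when local nodes suffice, or when Cloud nodes would become
    available too late to finish even one task before the deadline.
    """
    # Time needed to drain the queue with local nodes only.
    local_minutes = math.ceil(pending_tasks / local_nodes) * task_minutes
    if local_minutes <= sla_minutes:
        return 0

    # A Cloud node is usable only after it has booted.
    usable_minutes = sla_minutes - boot_delay_minutes
    tasks_per_cloud_node = usable_minutes // task_minutes
    if tasks_per_cloud_node <= 0:
        return 0

    # Tasks the local nodes cannot finish before the deadline.
    local_capacity = local_nodes * (sla_minutes // task_minutes)
    overflow = pending_tasks - local_capacity
    return math.ceil(overflow / tasks_per_cloud_node)


# 100 ten-minute tasks, 4 local nodes, 60-minute deadline, 5-minute boot delay.
print(cloud_nodes_to_request(100, 10, 4, 60, 5))  # 16
```

With these illustrative numbers, the boot delay costs each Cloud node one task slot (five tasks instead of six before the deadline), which is why 16 instances are requested rather than 13.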

The Resource Manager is capable of bypassing firewalls and private networks by any of the approaches presented in Section 3.3.3.

10.5.3 Cloud Seeding: Dealing with Heterogeneous Hardware and Private Data

In some cases, a distributed application, composed of dependent tasks, can perform a vast majority of its tasks on a Cloud, while running some of them on a local

Fig. 10.7 Example of a Cloud bursting scenario (diagram labels: local network with the ProActive Scheduler & Resource Manager and local computing nodes; firewalls; Internet; Amazon EC2 computing instances; RMI connections; HTTP connections)