Rivero L.Encyclopedia of database technologies and applications.2006


Text Databases

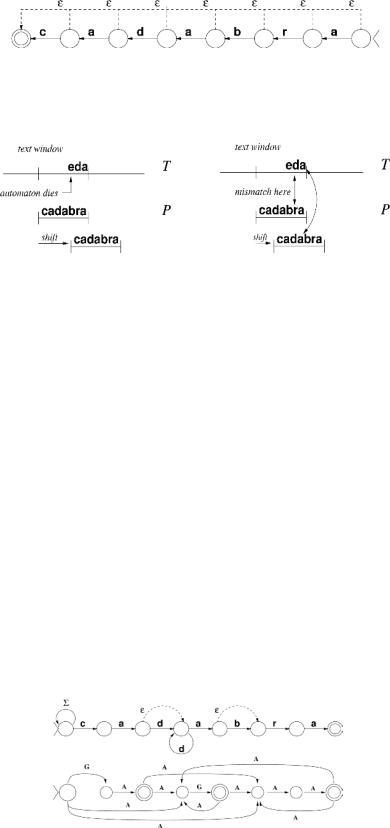

Figure 2. An NFA to recognize the suffixes of “cadabra,” reversed

Figure 3. On the left, the BDM search process in a window. On the right, the same window processed by Horspool

an imperfect way that gives shorter shifts but runs faster because it is simpler.

The Horspool algorithm is not based on automata. It simply compares the current text window against P, reports an occurrence in case of equality, and then shifts the window so that its last character c aligns with the last occurrence of c in P (not counting the final position of P, so the shift is always at least one). This does not lose any possible occurrence and wins because of its simplicity in all but small alphabets (see Figure 3, right side).
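The shift rule just described can be sketched in a few lines of Python. This is a minimal illustration, not a tuned implementation; the function name `horspool_search` and the dictionary-based shift table are choices made for this example.

```python
def horspool_search(pattern: str, text: str) -> list[int]:
    """Return the starting positions of all occurrences of pattern in text."""
    m, n = len(pattern), len(text)
    if m == 0 or m > n:
        return []
    # Shift table: for each character, the distance from its last occurrence
    # in the pattern (ignoring the final position) to the end of the pattern.
    shift = {c: m - 1 - i for i, c in enumerate(pattern[:-1])}
    occurrences = []
    pos = 0
    while pos <= n - m:
        # Compare the current window against the pattern.
        if text[pos:pos + m] == pattern:
            occurrences.append(pos)
        # Shift according to the last character of the window; characters
        # that do not appear in the table allow a full shift of m.
        pos += shift.get(text[pos + m - 1], m)
    return occurrences
```

For the text “abracadabra” and the pattern “abra”, the window is placed at only three positions (0, 3, and 7), and the two occurrences are reported at positions 0 and 7.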

Extended Patterns and Regular Expressions

Patterns with wild cards, optional characters, gaps, and, in general, regular expressions, can be searched for by extending algorithms designed for simple strings (Navarro & Raffinot, 2002). The prevailing approach is, again, based on automata. Figure 4 shows some examples. A regular expression can be converted into an NFA and then a DFA, which can be used for searching in O(n) worst-case time. The problem is that this may need space and time exponential in m, which is disturbing in practice even for moderate-sized patterns. Another approach is to simulate the NFA directly using bit-parallelism. Very efficient bit-parallel simulations of the restricted extended patterns mentioned previously have been developed. When simulating general regular expressions, however, the same exponential dependency on m appears. It is possible, using bit-parallelism, to search in O(mn/log(s)) time given O(s) space for the preprocessing.

Approximate Pattern Matching

It is usually convenient to allow some tolerance in pattern matching against the text, independently of the pattern searched for. The usual approach is to define a distance between strings that models the desired tolerance and let the user specify a tolerance threshold k together with P. Then, the system reports text substrings whose distance to P does not exceed k (Navarro, 2001; Navarro & Raffinot, 2002).

Figure 4. On top, an NFA to recognize an extended pattern with optional and repeatable characters. On the bottom, the NFA of a general regular expression.


Figure 5. An NFA to find “cadabra” within edit distance at most 2. Unlabeled arrows are traversed by Σ and ε


The most general technique for approximate pattern matching relies on dynamic programming. A virtual matrix is filled such that cell (i,j) contains the minimum distance between the length-i prefix of P and a substring of T ending at position j. Each cell can be filled in constant time, giving O(mn) worst-case search time.
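For the edit distance, the matrix can be filled column by column while keeping only one column in memory. The sketch below assumes unit costs for insertions, deletions, and substitutions; the function name `approx_search` and the choice to report 1-based end positions are illustrative.

```python
def approx_search(pattern: str, text: str, k: int) -> list[tuple[int, int]]:
    """Return (j, distance) pairs: some substring of text ending at the
    1-based position j is within edit distance k of pattern."""
    m = len(pattern)
    # column[i] holds cell (i, j): the minimum distance between pattern[:i]
    # and a substring of text ending at the current position j.
    column = list(range(m + 1))
    matches = []
    for j, c in enumerate(text, start=1):
        prev_diag = column[0]  # cell (i-1, j-1)
        # column[0] stays 0 because an occurrence may start anywhere.
        for i in range(1, m + 1):
            cost = 0 if pattern[i - 1] == c else 1
            new_value = min(prev_diag + cost,   # match or substitution
                            column[i] + 1,      # extra text character
                            column[i - 1] + 1)  # missing pattern character
            prev_diag = column[i]
            column[i] = new_value
        if column[m] <= k:
            matches.append((j, column[m]))
    return matches
```

Each text character costs O(m) work, which gives the O(mn) bound stated above.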

For some simple (and popular) distance functions, such as the edit distance, much faster algorithms exist. As before, it is possible to express the search as the outcome of an automaton, as depicted in Figure 5. This NFA can be made deterministic so as to search in O(n) worst-case time. As for regular expressions, the problem is that the preprocessing time and space are exponential in m or k, which makes the DFA approach unpopular. Bit-parallelism, however, can be used to simulate the NFA directly so as to obtain very practical O(kmn/w) worst-case time algorithms. Moreover, the dynamic programming matrix can be computed in bit-parallel so as to obtain O(mn/w) time.

Another useful approach for the case of low k/m values (which are the most interesting in practice) is to filter the text to discard most of it with a necessary condition that can be checked quickly. For example, if P is cut into k + 1 nonoverlapping pieces, then any occurrence within edit distance k must contain one of the pieces unaltered. A multipattern search for the pieces followed by classical verification of areas around the occurrences is very simple and one of the fastest algorithms, with average complexity O(nk log_σ(m)/m). For small alphabets, the best algorithms in practice are also optimal on average: O(n(k+log_σ(m))/m).
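The filtering idea can be sketched as follows. This is a simplified illustration under stated assumptions: the pieces are searched one at a time with Python's `str.find` rather than with a true multipattern algorithm, verification uses plain dynamic programming over a window of text around each piece occurrence, and the names `edit_distance_ends` and `filtered_search` are hypothetical.

```python
def edit_distance_ends(pattern: str, text: str, k: int) -> set[int]:
    """1-based end positions of text substrings within edit distance k."""
    m = len(pattern)
    column = list(range(m + 1))
    ends = set()
    for j, c in enumerate(text, start=1):
        prev_diag = column[0]
        for i in range(1, m + 1):
            cost = 0 if pattern[i - 1] == c else 1
            new_value = min(prev_diag + cost, column[i] + 1, column[i - 1] + 1)
            prev_diag = column[i]
            column[i] = new_value
        if column[m] <= k:
            ends.add(j)
    return ends


def filtered_search(pattern: str, text: str, k: int) -> set[int]:
    """Same result as edit_distance_ends, but only verifies text areas
    around exact occurrences of one of the k + 1 pattern pieces."""
    m, n = len(pattern), len(text)
    if m <= k:
        raise ValueError("the pattern must have more than k characters")
    # Cut the pattern into k + 1 nonempty, nonoverlapping pieces.
    bounds = [i * m // (k + 1) for i in range(k + 2)]
    ends = set()
    for start, stop in zip(bounds, bounds[1:]):
        piece = pattern[start:stop]
        pos = text.find(piece)
        while pos != -1:
            # An occurrence containing this piece at text position pos
            # must lie inside the window text[lo:hi].
            lo = max(0, pos - start - k)
            hi = min(n, pos - start + m + k)
            window_ends = edit_distance_ends(pattern, text[lo:hi], k)
            ends.update(lo + e for e in window_ends)
            pos = text.find(piece, pos + 1)
    return ends
```

When k/m is low, the pieces are long and rarely occur by chance, so few windows need verification.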

Several of these techniques can also be adapted to approximate searching of regular expressions and extended patterns, with good results.

Indexed Searching

When the text can be preprocessed to build an index, the most popular general-purpose choices are suffix trees and arrays (Gusfield, 1997). The best choice for the specific case of natural language is the inverted index, reviewed later in this article.

A digital tree or trie is a tree where each branch is labeled by a character, and the children of a node are labeled by different characters. The set of strings represented by a trie is obtained by reading all its root-to-leaf paths and concatenating the characters labeling each path. A suffix trie is a trie representing all the suffixes of a given text. At the leaves, we store the starting position of the suffixes. Note that, because every substring is the prefix of some suffix, every text substring can be found by following a path from the suffix trie root. Hence, in order to search for all the occurrences of P in T, it is enough to traverse the suffix trie path of T corresponding to the characters of P. All the occurrences of P are then found at the leaves of the subtree. This requires optimal time O(m+occ) to retrieve the occ occurrences of P (see Figure 6).

Figure 6. The suffix trie and suffix array for the text “abracadabra”

Although optimal at searching, the suffix trie can be of quadratic size in the worst case. The suffix tree is a variant that guarantees O(n) space and construction time, making the overall scheme optimal in theory. In practice, even the suffix tree may be too large (10 to 20 times the text size), so more compact structures may be preferred. The most popular is the suffix array, which contains just the leaves of the suffix tree or, equivalently, the sequence of pointers to all text suffixes in lexicographic order. The suffix array requires four times the text size, which is more manageable, though still large. Just as all the occurrences of P appear in a single subtree of the suffix tree, they appear in a single interval of the suffix array, which can be found by binary search in O(m log n) time. Very recently, compression techniques for suffix arrays have obtained great success, but the techniques are still immature.
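A minimal sketch of suffix array searching follows. The construction shown simply sorts the suffixes, which is far from the efficient constructions alluded to above and is used here only to keep the example short; the function names are illustrative.

```python
def build_suffix_array(text: str) -> list[int]:
    """Starting positions of all suffixes, in lexicographic order.
    Naive construction by sorting; for illustration only."""
    return sorted(range(len(text)), key=lambda i: text[i:])


def find_occurrences(text: str, suffix_array: list[int], pattern: str) -> list[int]:
    """Starting positions of pattern in text, found by two binary searches
    that delimit the interval of suffixes starting with pattern."""
    m = len(pattern)

    def prefix(rank: int) -> str:
        start = suffix_array[rank]
        return text[start:start + m]

    # First suffix whose length-m prefix is not smaller than the pattern.
    lo, hi = 0, len(suffix_array)
    while lo < hi:
        mid = (lo + hi) // 2
        if prefix(mid) < pattern:
            lo = mid + 1
        else:
            hi = mid
    first = lo
    # First suffix whose length-m prefix is larger than the pattern.
    hi = len(suffix_array)
    while lo < hi:
        mid = (lo + hi) // 2
        if prefix(mid) <= pattern:
            lo = mid + 1
        else:
            hi = mid
    return sorted(suffix_array[first:lo])
```

Each binary search performs O(log n) comparisons of at most m characters, which matches the O(m log n) bound.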

Suffix trees and arrays can be searched for complex patterns, too (Navarro, Baeza-Yates, Sutinen, & Tarhio, 2001). The general idea is to define an automaton that recognizes the desired pattern, and backtrack in the suffix tree (or simulate a backtrack on the array), going through all the branches. The traversal stops either when the automaton runs out of active states (in which case the subtree is abandoned) or when it reaches a final state (in which case the subtree leaves are reported). On most regular expressions and approximate searching, O(n^λ) worst-case time, for some 0 < λ < 1, is achieved.

Natural language texts, at least in most Western languages, have some remarkable features that make them easier to search (Baeza-Yates & Ribeiro-Neto, 1999; Witten, Moffat, & Bell, 1999). The text can be seen as a sequence of words. The number of different words grows slowly with the text size. The query is usually a word or a sequence of words (a phrase). In this case, inverted indexes are a low-cost solution that provides very good performance. An inverted index is just a list of all the different words in the text (the vocabulary), plus a list of the occurrences of each such word in the text collection. A search for a word reduces to a vocabulary search (with hashing, for example) plus outputting the list. A search for a complex pattern that matches a word is solved by sequential scanning over the vocabulary plus outputting the matching word lists. A phrase search is solved by intersecting the occurrences of the involved words. An inverted index typically needs 15% to 40% of the space of the text, but several compression techniques can be successfully applied to the index and the text (Witten, Moffat, & Bell, 1999).
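A small sketch of an inverted index is given below. It assumes that words are separated by white space, that case is ignored, and that occurrences are recorded as word positions rather than byte offsets; these choices and the function names are made only for the example.

```python
from collections import defaultdict


def build_inverted_index(text: str) -> dict[str, list[int]]:
    """Map each vocabulary word to the list of its word positions."""
    index = defaultdict(list)
    for position, word in enumerate(text.lower().split()):
        index[word].append(position)
    return dict(index)


def search_word(index: dict[str, list[int]], word: str) -> list[int]:
    """Vocabulary lookup by hashing, then output of the occurrence list."""
    return index.get(word.lower(), [])


def search_phrase(index: dict[str, list[int]], phrase: str) -> list[int]:
    """Positions where the phrase starts, by intersecting occurrence lists."""
    words = phrase.lower().split()
    if not words:
        return []
    # The phrase starts at p if its i-th word occurs at position p + i.
    candidates = set(search_word(index, words[0]))
    for offset, word in enumerate(words[1:], start=1):
        candidates &= {p - offset for p in search_word(index, word)}
    return sorted(candidates)
```

A pattern that matches several vocabulary words would be handled by scanning the keys of the dictionary and merging the lists of the matching words.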


SEMANTIC SEARCH

In semantic searching, the text collection is seen as a set of documents, and the goal is to establish the relevance of each document with respect to a user query, so as to present the most relevant ones to the user. The query can be a set of words or phrases, or even another document. As accuracy in the answers is a central concern, the choice of a model to compute how relevant a document is with respect to a query is fundamental (Baeza-Yates & Ribeiro-Neto, 1999).

Relevance Models

The most popular relevance model is called the vector model, in which each document is seen as a vector in a very high dimensional space. Each coordinate represents a word of the collection vocabulary. The value of the document vector along a coordinate is given by the relevance of the corresponding word to distinguish that document from others. The query is also seen as a vector, and the cosine between two vectors is their similarity measure.

A popular measure of the relevance of a word to distinguish a document from others is tf × idf, where tf=f/mf, f is the number of times the word appears in the document, mf is the number of times the most frequent word of the document appears in it, idf = log(N/n), N is the total number of documents, and n is the number of documents where the word appears. For Web searching, this model is usually enriched with the information given by the links between Web pages so that pages pointed to from “relevant” pages are also considered relevant.
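The tf × idf weighting and the cosine similarity can be sketched as follows. The symbols f, mf, N, and n are those defined above; documents are assumed to be nonempty lists of already extracted words, and the function names are illustrative. Link information for Web searching is not modeled.

```python
import math
from collections import Counter


def tf_idf_vectors(documents: list[list[str]]) -> list[dict[str, float]]:
    """One sparse vector per document, with tf x idf weights."""
    total_docs = len(documents)  # N
    # n: number of documents in which each word appears
    doc_freq = Counter(word for doc in documents for word in set(doc))
    vectors = []
    for doc in documents:
        counts = Counter(doc)            # f for each word
        max_freq = max(counts.values())  # mf
        vectors.append({
            word: (f / max_freq) * math.log(total_docs / doc_freq[word])
            for word, f in counts.items()
        })
    return vectors


def cosine(u: dict[str, float], v: dict[str, float]) -> float:
    """Cosine of the angle between two sparse vectors (0.0 if one is null)."""
    dot = sum(weight * v.get(word, 0.0) for word, weight in u.items())
    norm_u = math.sqrt(sum(x * x for x in u.values()))
    norm_v = math.sqrt(sum(x * x for x in v.values()))
    if norm_u == 0.0 or norm_v == 0.0:
        return 0.0
    return dot / (norm_u * norm_v)
```

A word that appears in every document gets idf = log(1) = 0 and therefore does not help to distinguish any document, as the measure intends.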

Another less popular model, which is usually mixed with the vector model, is the boolean model. In this model, documents are relevant or not relevant. The query specifies which words must appear in relevant documents, which words must not appear, and indicates intersections, unions and complementation of resulting document sets. There are several other less popular models of relevance.

Evaluation

An issue related to relevance computation is how to evaluate the accuracy of the results. The most popular measures are called precision and recall. Precision indicates which fraction of the retrieved documents are actually relevant. Recall indicates which fraction of the relevant documents were actually retrieved. Human judgment is necessary to establish which documents are really relevant to the query. Note that it is easy to have higher precision by retrieving fewer documents and higher recall by retrieving more documents, since the ranking just orders the documents but does not indicate how many to retrieve. Hence the correct measure is a “precision-recall plot,” where the precision and recall obtained when retrieving more and more documents are drawn in a two-dimensional plot (one coordinate for precision and the other for recall). For example, one can plot the precision obtained for recall values of 0%, 10%, ..., 100%.
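The points of such a plot can be computed directly from a ranking and a set of relevance judgments. The sketch below assumes that the set of relevant documents is nonempty and known in advance; the function name is illustrative.

```python
def precision_recall_points(ranking: list[str], relevant: set[str]) -> list[tuple[float, float]]:
    """(precision, recall) after retrieving the first 1, 2, ... documents."""
    points = []
    hits = 0
    for retrieved, doc in enumerate(ranking, start=1):
        if doc in relevant:
            hits += 1
        precision = hits / retrieved    # relevant fraction of the retrieved
        recall = hits / len(relevant)   # retrieved fraction of the relevant
        points.append((precision, recall))
    return points
```

For the ranking d1, d2, d3, d4 with d1 and d3 relevant, recall reaches 50% after one document (precision 100%) and 100% after three documents (precision about 67%).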

Indexes

Variants of the inverted index are useful for semantic searches as well. These indexes indicate only the documents where each word appears, as well as its number of occurrences. This is useful to compute term f in the vector ranking. Moreover, because only the few highest ranked documents will be output, documents with highest f value will be the most interesting ones, so the lists of documents where each term appears are sorted by decreasing f value. Several heuristics are used to avoid processing long lists of probably irrelevant documents. Although these may alter the correct outcome of the query, the relevance model is already a guess of what the user wants, so it is permissible to distort it a bit in exchange for large savings in efficiency. Note that this tradeoff is not permitted in syntactic searching.

FUTURE TRENDS

Textual databases face the following challenges in upcoming years:

• The increasing size of text collections, which, in cases such as the Web, reach several terabytes. Maintaining efficiency and accuracy of results under this scenario is much more complex than for medium-sized collections.

• The increasing complexity of search operations, typical of applications in computational biology and other areas. This poses the need to find more complex patterns, which are usually much more difficult to search for than simple strings.

• The low quality of the data, with different reasons depending on the application: no quality control in the Web, experimental errors in DNA sequences, and so forth. This poses the need for approximate matching methods well-suited to each application.

• The size of the indexes, which, especially for general texts, is more relevant than the search speed. In a large text, such an index requires frequent access to secondary memory, which is much slower than main memory.

• The need to deal with secondary memory, which is a rather undeveloped feature in some indexes, especially those for general texts. Some indexes still perform poorly in secondary memory.

• The need to cope with changes in the text collection, as text indexes usually require expensive rebuilding upon text changes.

• The need to provide, for text databases, the same level of data management achieved for atomic values in traditional databases, with respect to powerful query languages (e.g., permitting joins), concurrency, recovery, security, and so forth.

REFERENCES

Baeza-Yates, R., & Ribeiro-Neto, B. (1999). Modern information retrieval. Reading, MA: Addison-Wesley.

Gusfield, D. (1997). Algorithms on strings, trees and sequences: Computer science and computational biology. Cambridge, UK: Cambridge University Press.

Navarro, G. (2001). A guided tour to approximate string matching. ACM Computing Surveys, 33(1), 31-88.

Navarro, G., Baeza-Yates, R., Sutinen, E., & Tarhio, J. (2001). Indexing methods for approximate string matching. IEEE Data Engineering Bulletin, 24(4), 19-27.

Navarro, G., & Raffinot, M. (2002). Flexible pattern matching in strings: Practical on-line search algorithms for texts and biological sequences. Cambridge, UK: Cambridge University Press.

Witten, I., Moffat, A., & Bell, T. (1999). Managing gigabytes (2nd Ed.). San Francisco: Morgan Kaufmann.

KEY TERMS

Approximate Searching: Searching that permits some differences between the pattern specification and its text occurrences.

Bit-Parallelism: A technique to store several values in a single computer word so as to process them all at once.

Edit Distance: A measure of string similarity that counts the number of character insertions, deletions and substitutions needed to convert one string into the other.


Indexed Searching: Searching with the help of an index, a data structure previously built on the text.

Precision and Recall: Measures of retrieval quality, relating the documents retrieved with those actually relevant to a query.

Sequential Searching: Searching without preprocessing the text.

Suffix Trees and Arrays: Text indexes that permit fast access to any text substring.

Semantic Search: A search in natural language text, based on the meaning rather than the syntax of the text.


Syntactic Search: A text search based on string patterns that appear in the text, without reference to meaning.

Text Database: A database system managing a collection of texts.

Vector Model: A model to measure the relevance between a document and a query, based on sharing distinctive words.

Transaction Concurrency Methods

Lars Frank

Copenhagen Business School, Denmark

INTRODUCTION

In this article, I will evaluate the most important methods for increasing concurrency between transactions. Because different transactions have different needs, it is important that the evaluation gives an overview of the advantages and disadvantages of the different optimization methods.

It is possible to increase concurrency between database transactions and minimize the time in which conflicts may occur by splitting major transactions into minor transactions performing the same operations. For short, I will call this concurrency optimization technique short-duration locking, even though not all concurrency control methods use locking. Concurrency may also be increased by using low isolation levels, in which certain types of conflicts between transactions are accepted. In practice, either two-phase locking or optimistic concurrency control is used for concurrency control. When one of these concurrency control methods is chosen, the only way to increase concurrency further is to relax the isolation property. In practice, the isolation property is relaxed by using low isolation levels, short duration locks, or both. Therefore, these optimization methods are analyzed, too.

By using the results of my analyses, it should be easier to increase concurrency between database transactions. This is especially important in long-lived transactions, hotspots, and multidatabases in which the traditional concurrency control methods often have to give up in practice. However, when either short duration locking or low isolation levels optimize concurrency, the traditional atomicity, consistency, isolation, and durability (ACID) properties are normally lost. The problems caused by a lack of ACID properties may be managed by using approximated ACID properties (i.e., from an application point of view the system should function as if all the traditional ACID properties had been implemented).

When the isolation property is relaxed, the following isolation anomalies may occur (Berenson et al., 1995; Breibart, 1992):

• The lost update anomaly is by definition a situation in which a first transaction reads a record for update without using locks. After this, the record is updated by another transaction. Later, the update is overwritten by the first transaction. In practice, the read and the update of a record are often executed in different subtransactions to prevent locking the record across a dialogue with the user. In this situation, it is possible for a second transaction to update the record between the read and the update of the first transaction. If countermeasures are not used, the update of the second transaction may be lost.

• The dirty read anomaly is by definition a situation in which a first transaction updates a record without committing the update. After this, a second transaction reads the record. Later, the first update is aborted (i.e., the second transaction may have read a nonexisting version of the record). In extended transaction models, this may happen when the first transaction updates a record by using a compensatable subtransaction and later aborts the update by using a compensating subtransaction. If a second transaction reads the record before it is compensated, the data read will be “dirty.”

• The nonrepeatable read anomaly or fuzzy read is by definition a situation in which a first transaction reads a record without using long duration locks. This record is later updated and committed by a second transaction before the first transaction is committed or aborted. In other words, one cannot rely on what one has read. In extended transaction models, this may happen when the first transaction reads a record updated by a second transaction, which commits the record locally before the first transaction commits it globally.

• The phantom anomaly is normally not important and, therefore, I do not deal with it in this article.

BACKGROUND

Different concurrency methods and isolation levels have been analyzed and evaluated (e.g., Gray & Reuter, 1993), and short duration locks have been used in practice for many years. However, to my knowledge, short duration locks have not been evaluated and compared to other methods in terms of increasing concurrency between transactions.

Copyright © 2006, Idea Group Inc., distributing in print or electronic forms without written permission of IGI is prohibited.


The transaction model described in the next section is the countermeasure transaction model described by Frank and Zahle (1998), Frank (1999, 2004), and Frank and Kofod (2002). This model owes many of its properties to, for example, Garcia-Molina and Salem (1987); Mehrotra, Rastogi, Korth, and Silberschatz (1992); Weikum and Schek (1992); and Zhang, Nodine, Bhargava, and Bukhres (1994). The countermeasure transaction model (Frank & Zahle, 1998) is important because it is the first systematic attempt to find countermeasures against isolation anomalies caused by using short duration locking. In this article, I have generalized this idea and included new countermeasures that especially apply to isolation level anomalies.

Kempster, Stirling, and Thanisch (1999) have described isolation anomalies in detail. The objective of this article is not to evaluate concurrency control methods as such, as this has been done by Thomasian (1998), for example.

In the following, I will first describe how the atomicity property can be implemented by using extended transaction models. This is important if short duration locks are used. Next, I will describe and evaluate some of the methods most used to increase concurrency between transactions. The results of the evaluation will either be explained or adopted from references.

ATOMICITY IMPLEMENTATION FOR TRANSACTIONS THAT USE SHORT DURATION LOCKS

An updating transaction has the atomicity property and is called atomic if either all or none of its updates are executed. If short duration locks are used, the atomicity property cannot be implemented automatically by the DBMS, and therefore extended transaction models are recommended. In extended transaction models, a global transaction consists of a root transaction (client transaction) and several single-site subtransactions (server transactions). The subtransactions can be nested transactions (i.e., a subtransaction may be a parent transaction for other subtransactions). In the following, I will give a broad outline of how the atomicity property is implemented in extended transaction models.

In extended transaction models, a global transaction is partitioned into the following types of subtransactions that may be executed in different locations:

• The pivot subtransaction manages the atomicity of the global transaction (i.e., the global transaction is committed when the pivot subtransaction is committed locally). If the pivot subtransaction aborts, all the updates of the other subtransactions must be compensated or not be executed.

• Compensatable subtransactions may all be compensated. Compensatable subtransactions must always be executed before the pivot subtransaction, to make it possible to compensate them if the pivot subtransaction cannot be committed. Compensation is achieved by executing a compensating subtransaction.

• Retriable subtransactions are designed so that the execution is guaranteed to commit locally (sooner or later) if the pivot subtransaction is committed. An update propagation tool is used to automatically resubmit the request for execution until the subtransaction has been committed locally (i.e., the update propagation tool is used to force the retriable subtransaction to be executed).

Executing compensatable, pivot, and retriable subtransactions, in that order, implements the global atomicity and atomicity property of a transaction (Breibart et al., 1992).
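The execution order can be illustrated with a short sketch. It is a single-process simplification under stated assumptions: subtransactions are plain Python callables, an exception stands for a local abort, and a bounded retry loop (the hypothetical `max_attempts` parameter) stands in for the update propagation tool, which in the model resubmits until the subtransaction commits.

```python
from typing import Callable

Action = Callable[[], None]


def run_global_transaction(
    compensatable: list[tuple[Action, Action]],  # (subtransaction, compensation)
    pivot: Action,
    retriable: list[Action],
    max_attempts: int = 5,  # assumption: stands in for unbounded resubmission
) -> bool:
    """Run compensatable, pivot, and retriable subtransactions in that order.
    Returns True if the global transaction commits, False if it is undone."""
    compensations = []
    try:
        for action, compensation in compensatable:
            action()
            compensations.append(compensation)
        pivot()  # the global transaction is committed when the pivot commits
    except Exception:
        # The pivot (or an earlier step) failed: compensate in reverse order.
        for compensation in reversed(compensations):
            compensation()
        return False
    for action in retriable:
        for _ in range(max_attempts):
            try:
                action()
                break
            except Exception:
                continue  # resubmit the request
        else:
            raise RuntimeError("retriable subtransaction did not commit")
    return True
```

For example, a stock reservation could be the compensatable step, a payment the pivot, and a shipping request a retriable step.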

METHODS TO INCREASE CONCURRENCY

In this section, I will analyze and evaluate pessimistic concurrency control, optimistic concurrency control, low isolation levels, and short duration locks as different methods to increase concurrency. Table 1 gives an overview of the properties of the methods used to increase concurrency.

Pessimistic Concurrency Control

Locking protocols implement serializability by using locks (i.e., each transaction locks the data it uses to prevent other transactions from accessing its data). Locking protocols can be described as pessimistic, because they assume that data used by one transaction may be needed by other transactions and, therefore, it is better to lock the data.

Pessimistic concurrency control can be optimized by using shared and exclusive locks for reading and writing/updating, respectively. All locks should have long duration (i.e., they must be held until the transaction has been committed). However, the designer of the transaction may divide it into subtransactions that manage the locks independently of the parent transaction. In this way, the programmer can force short duration locks to be used. If short duration locks are used, approximated ACID properties should be used, as described previously. Deadlock occurs when two or more transactions are in a simultaneous waiting position, whereby each is waiting for the other to release a lock before it can proceed. By using short duration locks, it is possible to prevent and reduce the number of deadlocks, because the probability of deadlock falls as fewer records are locked in a subtransaction.
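The waiting condition that defines a deadlock can be made concrete with a wait-for graph, a standard device that is not part of the evaluation in this article: an edge from one transaction to another means that the first is waiting for a lock held by the second, and a deadlock is a cycle in the graph. The sketch below is illustrative; the function name and the dictionary representation are assumptions of the example.

```python
from typing import Optional


def find_deadlock(waits_for: dict[str, set[str]]) -> Optional[list[str]]:
    """Return a list of transactions that wait for each other in a cycle,
    or None if the wait-for graph contains no cycle."""
    explored = set()

    def visit(txn: str, path: list[str]) -> Optional[list[str]]:
        if txn in path:
            # txn is waiting, directly or indirectly, for itself.
            return path[path.index(txn):]
        if txn in explored:
            return None
        explored.add(txn)
        for blocker in sorted(waits_for.get(txn, ())):
            cycle = visit(blocker, path + [txn])
            if cycle:
                return cycle
        return None

    for txn in sorted(waits_for):
        cycle = visit(txn, [])
        if cycle:
            return cycle
    return None
```

When a cycle is found, one of the transactions in it must be aborted so that the others can proceed.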

Franaszek and Robinson (1985) show that optimistic concurrency control normally gives more concurrency between transactions than locking protocols. However, in a hotspot, in which a record is updated by many concurrent transactions, pessimistic concurrency control is generally better than optimistic concurrency control (O’Neil, 1986).
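Why a hotspot is unfavorable to optimistic concurrency control can be seen in a small sketch of validation at commit time. The sketch is single-threaded and purely illustrative: the class `VersionedRecord`, its version counter, and the method names are assumptions of this example, not the interface of any particular DBMS.

```python
class VersionedRecord:
    """A record whose version number grows with every committed update."""

    def __init__(self, value: int) -> None:
        self.value = value
        self.version = 0

    def read(self) -> tuple[int, int]:
        # No lock is taken; the reader only remembers the version it saw.
        return self.value, self.version

    def try_commit(self, new_value: int, read_version: int) -> bool:
        # Validation: the update is accepted only if no other transaction
        # has committed an update since this transaction read the record.
        if self.version != read_version:
            return False  # conflict: the transaction must be restarted
        self.value = new_value
        self.version += 1
        return True


# Two transactions read the same hotspot record before either commits.
hot = VersionedRecord(100)
value_1, version_1 = hot.read()
value_2, version_2 = hot.read()
first_ok = hot.try_commit(value_1 + 10, version_1)   # succeeds
second_ok = hot.try_commit(value_2 + 5, version_2)   # fails validation
# The second transaction is restarted: it reads again and then commits.
value_2, version_2 = hot.read()
retry_ok = hot.try_commit(value_2 + 5, version_2)
```

With many concurrent updates of the same record, most transactions fail validation at least once and repeat their work, whereas a lock would only make them wait.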

Optimistic Concurrency Control

Optimistic concurrency control assumes that data used by one transaction normally will not be needed by other concurrent transactions, and therefore all transactions have access to all data. However, at commit time, the transaction is checked for conflicts. If a conflict has occurred, the offending transaction is restarted. Otherwise, all updates of the transaction are written to the database and the transaction is committed. Optimistic concurrency control does not use locks because all transactions have access to all data until commit time. By dividing a transaction into subtransactions, it is possible to increase concurrency as the time in which conflicts may occur is reduced. However, if subtransactions are used, approximated ACID properties should be used in the same way as described previously.

Low Isolation Levels

The isolation level of a transaction for a table is defined as the degree of interference tolerated on the part of concurrent transactions. Therefore, it is possible to define many different isolation levels. Table 2 defines the relationship between the isolation levels of the SQL standard and the isolation anomalies that are accepted for a given isolation level.

The lower the isolation level of a transaction, the more concurrency is allowed and the more different isolation anomalies may occur for the transaction. Therefore, to achieve high performance and availability, it is important to select as low an isolation level as the applications can tolerate. The isolation levels defined by the SQL standard can primarily increase the concurrency in read–write conflicts, whereby transactions read, respectively write and update, a table. As described later, this is not as good as short duration locking, which can reduce or eliminate both read–write and write–write conflicts.

Table 1. Evaluation overview of methods to increase concurrency

| Evaluation criteria | Pessimistic concurrency control | Optimistic concurrency control | Low isolation levels | Short duration locks |
|---|---|---|---|---|
| Concurrency | Allows concurrency except when conflicting locks occur | Allows concurrency except for conflicting transactions | Concurrency is improved at the costs of “non-lost-update” anomalies | Concurrency is improved at the costs of all types of isolation anomalies |
| Deadlock | When deadlock occurs one of the transactions must be aborted | Eliminates deadlock, but one of the transactions must be aborted in case of a conflict | Can eliminate deadlocks caused by read-write conflicts | Can reduce/eliminate deadlocks caused by both read-write and write-write conflicts |
| Hotspots | Pessimistic concurrency is generally better than optimistic concurrency control in hotspots | Pessimistic concurrency is generally better than optimistic concurrency control in hotspots | Can eliminate read-write conflicts in hotspots | Can reduce both read-write and write-write conflicts in hotspots |
| Atomicity property | No problems | No problems | No problems | Extended transaction models should be used |
| Consistency property | No problems | No problems | Countermeasures may be used to manage isolation anomalies | Asymptotic consistency should normally be used |
| Isolation property | No problems except for long-duration transactions | No problems except for long-duration transactions | Countermeasures may be used to manage isolation anomalies | Countermeasures should always be used to manage isolation anomalies |
| Durability property | No problems | No problems | No problems | No problems if the atomicity property is implemented |
| Distribution options | Distributed concurrency control will decrease performance and availability | Distributed concurrency control will decrease performance and availability | May be implemented in a distributed DBMS without further problems | Retriable subtransactions may be necessary to implement the atomicity property |
| Development costs | A DBMS facility | A DBMS facility | Extra costs for countermeasures against isolation anomalies | Extra development costs for approximated ACID properties |

Table 2. Anomalies allowed by the different isolation levels of SQL

| SQL isolation level | Dirty read | Nonrepeatable read | Phantoms |
|---|---|---|---|
| Serializable | No | No | No |
| Repeatable read | No | No | Yes |
| Read committed | No | Yes | Yes |
| Read uncommitted | Yes | Yes | Yes |

Countermeasures against the isolation anomalies enable applications to tolerate isolation anomalies to a certain degree. In the next section, I will describe countermeasures against the isolation anomalies that may occur when short duration locks are used. In principle, these countermeasures can also be used against anomalies caused by low isolation. However, countermeasures against lost updates are an exception as long as low isolation levels do not allow lost updates.

In the following, I will describe countermeasures that can be used to design transactions that can tolerate the isolation anomalies caused by selecting low isolation levels.

The semantic analysis countermeasure analyzes the semantic meaning of the data in context of its use to relax isolation. For example, from a semantic point of view, dirty data are probably correct, because the data normally would have been committed if an error had not occurred and caused the update to be aborted. Therefore, it is normally safe to read dirty data if the data cannot be misused (e.g., selling from empty stocks). On the other hand, it is normally risky to read nonrepeatable data because the data are out of date and therefore might be wrong. In other words, data that cannot be misused should have an isolation level in which dirty reads are accepted and nonrepeatable reads are forbidden. Unfortunately, such an isolation level does not yet exist in the SQL standard. However, a great deal of research into the foundations of isolation levels has been done (Kempster et al., 1999), and some DBMS products do not respect the isolation level limitations of the SQL standard.

Alternatively, if data with a low isolation level can be misused, the data must be protected by higher isolation, provided that other countermeasures cannot give sufficient protection.

The restricted use countermeasure limits the use of dirty and nonrepeatable read data. For example, this type of data must not be written to the database either directly or in a modified form, as the value of the data may be inconsistent. The data may be used for decision-making if the data are updated using the pessimistic view countermeasure or if the value of the data is not time critical. However, a user should know that, in both cases, the data might be inconsistent with other data stored in the database.

Short Duration Locks

In distributed systems, transactions often consist of a root transaction in a client location that manages several subtransactions in one or more servers. Each time the root transaction receives new information from the user, it starts a new subtransaction in a server until the transaction is finished. This type of transaction is long lived, as the user may be relatively slow in making his or her input, and other concurrent users do not want to wait for the first user to finish his or her job before they can access the same data. This is especially the case if the first user is interrupted or lacks information to finish his or her job. Therefore, short duration locks are often used in practice. Short duration locks are local locks that are released as soon as possible (i.e., data will, for example, not be locked across locations). In systems with relatively many updates, such as ERP-systems and e-commerce systems, low isolation levels cannot solve the availability problem, as all update locks must be exclusive. In such situations, I recommend using short duration locks and an extended transaction model that solves the problems with the missing ACID properties. Next, I will describe some of the most important countermeasures against isolation anomalies that occur when the isolation property is reduced by short duration locking.

The reread countermeasure is primarily used to prevent the lost update anomaly. Transactions that use this countermeasure read a record twice, using short duration locks for each read. If a second transaction has changed the record between the two readings, the transaction aborts itself after the second read. Often, it is not acceptable to lock records across a dialogue with a user, as no one knows how long it will take the user to make the input data. In such a situation, the reread countermeasure may be used to protect against the lost update anomaly in the following way:

First, the application reads all the records needed using short duration shared locks, which are released after the readings. Next, the user enters data for the updating process, assuming that no other transaction will change the records. After the user has entered the data, the application rereads the records, this time using short duration exclusive locks. The update will be executed and committed only if the records have not been changed since they were read the first time. (An update time-stamp in all database records can be used to detect whether data have been changed since they were read the first time.) The reread countermeasure may prevent the lost update anomaly and the dirty read anomaly in compensatable and pivot subtransactions. The reread countermeasure is the most used measure in practice.
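The reread steps described above can be sketched as follows. This is a minimal illustration, not a definitive implementation: it assumes an in-memory SQLite database, a hypothetical `stock` table, and an integer `version` column standing in for the update time-stamp. `BEGIN IMMEDIATE` is used here to approximate the short duration exclusive lock taken for the reread.

```python
import sqlite3


def open_db():
    # isolation_level=None puts the connection in autocommit mode, so each
    # plain statement releases its locks as soon as it finishes.
    conn = sqlite3.connect(":memory:", isolation_level=None)
    conn.execute(
        "CREATE TABLE stock (id INTEGER PRIMARY KEY, qty INTEGER, version INTEGER)"
    )
    conn.execute("INSERT INTO stock VALUES (1, 10, 0)")
    return conn


def first_read(conn, item_id):
    """First read: no lock is held once the statement has completed."""
    return conn.execute(
        "SELECT qty, version FROM stock WHERE id = ?", (item_id,)
    ).fetchone()


def reread_and_update(conn, item_id, seen_version, new_qty):
    """Reread under a write lock; update only if the record is unchanged."""
    conn.execute("BEGIN IMMEDIATE")  # write lock held only for this short step
    try:
        row = conn.execute(
            "SELECT version FROM stock WHERE id = ?", (item_id,)
        ).fetchone()
        if row is None or row[0] != seen_version:
            conn.execute("ROLLBACK")  # record changed in the meantime: abort
            return False
        conn.execute(
            "UPDATE stock SET qty = ?, version = version + 1 WHERE id = ?",
            (new_qty, item_id),
        )
        conn.execute("COMMIT")
        return True
    except Exception:
        if conn.in_transaction:
            conn.execute("ROLLBACK")
        raise


conn = open_db()
qty, seen = first_read(conn, 1)                   # read before the user dialogue
other_ok = reread_and_update(conn, 1, seen, 7)    # another user updates first
stale_ok = reread_and_update(conn, 1, seen, 5)    # stale version is rejected
```

In this run the second call is refused because the version it saw is out of date, so the first update is not overwritten.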

The commutative updates countermeasure can prevent lost updates merely by using commutative operators. Adding an amount to an account and subtracting an amount from it are examples of commutative updates. If a subtransaction only has commutative updates, it may be designed as commutable with other subtransactions that also only have commutative updates. This is very important because compensatable, compensating, and retriable subtransactions have to be commutative to enable the countermeasure to prevent the lost update anomaly. The commutative updates countermeasure was described earlier in, for example, Sagas (Garcia-Molina & Salem, 1987) and open nested transactions (Weikum & Schek, 1992), where the countermeasure is used in both central and distributed databases.
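A small sketch may help show why commutative operators avoid lost updates. It assumes a single account balance and two concurrent updates; the function names are hypothetical and chosen only for this illustration. Delta updates give the same result in every order, whereas absolute writes computed from a stale read let the last writer erase the other update.

```python
from itertools import permutations


def final_balances(start, deltas):
    """Apply the deltas in every possible order and collect the outcomes."""
    outcomes = set()
    for order in permutations(deltas):
        balance = start
        for delta in order:
            balance += delta  # commutative update: add or subtract an amount
        outcomes.add(balance)
    return outcomes


def overwrite_interleaving(start, deltas):
    """Every transaction reads `start` before any of them writes, then
    writes an absolute value. The last write wins; the others are lost."""
    stale_writes = [start + delta for delta in deltas]
    return stale_writes[-1]


commutative = final_balances(100, [50, -30])      # a single outcome: 120
lost_update = overwrite_interleaving(100, [50, -30])  # 70: the +50 is lost
```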

The pessimistic view countermeasure against short duration lock anomalies reduces or eliminates the dirty read anomaly, the nonrepeatable read anomaly, or both by giving the users a pessimistic view of the situation. In other words, the user cannot misuse the information. The purpose is to eliminate the risk involved in using data for which long duration locks should have been used. A pessimistic view countermeasure may be implemented by using the following:

• The pivot or compensatable subtransactions for updates that limit the users’ options, and

• The pivot or retriable subtransactions for updates that increase the users’ options.
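One possible reading of the two rules above is sketched below for a stock quantity. This is an interpretation under stated assumptions, not the author's implementation: the class `StockView` and its method names are hypothetical, a reservation is treated as the update that limits the users' options (applied at once and undone only by compensation), and a delivery is treated as the update that increases their options (made visible only in a later retriable step).

```python
class StockView:
    """What concurrent users see: never more stock than is certain to exist."""

    def __init__(self, qty):
        self.visible = qty
        self.pending_increases = []

    def reserve(self, n):
        # Limits the users' options, so it is applied immediately
        # (compensatable step: can be undone by compensate_reserve).
        if n > self.visible:
            raise ValueError("not enough visible stock")
        self.visible -= n

    def compensate_reserve(self, n):
        # Compensating step if the enclosing transaction is abandoned.
        self.visible += n

    def announce_delivery(self, n):
        # Increases the users' options, so it is not shown yet.
        self.pending_increases.append(n)

    def commit_deliveries(self):
        # Retriable step, run once the enclosing transaction is committed.
        self.visible += sum(self.pending_increases)
        self.pending_increases.clear()


view = StockView(10)
view.reserve(4)             # visible stock drops to 6 at once
view.announce_delivery(5)   # visible stock stays at 6
view.commit_deliveries()    # visible stock becomes 11
```

Until the delivery is committed, other users see the lower figure, so a dirty or nonrepeatable read can only understate what is available.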

FUTURE TRENDS

In the current SQL standard, it is possible for a program only to select an isolation level for itself. However, a program may know if it is updating risky data, and, therefore, it should also be possible for a program to force other programs to accept a minimum of locking rules (i.e., isolation levels) while it is running. The number of isolation levels of the SQL standard is also very limited, and there is no reason for ordering them in a hierarchy. By dropping all these restrictions, it is possible to both increase concurrency and find many more countermeasures to manage the risky anomaly situations. Especially when short duration locking is used, it is important that an application can force other applications to accept a minimum of locking rules, as the application may know that the risk of seeing the update depends on whether some “stock” has been increased or diminished.

Recently, there have been major developments within replication methods. How to manage concurrency should be integrated in the decision of how to manage recovery and data replication.

CONCLUSION

In this article, I have evaluated the most important methods for increasing concurrency between transactions. Because different transactions have different needs, it is important that the evaluation gives an overview of the advantages and disadvantages of the different optimization methods.

Short duration locking and low isolation levels are mandatory for long-lived transactions, as long duration locks per definition cannot be recommended for such transactions. As all transactions with a user dialogue are long lived, it is important to know how these techniques can be used to increase concurrency.

The countermeasure transaction model (Frank & Zahle, 1998) is important, as it is the first systematic attempt to find countermeasures against isolation anomalies caused by using short duration locking. In this article, I have generalized this idea and included new countermeasures that especially apply to isolation-level anomalies. I have also described how the different optimization methods can be integrated. This is important, as short duration locking and the use of low isolation levels are independent methods to increase concurrency, and therefore they can support each other. I hope that my analysis will make it easier to increase concurrency between database transactions in practice.

REFERENCES

Berenson, H., Bernstein, P., Gray, J., Melton, J., O’Neil, E., & O’Neil, P. (1995). A critique of ANSI SQL isolation levels. Proceedings of the ACM SIGMOD Conference (pp. 1-10).
