Rivero L.Encyclopedia of database technologies and applications.2006

ral statements to expressions using a combination of temporal and conventional relational algebraic operators. Currently, a proposal to add TSQL to the existing ANSI and ISO SQL standards is under consideration by the respective governing bodies.

BUSINESS APPLICATIONS

One of the aims of this article is the integration of the theoretical and practical aspects of temporal databases. To this point, the presentation has dealt with theoretical aspects of temporal databases. Now, we will look at some of the business applications of the temporal databases. The first type is the data warehouse. Data warehouses are enterprise-wide databases. These are enormous corporate databases, which are generally used to store all information about a company. As stated earlier, the element of time is crucial to many businesses, and it is in the data warehouse that the time dimension can be stored and utilized for querying the data. It is important to relate data warehouses with temporal databases. Every data warehouse has a time dimension that makes every data warehouse a temporal database (Kimball, 1997; Snodgrass, 1998). “The time dimension is a unique and powerful dimension in every datamart and enterprise data warehouse” (Kimball, 1997). Golan and Edwards (1993) discuss how temporal rules for trading at the Toronto Stock Exchange (TSE) were developed by First Marathon Securities in Canada, who derived relationships between 32 stock and economic indicators including TSE, DOW, S&P, P/E, and seven major industry indexes over a six-month period. The rules describe stock behavior of major TSE trading companies and their sensitivity to market or economic indices. The reported benefits include the description of relationships and stocks’ sensitivities to various indices.

Stantic, Khanna, and Thornton (2004) state that most modern database applications contain a significant amount of time-dependent data and a substantial proportion of this data is now-relative, that is, current now. Their research addresses the indexing of now-relative data, which is a natural and meaningful part of every temporal database as well as the focus of most queries. They propose a logical query transformation that relies on the representation of current time and the geometrical features of spatial access methods, and empirically demonstrate that this method is efficient on now-relative bi-temporal data, outperforming a straightforward maximum time stamp approach by a factor of more than 20, both in number of disk accesses and CPU usage.
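To make the notion of now-relative data concrete, the following minimal Python sketch stores open-ended valid-time intervals with a sentinel end value, which is one plausible reading of the maximum time stamp approach mentioned above. The record layout, the names `FactVersion`, `FOREVER`, `valid_at`, and `current_rows`, and the sample data are illustrative assumptions; they are not taken from Stantic, Khanna, and Thornton (2004), and the sketch does not implement their query transformation.

```python
from dataclasses import dataclass
from datetime import date

# Assumption: "now" is encoded as the largest representable date.
FOREVER = date.max


@dataclass(frozen=True)
class FactVersion:
    key: str
    value: int
    valid_from: date  # inclusive
    valid_to: date    # exclusive; FOREVER means "still current"


def valid_at(rows, key, when):
    """Return the value of `key` that was valid on day `when`, or None."""
    for row in rows:
        if row.key == key and row.valid_from <= when < row.valid_to:
            return row.value
    return None


def current_rows(rows):
    """Return the now-relative rows, i.e. those whose validity is still open."""
    return [row for row in rows if row.valid_to == FOREVER]


# Hypothetical history of one time-varying attribute.
salary_history = [
    FactVersion("alice", 50000, date(2003, 1, 1), date(2004, 7, 1)),
    FactVersion("alice", 55000, date(2004, 7, 1), FOREVER),
]
```

With this encoding, a query for the current state and a query for a past state use the same interval comparison; the price is that every now-relative row carries the same extreme end value, which is the situation an index has to cope with.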

The scientific laboratory is another good business application where a temporal database plays an important role. A laboratory performs many tests and many versions of the same test. Each test often involves precise timing sequences. To ensure that time information is not lost, it must be stored in a database. Since the element of time in these tests cannot be discarded, the database in which the information is stored must be a temporal database. One of the major benefits of storing scientific test information in a temporal database that supports a temporal query language is that new patterns and knowledge can be mined from the database (Loomis, 1997).

The third type of business application relies heavily on sales and marketing data. Success in sales and marketing depends heavily on effective timing. The appropriate time to approach a customer and when to advertise in certain locations is the knowledge that is essential for such types of businesses. The ability to correctly forecast this information is best provided by a temporal database. Some of the uses for temporal databases are in forecasting future events and analyzing patterns.

The last type of business application is multimedia. Multimedia is one of the fastest growing areas of business today, especially as conducted over the Internet. The time will soon come when a person will be able to order movies over the Internet and view them on a personal computer. The process is as follows: the person orders the movie, video images are stored in a multimedia database, and these are synchronized with the audio files in either the same or a different database. Synchronization means simultaneity and involves a time element.

Because multimedia is time-based, a great deal of synchronization is necessary. These media components will display in a precise synchronized order. Multimedia objects have temporal and spatial relationships that must be maintained in their presentation. Some of this data has real-time constraints in its delivery to client stations (Ozsu, 2003).

Thus, the multimedia database is another instance of a temporal database application like data warehousing.

THE FUTURE OF TEMPORAL DATABASES

Since the foundations and principles of temporal databases are firmly rooted in the relational model of data and represent an evolutionary step, not a revolutionary one, they will stand the test of time (Date et al., 2003). As the business environment becomes increasingly competitive, the knowledge within an organization becomes an invaluable resource. To track this information, businesses must implement temporal databases. To provide businesses with this capability to keep track of time-varying data, two developments are foreseen. Tuzhilin (1993) provided a good example of the benefits of integrating a temporal database with an existing business system to increase knowledge.

Second, a temporal extension will be added to existing ANSI and ISO SQL standards. This development will enable users to take advantage of new temporal features in the major database products. This extension will allow a better quality and quantity of knowledge to be stored in and mined from the newly temporalized databases, helping businesses gain competitive advantage. Mokbel, Xiong, and Aref (2004) discuss the Scalable Incremental hash-based Algorithm (SINA), a new algorithm for evaluating a set of concurrent continuous spatio-temporal queries. This algorithm was designed to incrementally evaluate continuous spatio-temporal queries and provide scalability in terms of the number of concurrent continuous spatio-temporal queries. The experimental results show that SINA is scalable and is more efficient than other index-based spatio-temporal algorithms.

CONCLUSION

With respect to the expected role and impact of the time construct and its usages in future database systems, active mechanisms based on event-condition-action rules will play an important role in next-generation database management systems. This can be useful to several novel applications including planning, scheduling, project management, medical information systems, geographical information systems, and natural language processing systems. Finally, although many temporal extensions of the relational data model have been proposed, there is no comparable amount of work on object-oriented data models or on extending the standard for object-oriented databases, defined by the Object Data Management Group (ODMG), with temporal extensions. The investigation, on a formal basis, of the main issues arising from the introduction of time in an object-oriented model could lead to developments in spatio-temporal databases supporting spatial objects with continuously changing position and extent. The graphical user interfaces for spatio-temporal information, query processing in spatio-temporal databases, and storage structures and indexing techniques for spatio-temporal databases could have a strategic impact on business applications such as data mining and knowledge discovery.

REFERENCES

Böhlen, M.H., Jensen, C.S., & Snodgrass, R.T. (2000, December). Temporal statement modifiers. ACM Transactions on Database Systems, 25(4), 407-456.

Date, C.J. (2003). On modeling history. Retrieved January 29, 2005, from http://www.dbdebunk.com/page/page/1027290.htm

Date, C.J. (2004). Introduction to database systems (8th ed.). Addison-Wesley.

Date, C.J., Darwen, H., & Lorentzos, N.A. (2003). Temporal data and the relational model. Morgan Kaufmann.

David, M.M. Multimedia databases through the looking glass. Database Programming & Design Online. Retrieved January 29, 2005, from http://www.dbpd.com/9705davd.htm

Gadia, S.K., & Nair, S.S. (1993). Temporal databases: A prelude to parametric data. In A. Tansel et al. (Eds.), Temporal databases: Theory, design, and implementation (pp. 28-66). Benjamin/Cummings.

Golan, R., & Edwards, D. (1993, July). Temporal rules discovered using DataLogic/R with stock market data. RSKD-93, 61-72.

Jensen, C.S., Mark, L., Roussopoulos, N., & Sellis, T. (1993, January). Using differential techniques to efficiently support transaction time. The International Journal on Very Large Data Bases, 2(1), 75-116.

Jensen, C.S., et al. (1993). Glossary. In A. Tansel et al. (Eds.), Temporal databases: Theory, design, and implementation (pp. 621-633). Benjamin/Cummings.

Kimball, R. (1997, July). It’s time for time. DBMS Online. Retrieved January 29, 2005, from http://www.dbmsmag.com/9707d05.html

Loomis, T. (1997, August). Audit history and time-slice archiving in an object DBMS for laboratory databases. Hewlett Packard Journal.

Mokbel, M.F., Xiong, X., & Aref, W.G. (2004). SINA: Scalable incremental processing of continuous queries in spatio-temporal databases. ACM Press, 623-634.

Navathe, S.B., & Rafi, A. (1993). Temporal extensions to the relational model and SQL. In A. Tansel et al. (Eds.), Temporal databases: Theory, design, and implementation (pp. 92-109). Benjamin/Cummings.

Ozsoyoglu, G., & Snodgrass, R.T. (1995, August). Temporal and real-time databases: A survey. IEEE Transactions on Knowledge and Data Engineering.

Ozsu, M.T. (0000). A new foundation. Database Programming & Design Online. Retrieved January 29, 2005, from http://www.dbpd.com/ozsu.htm

Snodgrass, R.T. (1998, June). Of duplicates and septuplets. Database Programming & Design, 46-49.

Stantic, B., Khanna, S., & Thornton, J. (2004). An efficient method for indexing now-relative bitemporal data. ACM International Conference Proceeding Series, 27, 113-122.

Steiner, A. (2003). What are temporal databases? Retrieved January 29, 2005, from http://www.inf.ethz.ch/personal/steiner/TemporalDatabases/TemporalDB.html

Tansel, A., et al. (Eds.). (1993). Part I: Extensions to the relational model. Temporal databases: Theory, design, and implementation (pp. 1-5). Benjamin/Cummings.

Tuzhilin, A. (1993). Applications of temporal databases to knowledge-based simulations. In A. Tansel et al. (Eds.), Temporal databases: Theory, design, and implementation (pp. 580-593). Benjamin/Cummings.

KEY TERMS

Bi-Temporal Database: This database supports both types of time that are necessary for storing and querying time-varying data. It aids significantly in knowledge discovery, because only the bi-temporal database is able to fully support the time dimension on three levels: the DBMS level with transaction time, the data level with valid time, and the user-level with user-defined time.

Granularity of Time: A concept that denotes precision in a temporal database. An example of progressive time granularity includes a day, an hour, a second, or a nanosecond.

Temporal Databases

ODMG: Object Data Management Group. The ODMG group completed its work on object data management standards in 2001 and was disbanded. The ODMG submitted the ODMG Java Binding to the Java Community Process as a basis for the Java Data Objects Specification (JDO). JDO provides transparent persistence (http://www.odmg.org/).

RDBMS: Relational Database Management Systems.

Spatio-Temporal Database: A database that supports spatial objects with continuously changing position and extent. A spatio-temporal element is a finite union of spatio-temporal intervals; spatio-temporal elements are closed under the set-theoretic operations of union, intersection, and complementation. The graphical user interfaces for spatio-temporal information, query processing in spatio-temporal databases, and storage structures and indexing techniques for spatio-temporal databases could have a strategic impact on business applications such as data mining and knowledge discovery.

SQL: Structured Query Language is a standard interactive and programming language for getting information from and updating a database. Although SQL is both an ANSI and an ISO standard, many database products support SQL with proprietary extensions to the standard language. Queries take the form of a command language that lets you select, insert, update, find out the location of data, and so forth. There is also a programming interface.

Temporal Database: A temporal database is a database that records time-variant information. It is defined as a union of two sets of relations Rs and R1, where Rs is the set of all static relations and R1 is the set of all time-varying relations.

TSQL: Temporal Structured Query Language. With TSQL, ad hoc queries can be performed without specifying any time-varying criteria. The new criteria clause would be either ‘WHEN’ or ‘WHILE’. Either name captures the idea of a time-varying condition. Currently, a proposal to add TSQL to the existing ANSI and ISO SQL standards is under consideration by the respective governing bodies.

Text Categorization

Fabrizio Sebastiani

Università di Padova, Italy

INTRODUCTION

During the last 15 years, the production of documents in digital form has exploded, due to the increased availability of hardware and software tools for generating digital data (e.g., personal computers, digital cameras, word processors) and for digitizing data that had been originated in nondigital form (e.g., scanners, OCR software). This phenomenon has also strongly affected “novel” digital media such as imagery, video, music, and so forth. However, natural language text has been, at least from a quantitative viewpoint, the medium most responsible for this explosion, due to its immediacy and to the ubiquity of word processing and text authoring tools. As a consequence, there is an increased need for hardware and software solutions for storing, organizing, and retrieving the large amounts of digital text that are being produced, with an eye towards its future use.

The design of such solutions has traditionally been the object of study of information retrieval (IR), the discipline that is broadly concerned with the computer-mediated access to data with poorly specified semantics. While all of the previously mentioned types of media fall within the scope of IR, it is unquestionable that text has been its major focus of attention ever since its inception in the late 1950s.

The following are two main directions one may take for providing convenient access to a large, unstructured repository of text:

•Providing powerful tools for searching relevant documents within this large repository. This is the aim of text search, a subdiscipline of IR concerned with building systems that accept a natural language query and return as a result a list of documents ranked according to their estimated relevance to the user’s information need. Nowadays, the “tip of the iceberg” of text search is represented by Web search engines, but commercial solutions for the text search problem were being delivered decades before the birth of the Web.

•Providing powerful tools for turning this unstructured repository into a structured one, thereby easing storage, search, and browsing. This is the aim of text classification, a subdiscipline of IR concerned with building systems that partition an unstructured collection of documents into meaningful groups.

There are two main variants of text classification. The first is text clustering, which is concerned with finding a latent yet undetected group structure in the repository, and the second is text categorization (TC), which is concerned with structuring the repository according to a scheme given as input. In other words, while in the former task the set of groups (or classes, or labels) is not known in advance, it is predefined and known in the latter. The latter task will be the focus of this paper.

Note that the underlying notion of TC, that of membership of a document dj in a class ci (based on the semantics of dj and ci), is inherently subjective. This is because different classifiers (be they human or machine) might disagree on whether dj belongs in ci. This means that membership cannot be determined with certainty, which in turn means that any classifier (be it human or machine) will be prone to misclassification errors. It is thus customary to evaluate text classifiers by applying them to a set of labelled (i.e., preclassified) documents (which here plays the role of a “gold standard”). In this way, the accuracy of the classifier may be measured by the degree of coincidence between its classification decisions and the labels originally attached to the documents.

Applications

Maron’s (1961) seminal paper is usually taken to mark the official birth date of TC, which at the time was called automatic indexing. This name reflected the fact that the main (or only) application that was then envisaged for TC was to automatically index (i.e., generating internal representations for) scientific articles for Boolean information retrieval systems. In fact, since index terms for these representations were drawn from a fixed, predefined set of such terms, we can regard this type of indexing as an instance of TC once index terms play the role of classes. The importance of TC increased in the late ’80s and early ’90s with the need to organize the increasingly larger quantities of digital text being handled in organizations at all levels. Since then, frequently pursued applications of TC technology have been

Copyright © 2006, Idea Group Inc., distributing in print or electronic forms without written permission of IGI is prohibited.

•newswire filtering (i.e., the grouping of news stories produced by news agencies according to thematic classes of interest; Hayes & Weinstein, 1990);

•patent classification (i.e., the organization of patents into taxonomies so as to ease the detection of existing patents related to a new patent; Fall, Törcsvári, Benzineb, & Karetka, 2003); and

•Web page classification (i.e., the grouping of Web pages [or sites] according to the taxonomic classification schemes typical of Web portals; Dumais & Chen, 2000).

The previous applications all have a certain thematic flavour, in the sense that classes tend to coincide with topics, or disciplines. However, TC technology has been applied to domains that are not thematic in nature, among which are

•spam filtering (i.e., the grouping of personal e-mail messages into the two classes [LEGITIMATE and SPAM] so as to provide effective user shields against unsolicited bulk mailings; Drucker, Vapnik, & Wu, 1999);

•authorship attribution (i.e., the automatic identification of the author of a text among a predefined set of candidate authors; Diederich, Kindermann, Leopold, & Paaß, 2003);

•author gender detection (i.e., a special case of the previous task in which the issue is deciding whether the author of the text is a MALE or a FEMALE; Koppel, Argamon, & Shimoni, 2002);

•genre classification (i.e., the identification of the nontopical nature of the text, such as determining if a product description is a PRODUCT REVIEW or an ADVERTISEMENT; Stamatatos, Fakotakis, & Kokkinakis, 2000);

•survey coding (i.e., the classification of respondents to a survey based on the textual answers they have returned to an open-ended question; Giorgetti & Sebastiani, 2003); or even

•polarity detection (i.e., deciding if a product review is a THUMBS UP or a THUMBS DOWN; Pang, Lee, & Vaithyanathan, 2002).

TECHNIQUES

Approaches

In the 1980s, the most popular approach to TC was one based on knowledge engineering, whereby a knowledge engineer and a domain expert working together built an expert system that automatically classified text. Typically, such an expert system would consist of a set of “if ... then ...” rules, to the effect that a document was assigned to the class specified in the “then” clause only if the linguistic expressions (i.e., words) specified in the “if” part occurred in the document. The drawback of this approach was the high cost of humanpower required for defining the rule set and maintaining it (i.e., for updating the rule set as a result of possible subsequent additions or deletions of classes or as a result of shifts in the meaning of the existing classes).

In the 1990s, this approach was superseded by the supervised machine learning approach, whereby a general inductive process (the learner) is fed with a set of “training” documents, preclassified according to the categories of interest. By observing the characteristics of the training documents, the learner may generate a model (the classifier) of the conditions that are necessary for a document to belong to any of the categories considered. This model can subsequently be applied to previously unseen documents for classifying them according to these categories.

This approach has several advantages over the knowledge engineering approach. First of all, a higher degree of automation is introduced: The engineer needs to build not a text classifier, but an automatic builder of text classifiers (the learner). Once built, the learner can then be applied to generating many different classifiers for many different domains and applications; one only needs to feed it with the appropriate sets of training documents. By the same token, the previously mentioned problem of maintaining a classifier is solved by feeding new training documents appropriate for the revised set of classes. Many inductive learners are available off the shelf; if one of these is used, the only humanpower needed in setting up a TC system is that for manually classifying the documents to be used for training. For performing this latter task, less skilled humanpower is needed than for building an expert system, which is also advantageous. Consider also that, when an organization has previously relied on manual work for classifying documents, many preclassified documents are already available to be used as training documents when the organization decides to automate the process.

Most important, the accuracy of classifiers (i.e., their capability to make the right classification decisions) built by machine learning methods now rivals that of human professionals and usually exceeds that of classifiers built by knowledge engineering methods. This has brought about a wider acceptance of supervised learning methods, even outside academia. Although for certain applications (such as spam filtering) a combination of machine learning and knowledge engineering is still the basis of several commercial systems, it is fair to say that in most other TC applications (especially of the thematic type), the adoption of machine learning technology has been widespread.
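The distinction between the learner and the classifiers it builds can be sketched in a few lines of Python. The sketch is a deliberately simple illustration and not one of the learning methods cited in this article: the hypothetical function `train` plays the role of the learner, and the function it returns is a classifier that assigns a document to the class whose training documents share the most word occurrences with it. The function `accuracy` measures agreement with a set of labelled documents. The whitespace tokenizer, the toy training sets, and all names are assumptions made for the example.

```python
from collections import Counter, defaultdict


def tokenize(text):
    """Lowercase the text and split it on whitespace."""
    return text.lower().split()


def train(labelled_docs):
    """The learner: build a classifier from (text, label) pairs."""
    profiles = defaultdict(Counter)  # label -> word counts
    for text, label in labelled_docs:
        profiles[label].update(tokenize(text))
    # Normalise by profile size so that large classes are not favoured.
    totals = {label: sum(c.values()) for label, c in profiles.items()}

    def classify(text):
        """The classifier: pick the label whose profile matches best."""
        words = tokenize(text)

        def score(label):
            return sum(profiles[label][w] for w in words) / totals[label]

        # Ties are broken in favour of the alphabetically first label.
        return max(sorted(profiles), key=score)

    return classify


def accuracy(classifier, labelled_docs):
    """Fraction of documents whose predicted label equals the given label."""
    hits = sum(classifier(text) == label for text, label in labelled_docs)
    return hits / len(labelled_docs)


# The same learner yields different classifiers for different domains.
spam_filter = train([
    ("win cash prize now", "SPAM"),
    ("cheap pills win big", "SPAM"),
    ("meeting agenda for monday", "LEGITIMATE"),
    ("draft report attached for review", "LEGITIMATE"),
])
news_router = train([
    ("shares fell as markets closed", "FINANCE"),
    ("the team won the cup final", "SPORT"),
])
```

Revising the set of classes then amounts to calling `train` again with documents labelled according to the new scheme, rather than rewriting rules by hand.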

Learning Text Classifiers

Many different types of supervised learners have been used in TC (Sebastiani, 2002), including probabilistic “naive Bayesian” methods, Bayesian networks, regression methods, decision trees, Boolean decision rules, neural networks, incremental or batch methods for learning linear classifiers, example-based methods, classifier ensembles (including boosting methods), and support vector machines. The time span between the development of a new, supervised learning method and its application to TC has become narrower because machine learning researchers now view TC as a strategic and challenging application and one of the benchmarks of choice for the algorithms they develop. Although all of the techniques mentioned previously still retain their popularity, it is fair to say that in recent years support vector machines (Joachims, 1998) and boosting (Schapire & Singer, 2000) have been the two dominant learning methods in TC. This seems due to a combination of two factors: (a) these two methods have strong justifications in terms of computational learning theory, and (b) in comparative experiments on widely accepted benchmarks, they have outperformed all other competing approaches.

Building Internal Representations for Documents

The learners discussed in the previous section cannot operate on the documents as they are; the documents must be given internal representations that the learners (and classifiers, once built) can make sense of. It is thus customary to transform all the documents (i.e., those used in the training phase, in the testing phase, or in the operational phase of the classifier) into internal representations by means of methods used in text search, where the same need is also present. By means of these methods, a document is usually represented by a vector, where the dimensions of the vector correspond to the terms that occur in the training set, and the value of each individual entry corresponds to the weight that the term in question has for the document.

In TC applications of a thematic kind, the set of terms is usually made to coincide with the set of content-bearing words (i.e., all words but topic-neutral words such as articles, prepositions, etc.), possibly reduced to their morphological roots (stems) so as to avoid excessive stochastic dependence among different dimensions of the vector. Weights for these words are meant to reflect the word’s importance in determining the semantics of the document in which it occurs and are automatically computed by weighting functions. These functions usually rely on intuitions of a statistical kind, such as

•the more often a term occurs in a document, the more important it is for that document; and

•the more documents a term appears in, the less important that term is in characterizing the semantics of those documents.
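The two intuitions above are commonly combined in weighting functions of the tf-idf family. The following Python sketch shows one common variant, raw term frequency multiplied by the logarithm of the inverse document frequency. The article does not prescribe a specific formula, so the exact weighting, the whitespace tokenizer, and the names `tfidf_vectors`, `docs`, and `vectors` are assumptions.

```python
import math
from collections import Counter


def tfidf_vectors(documents):
    """Map each document to a {term: weight} vector using tf * log(N / df)."""
    tokenized = [doc.lower().split() for doc in documents]
    n_docs = len(tokenized)
    # Document frequency: number of documents in which each term occurs.
    df = Counter(term for tokens in tokenized for term in set(tokens))
    vectors = []
    for tokens in tokenized:
        tf = Counter(tokens)  # term frequency within this document
        vectors.append({t: tf[t] * math.log(n_docs / df[t]) for t in tf})
    return vectors


docs = [
    "the cat sat on the mat",
    "the dog chased the cat",
    "the stock market fell",
]
vectors = tfidf_vectors(docs)
```

In this toy collection a term that occurs in every document, such as `the`, receives weight 0 however often it is repeated, while a term confined to a single document, such as `mat`, receives the highest weight.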

In nonthematic TC applications, the opposite is often true. For instance, frequently used articles, prepositions, and punctuation (together with many other stylistic features) may be helpful clues in authorship attribution, while it is more unlikely that the frequencies of use of content-bearing words may be of help. This shows that choosing the right dimensions for the right task requires a deep understanding, on the part of the engineer, of the nature of the task.

Reducing the Dimensionality of the Vectors

The techniques described in the previous section tend to generate very large vectors, frequently with tens of thousands of dimensions. While such a situation is not problematic in text search, whose standard algorithms are fairly robust with respect to the dimensionality of the vectors, it is in TC, since the efficiency of many learning devices (e.g., neural networks) tends to degrade rapidly with the size of the vectors. In TC applications, it is thus customary to run a dimensionality reduction pass before starting to build the internal representations of the documents. This means identifying a new vector space in which to represent the documents in such a way that the new vectors have a much smaller number of dimensions than the original ones. Several techniques for dimensionality reduction have been devised within TC (or, more often, borrowed from the fields of machine learning and pattern recognition).

An important class of such techniques is feature extraction methods (e.g., term clustering methods, latent semantic indexing). Feature extraction methods define a new vector space in which each dimension is a combination of some or all of the original dimensions; their effect is usually a reduction of both the dimensionality of the vectors and the overall stochastic dependence among dimensions.

An even more important class of dimensionality reduction techniques is that of feature selection methods, which do not attempt to generate new terms, but try to select the best ones from the original set. The measure of quality for a term is its expected impact on the accuracy of the resulting classifier. To measure this, feature selection functions are employed for scoring each term according to this expected impact so that the highest scoring terms can be retained for the new vector space. These functions mostly come from statistics (e.g., chi-square) and information theory (e.g., mutual information, also known as information gain), and tend to encode, each in its own way, the intuition that the best terms for classification purposes are the ones that are distributed most differently across the different categories.
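As an illustration of a feature selection function, the Python sketch below scores each term with the chi-square statistic of a 2x2 contingency table (term present or absent, document inside or outside a category) and keeps the highest-scoring terms. Restricting the example to a single category, counting only the presence or absence of a term, and the names `chi_square`, `select_terms`, and `training` are assumptions made for the example.

```python
def chi_square(a, b, c, d):
    """Chi-square statistic of a 2x2 contingency table.

    a: in-category documents containing the term
    b: out-of-category documents containing the term
    c: in-category documents lacking the term
    d: out-of-category documents lacking the term
    """
    n = a + b + c + d
    denominator = (a + b) * (c + d) * (a + c) * (b + d)
    if denominator == 0:
        return 0.0  # a row or column is empty, so the term carries no signal
    return n * (a * d - b * c) ** 2 / denominator


def select_terms(labelled_docs, category, k):
    """Return the k terms with the highest chi-square score for `category`."""
    doc_terms = [(set(text.lower().split()), label == category)
                 for text, label in labelled_docs]
    vocabulary = set().union(*(terms for terms, _ in doc_terms))
    scores = {}
    for term in vocabulary:
        a = sum(1 for terms, pos in doc_terms if term in terms and pos)
        b = sum(1 for terms, pos in doc_terms if term in terms and not pos)
        c = sum(1 for terms, pos in doc_terms if term not in terms and pos)
        d = sum(1 for terms, pos in doc_terms if term not in terms and not pos)
        scores[term] = chi_square(a, b, c, d)
    # Sort by descending score, breaking ties alphabetically.
    return sorted(scores, key=lambda t: (-scores[t], t))[:k]


training = [
    ("free prize inside the box", "SPAM"),
    ("claim the free prize", "SPAM"),
    ("the meeting is at noon", "LEGITIMATE"),
    ("the report was attached", "LEGITIMATE"),
]
best = select_terms(training, "SPAM", 2)
```

A term such as `the`, which occurs in every document, is distributed identically across the two classes and scores 0, whereas `free` and `prize`, which occur only in SPAM documents, receive the highest scores.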

CHALLENGES

Text categorization, especially in its machine learning incarnation, is now a fairly mature technology that has delivered working solutions in a number of applicative contexts. Still, a number of challenges remain for TC research.

The first and foremost challenge is delivering high accuracy in all applicative contexts. While highly effective classifiers have been produced for applicative domains such as the thematic classification of professionally authored texts (such as newswires), in other domains reported accuracies are far from satisfying. Such applicative contexts include the classification of Web pages, where the use of text is more varied and obeys rules different from those of linear verbal communication; spam filtering, a task that has an adversarial nature in that spammers adapt their spamming strategies to circumvent the latest spam filtering technologies; and authorship attribution, in which current technology is not yet able to tackle the inherent stylistic variability among texts written by the same author.

A second important challenge is to bypass the document labelling bottleneck (i.e., the fact that labelling, namely manually classifying, documents for use in the training phase is costly).

To this end, semisupervised methods have been proposed that allow building classifiers from a small sample of labelled documents and a usually larger sample of unlabelled documents (Nigam, McCallum, Thrun, & Mitchell, 2000). However, the problem of learning text classifiers mainly from unlabelled data is still, unfortunately, open.
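The idea of exploiting unlabelled documents can be illustrated with self-training, a simpler relative of the EM-based method of Nigam et al. (2000) and not a reimplementation of it: a classifier trained on the labelled sample labels the unlabelled documents, and the enlarged set is then used for retraining. The word-overlap classifier, the fixed number of rounds, the absence of any confidence threshold, and all names in this Python sketch are assumptions chosen for brevity.

```python
from collections import Counter, defaultdict


def train(labelled_docs):
    """Build a word-overlap classifier from (text, label) pairs."""
    profiles = defaultdict(Counter)  # label -> word counts
    for text, label in labelled_docs:
        profiles[label].update(text.lower().split())
    totals = {label: sum(c.values()) for label, c in profiles.items()}

    def classify(text):
        words = text.lower().split()

        def score(label):
            return sum(profiles[label][w] for w in words) / totals[label]

        # Ties are broken in favour of the alphabetically first label.
        return max(sorted(profiles), key=score)

    return classify


def self_train(labelled_docs, unlabelled_docs, rounds=2):
    """Retrain repeatedly on the labelled plus machine-labelled documents."""
    classifier = train(labelled_docs)
    for _ in range(rounds):
        guessed = [(text, classifier(text)) for text in unlabelled_docs]
        classifier = train(labelled_docs + guessed)
    return classifier


labelled = [
    ("win cash prize", "SPAM"),
    ("project meeting notes", "LEGITIMATE"),
]
unlabelled = [
    "win a free holiday prize",
    "notes from the budget meeting",
]
semi_supervised = self_train(labelled, unlabelled)
```

After self-training, words that appear only in the unlabelled sample, such as `holiday` or `budget`, contribute to classification decisions even though no human ever labelled a document containing them. Errors made when labelling the unlabelled sample are also learned, which is one reason the problem remains difficult.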

REFERENCES

Diederich, J., Kindermann, J., Leopold, E., & Paaß, G. (2003). Authorship attribution with support vector machines. Applied Intelligence, 19(1/2), 109-123.

Drucker, H., Vapnik, V., & Wu, D. (1999). Support vector machines for spam categorization. IEEE Transactions on Neural Networks, 10(5), 1048-1054.

Dumais, S. T., & Chen, H. (2000). Hierarchical classification of Web content. In N. J. Belkin, P. Ingwersen, & M.-K. Leong (Eds.), Proceedings of SIGIR-00, 23rd ACM International Conference on Research and Development in Information Retrieval (pp. 256-263). Athens, Greece: ACM Press.

Fall, C. J., Törcsvári, A., Benzineb, K., & Karetka, G. (2003). Automated categorization in the International Patent Classification. SIGIR Forum, 37(1), 10-25.

Giorgetti, D., & Sebastiani, F. (2003). Multiclass text categorization for automated survey coding. In Proceedings of SAC-03, 18th ACM Symposium on Applied Computing (pp. 798-802). Melbourne, Australia: ACM Press.

Hayes, P. J., & Weinstein, S. P. (1990). Construe/Tis: A system for content-based indexing of a database. Innovative Applications of Artificial Intelligence (pp. 49-66). Menlo Park, CA: AAAI Press.

Joachims, T. (1998). Text categorization with support vector machines: Learning with many relevant features. In C. Nédellec & C. Rouveirol (Eds.), Proceedings of ECML-98, 10th European Conference on Machine Learning (pp. 137-142). Chemnitz, DE: Springer Verlag.

Koppel, M., Argamon, S., & Shimoni, A. R. (2002). Automatically categorizing written texts by author gender. Literary and Linguistic Computing, 17(4), 401-412.

Maron, M. (1961). Automatic indexing: An experimental inquiry. Journal of the Association for Computing Machinery, 8(3), 404-417.

Nigam, K., McCallum, A. K., Thrun, S., & Mitchell, T. M. (2000). Text classification from labeled and unlabeled documents using EM. Machine Learning, 39(2/3), 103-134.

Pang, B., Lee, L., & Vaithyanathan, S. (2002). Thumbs up? Sentiment classification using machine learning techniques. Proceedings of EMNLP-02, 7th Conference on Empirical Methods in Natural Language Processing (pp. 79-86). Philadelphia: Association for Computational Linguistics.

Schapire, R. E., & Singer, Y. (2000). BoosTexter: A boosting-based system for text categorization. Machine Learning, 39(2/3), 135-168.

Sebastiani, F. (2002). Machine learning in automated text categorization. ACM Computing Surveys, 34(1), 1-47.

Stamatatos, E., Fakotakis, N., & Kokkinakis, G. (2000). Automatic text categorization in terms of genre and author. Computational Linguistics, 26(4), 471-495.

KEY TERMS

Boosting: One of the most effective types of learners for text categorization. A classifier built by boosting methods is actually a committee (or ensemble) of classifiers, and the classification decision is made by combining the decisions of all the members of the committee. The members are generated sequentially by the learner, which attempts to specialize each member by correctly classifying the training documents the previously generated members have misclassified most often.

Classifier: An algorithm that, given as input two or more classes (or labels), automatically decides to which class or classes a given document belongs, based on an analysis of the contents of the document. A single-label classifier is one that picks one class for each document. When the classes among which a single-label classifier must choose are just two, it is called a binary classifier. A multilabel classifier is one that may pick zero, one, or many classes for each document.

Dimensionality Reduction: A phase of classifier construction that reduces the number of dimensions of the vector space in which documents are represented for the purpose of classification. Dimensionality reduction beneficially affects the efficiency of both the learning process and the classification process, since shorter vectors need to be handled by the learner and by the classifier. It often benefits the effectiveness of the classifier too, since shorter vectors tend to limit the tendency of the learner to “overfit” the training data.

Learner (or Supervised Learning) Algorithm: A general inductive process that automatically generates a classifier from a training set of preclassified documents.

Supervised (Machine) Learning: A form of machine learning (i.e., improving the machine’s performance at performing a task by exposing the machine to experiential data). A learning method is supervised when it relies on the exposure to preclassified data, that is, to data items that have previously been labelled by classes (or categories) from a predefined finite set.

Support Vector Machines: One of the most effective types of learners for text categorization. They attempt to build a classifier that maximizes the margin (i.e., the minimum distance between the hyperplane that represents the classifier and the vectors that represent the documents). Different functions for measuring this distance (kernels) can be plugged in and out; when nonlinear kernels are used, this corresponds to mapping the original vector space into a higher-dimensional vector space in which the separation between the examples belonging to different categories may be accounted for more easily.

Terms: The dimensions of the vector space in which documents are represented according to the vector space model. In thematic applications of text categorization, terms usually coincide with the content-bearing words (or with their “stems”) that occur in the training set, while in nonthematic applications they may be taken to coincide with the topic-neutral words or with other custom-defined global characteristics of the document.

Vector Space Model: A popular method for representing documents and determining their semantic relatedness, originally devised in the mid 1960s for text search applications and subsequently applied in the representation of documents for text categorization applications. Documents are represented as vectors in a vector space generated by the terms that occur in a document corpus (the document collection in text search, the training set in text categorization), and semantic relatedness is usually measured by the cosine of the angle that separates the two vectors.
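As an illustration of the cosine measure described above, the following minimal sketch represents each document as a vector of raw term frequencies and computes the cosine of the angle between two such vectors. The function name `cosine_similarity`, the whitespace tokenization, and the use of unweighted term counts are assumptions made for this example only; practical systems typically apply stemming and term weighting.

```python
import math
from collections import Counter


def cosine_similarity(doc_a, doc_b):
    """Cosine of the angle between two term-frequency vectors.

    Assumption: terms are lowercased, whitespace-separated words and
    each dimension holds a raw term count (no weighting scheme).
    """
    vec_a = Counter(doc_a.lower().split())
    vec_b = Counter(doc_b.lower().split())
    # Only terms present in both documents contribute to the dot product.
    dot = sum(vec_a[t] * vec_b[t] for t in vec_a.keys() & vec_b.keys())
    norm_a = math.sqrt(sum(c * c for c in vec_a.values()))
    norm_b = math.sqrt(sum(c * c for c in vec_b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # an empty document is treated as unrelated to anything
    return dot / (norm_a * norm_b)
```

Two documents with the same term distribution score close to 1, while documents with no terms in common score 0.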

Text Databases

Gonzalo Navarro

University of Chile, Chile

INTRODUCTION

A text is any sequence of symbols (or characters) drawn from an alphabet. A large portion of the information available worldwide in electronic form is actually in text form (other popular forms are structured and multimedia information). Some examples are natural language text (e.g., books, journals, newspapers, jurisprudence databases, corporate information, the Web), biological sequences (e.g., DNA and protein sequences), continuous signals (e.g., audio and video sequence descriptions, time functions), and so on. Recently, due to the increasing complexity of applications, text and structured data are being merged into so-called semistructured data, which is expressed and manipulated in formats such as XML (out of the scope of this review).

As more text data becomes available, the challenge of searching it for queries of interest becomes more demanding. A text database is a system that maintains a (usually large) text collection and provides fast and accurate access to it. These two goals are relatively orthogonal, and both are critical if one is to profit from the text collection.

Traditional database technologies are not well suited to handle text databases. Relational databases structure the data in fixed-length records whose values are atomic and are searched for by equality or ranges. There is no general way to partition a text into atomic records, and treating the whole text as an atomic value is of little interest for the search operations required by applications. The same problem occurs with multimedia data types, hence the need for specific technology to manage texts.

The simplest query to a text database is a string pattern: The user enters a string and the system points out all the positions of the collection where the string appears. This simple approach is insufficient for some applications in which the user wishes to find a more general pattern, not just a plain string. Patterns may contain wild cards (i.e., characters that may appear zero or more times), optional characters that may appear in the text or not, classes of characters (i.e., string positions that match a set of characters rather than a single one), gaps that match any text substring within a range of lengths, and so forth. In many applications, patterns must be regular expressions, composed of simple strings and union, concatenation, or repetition of other regular expressions. These extensions are typical of computational biology applications but also appear in natural language searches.
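The pattern extensions listed above can be sketched with Python's standard `re` module, used here only as a convenient stand-in for a text database's pattern language. The helper `find_all` and the sample pattern are hypothetical: the pattern combines a class of characters (`[ao]`), a gap of bounded length (`.{0,2}`), and an optional character (`s?`).

```python
import re


def find_all(regex, text):
    """Return (start position, matched substring) for every occurrence."""
    return [(m.start(), m.group()) for m in re.finditer(regex, text)]


# "c", then a class of characters, "d", a gap of 0 to 2 arbitrary
# characters, "bra", and finally an optional trailing "s".
PATTERN = r"c[ao]d.{0,2}bras?"

occurrences = find_all(PATTERN, "abracadabra and abracodebras")
```

Here `occurrences` holds one entry per match, each giving the text position where the match starts together with the substring that matched.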

In addition, a text database may provide approximate searching, that is, means for recovering from different kinds of errors that the text collection (or the user query) may contain. There may be, for example, typing, spelling, or optical character recognition errors in natural language texts; experimental measurement errors or evolutionary differences in biological sequences; and noise or irrelevant differences in continuous signals. Depending on the application, the text database should provide a reasonable error model that permits users to specify some tolerance in their searches. A simple error model is the edit distance, which gives an upper limit to the total number of character insertions, deletions, and substitutions needed to match the user query with a text substring. More complex models give lower costs to more probable changes in the sequences.
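The edit distance model can be illustrated with the classical dynamic programming formulation of approximate searching, in which a match is allowed to start at any text position. The sketch below is a plain O(mn) version written for clarity, not the method of any particular system; the name `approx_search` and the parameter `k` (the error tolerance) are choices made for this example.

```python
def approx_search(pattern, text, k):
    """Return the text positions where a substring ends that matches
    `pattern` with at most `k` insertions, deletions, or substitutions."""
    m = len(pattern)
    # col[i] = minimum edit distance between pattern[:i] and some
    # substring of the text ending at the current position.
    col = list(range(m + 1))
    ends = []
    for j, ch in enumerate(text):
        prev_diag = col[0]  # value of row i-1 in the previous column
        col[0] = 0          # a match may start anywhere in the text
        for i in range(1, m + 1):
            cost = 0 if pattern[i - 1] == ch else 1
            new_value = min(prev_diag + cost,  # match or substitution
                            col[i] + 1,        # extra text character
                            col[i - 1] + 1)    # missing pattern character
            prev_diag = col[i]
            col[i] = new_value
        if col[m] <= k:
            ends.append(j)
    return ends
```

With `k = 0` the function reports only the end positions of exact occurrences; larger values of `k` make the search progressively more tolerant.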

The previously mentioned searches are collectively called syntactic search, because they are expressed in terms of sequences of characters present in the text. In natural language texts, semantic search is of great value. In this case, the user expresses an information need and the system retrieves portions of the text collection (i.e., documents) that are relevant to that need, even if the query words do not directly appear in the answer. A model that determines the relevance of a document with respect to a query is necessary. This model ranks the documents and offers the highest ranked documents to the user. Unlike in the syntactic search, there are no right or wrong answers, just better and worse ones. However, because the user’s time spent browsing the results is the most valuable resource, good ranking is essential in the success of the search.

SYNTACTIC SEARCH

In syntactic searching, the desired outcome of a search pattern is clear, so the main concern of a text database is efficiency. Two approaches are possible with pattern matching: sequential and indexed searching (these can be combined, as we will see). Sequential searching assumes that it is not possible to preprocess the text, so only the pattern is preprocessed and then the whole text

Copyright © 2006, Idea Group Inc., distributing in print or electronic forms without written permission of IGI is prohibited.

TEAM LinG

Text Databases

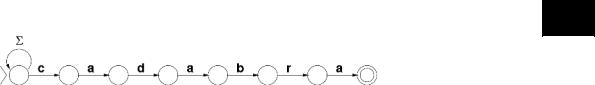

Figure 1. An NFA to recognize strings terminated in pattern “cadabra”

6

database is sequentially scanned. Indexed searching, on the other hand, preprocesses the text so as to build a data structure of it (an “index”), which can be used later to speed up searches. Building an index is usually a time and memory demanding task, so indexed searching is possible only when several conditions are met: (a) The text must be large enough to justify it against the simpler sequential search, (b) the text must change rarely enough so as to amortize the indexing work over many queries, and (c) there must be enough space to hold the index, which can be quite large in some applications.

Sequential Searching

Simple String Matching

In the simplest search problem, we are given a string pattern P of m characters, a text T of n characters (which may be the concatenation of all texts in the collection), and are required to point out all the occurrences of P in T (Navarro & Raffinot, 2002). There exist many string matching algorithms. The most famous are Knuth-Morris-Pratt (KMP), for being the first in achieving the optimal O(n) worst-case time; and Boyer-Moore (BM), for being the first in achieving so-called sublinear search time, meaning that it does not inspect every text character (actually, its search time improves as m grows). Another famous algorithm is Backward DAWG Matching (BDM), for its average-case optimality, O(n log_σ(m)/m), where σ is the alphabet size, if we assume a uniformly distributed text. Many other algorithms are relevant to theorists for their algorithmic features: optimal in space usage, bounded time to access the next text character, simultaneous worst- and average-case optimality, and so on.

In practice, the most efficient string-matching algorithms derive from their theoretically more appealing variants. Shift-Or can be seen as a derivation of KMP that is twice as fast and O(n) in most practical cases. Horspool is a simplification of BM that is usually the fastest in searching natural language text. Backward Nondeterministic DAWG Matching (BNDM) is a derivation of BDM that is faster to search biological sequences of moderate length. Backward Oracle Matching (BOM) also derives from BDM and is the fastest for longer biological sequences.
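To make the sublinear behaviour of the Boyer-Moore family concrete, the following is a minimal sketch of the Horspool simplification. The function name `horspool_search` is chosen for this example, and the window comparison is delegated to a Python slice comparison for brevity, whereas tuned implementations compare characters explicitly.

```python
def horspool_search(pattern, text):
    """Return the starting positions of all occurrences of pattern in text."""
    m, n = len(pattern), len(text)
    if m == 0 or m > n:
        return []
    # For each character of pattern[:-1], the distance from its rightmost
    # occurrence to the end of the pattern. Characters that do not occur
    # there allow a full shift of m positions.
    shift = {ch: m - 1 - i for i, ch in enumerate(pattern[:-1])}
    hits = []
    pos = 0
    while pos <= n - m:
        if text[pos:pos + m] == pattern:
            hits.append(pos)
        # The shift depends only on the text character aligned with the
        # last pattern position, so many text characters are never read.
        pos += shift.get(text[pos + m - 1], m)
    return hits
```

For example, `horspool_search("cadabra", "abracadabra")` inspects only two windows: the first is skipped by four positions because its last character is "d", and the second is the occurrence at position 4.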

The use of finite automata is at the kernel of pattern matching. Many of the previously mentioned algorithms rely on automata. Figure 1 shows a nondeterministic finite automaton (NFA) that reaches its final state every time the string it has consumed finishes with a given pattern P. If fed with the text, this automaton will signal all the endpoints of occurrences of P in T. Algorithm KMP consists essentially in making that NFA deterministic (a DFA), and using it to process each text character in constant time, for O(n) total search time. Shift-Or, on the other hand, uses the automaton directly in nondeterministic form. The NFA states are mapped to bits in a computer word, and a constant number of logical and arithmetic operations over the computer word simulate the update of all the NFA states simultaneously, as a function of a new text character. The result is twice as fast as KMP in practice, and O(n) provided m ≤ w, with w being the number of bits in the computer word (32 or 64 nowadays). For m > w, Shift-Or is still O(n) on average. This technique to map NFA states to bits is called bit-parallelism, and it has been extensively used in pattern matching to simulate NFAs directly without converting them to DFAs.
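The bit-parallel simulation used by Shift-Or can be sketched in a few lines. In this illustration a bit set to 0 means that the corresponding NFA state is active. The function name `shift_or_search` is an assumption of the example, and because Python integers are unbounded the state is explicitly masked to m bits, which stands in for the fixed-width computer word of a real implementation.

```python
def shift_or_search(pattern, text):
    """Return the starting positions of all occurrences of pattern in text."""
    m = len(pattern)
    if m == 0:
        return []
    all_ones = (1 << m) - 1
    # masks[c] has bit i cleared exactly when pattern[i] == c.
    masks = {}
    for i, ch in enumerate(pattern):
        masks[ch] = masks.get(ch, all_ones) & ~(1 << i)
    accept = 1 << (m - 1)   # bit of the final NFA state
    state = all_ones        # initially no state is active
    hits = []
    for j, ch in enumerate(text):
        # One shift and one OR update every NFA state at once: the shift
        # moves each active state forward (and feeds in the always-active
        # initial state), the OR kills states whose character mismatches.
        state = ((state << 1) | masks.get(ch, all_ones)) & all_ones
        if (state & accept) == 0:
            hits.append(j - m + 1)
    return hits
```

Each text character costs a constant number of word operations regardless of how many NFA states are active, which is why the method is O(n) as long as the pattern fits in one computer word.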

In Figure 2 we see an NFA that recognizes every reversed prefix of a pattern P, that is, a prefix of P read backwards (a prefix is a substring that starts at the beginning of the string). Note that the NFA will have active states as long as we have fed it with a reversed substring of P. This automaton is used by BDM and BNDM, the former by converting it to deterministic and the latter with bit-parallelism. The idea is to build such an NFA over the reverse pattern. Given a text window (segment of length m) where the pattern could appear, we feed the automaton with the window characters read right to left. If the automaton runs out of active states at some point, it means that the window suffix we have read is not a substring of P, so P cannot appear in any window containing the suffix read. Hence, we can shift the window over T to the position following the window character that killed the automaton. Actually, we can remember the last time the automaton signaled a prefix of P and shift to align the window to that position. If, on the other hand, we read the whole window and the automaton still has active states, then P is in the window, so we report it and shift to align the window to the last prefix recognized (see Figure 3, left side). Algorithm BOM is also based on determinizing this NFA, albeit in
